CS7642 | Reinforcement Learning

CS7642 Lecture Notes

Part 1: Reinforcement Learning Foundations

RL is a fundamental science trying to understand the optimal way to make decisions.

A large part of the human brain / behavior is linked to a dopamine system (reward system), this neurotransmitter dopamine reflects exactly on one of the main algorithms we apply in RL.

1a. Introduction to Reinforcement Learning

This course expands on the RL mini-course from the Machine Learning class, going deeper into both theory and practice of reinforcement learning.

Theory

- Convergence of RL algorithms

- Convergence rates and sample complexity

- Bounds on mistakes before convergence

- Situations where convergence fails

Practice & Topics Covered

- Temporal Difference Learning (TD Lambda)

- Reward Shaping: expressing goals via reward functions; the sole communication channel with RL algorithms

- Generalization & Scaling: tying into abstraction

- POMDPs: decision-making under partial observability

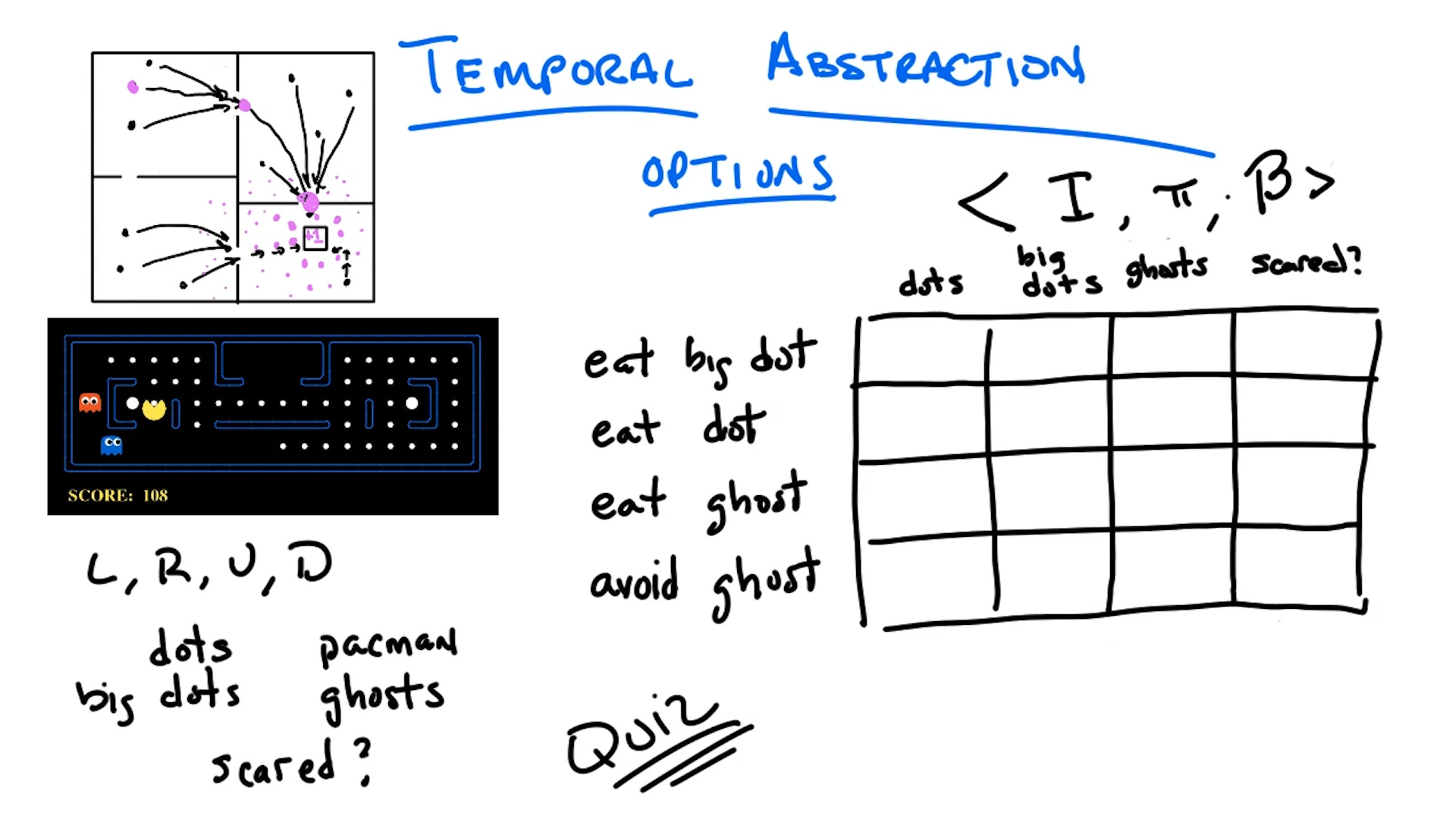

- Options Framework: temporal abstraction in RL

- Monte Carlo Methods

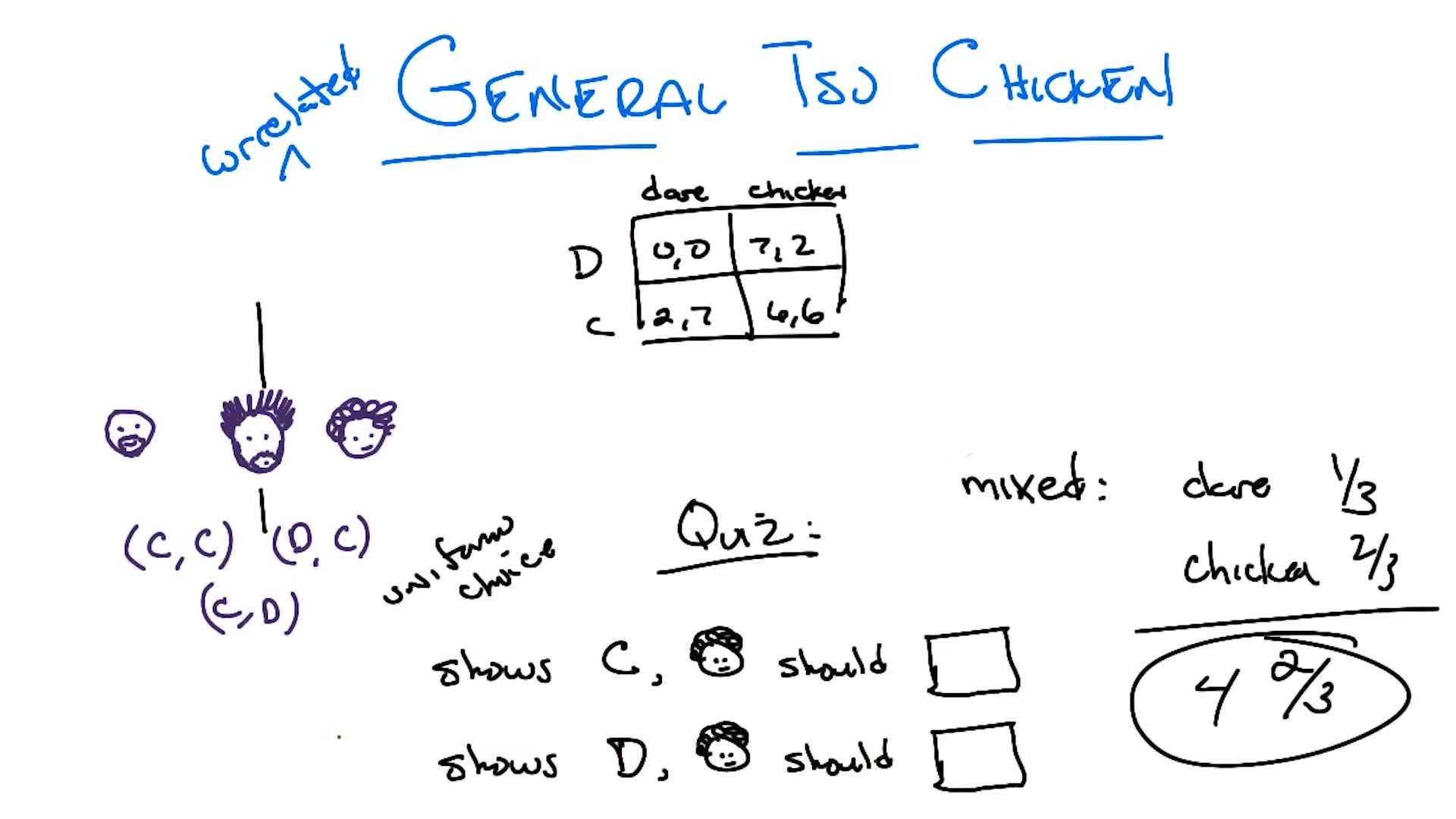

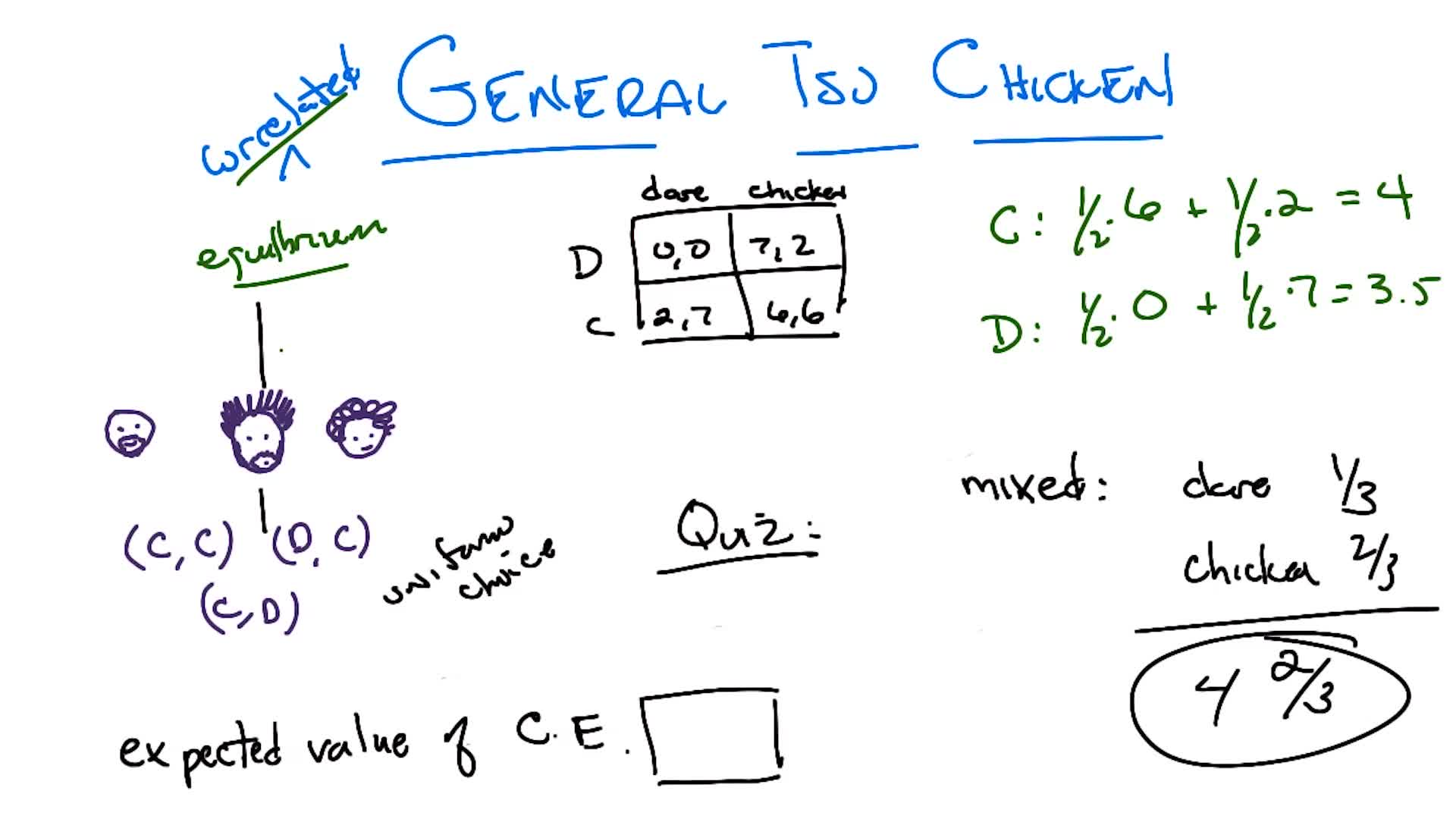

- Game Theory: solution concepts beyond Nash equilibria (e.g. correlated equilibrium)

[Supplementary] Introduction to Reinforcement Learning

Where RL Fits in AI

Artificial Intelligence: the ability of machines to simulate human behavior.

AI encompasses many subfields: planning (GPS navigation, resource allocation), knowledge-based AI (expert systems for medical diagnosis, teaching, etc.), machine learning, computer vision, robotics, and more. As these technologies mature, they often stop being perceived as “AI” and become just software.

Machine Learning narrows this down: simulating human behavior by learning from data (as opposed to planning, which uses clever algorithms without a data-learning requirement). ML has three main branches:

- Supervised Learning: learn from labeled data (input-output pairs) to generalize to unseen examples (e.g. image classification)

- Unsupervised Learning: learn structure from unlabeled data (e.g. clustering)

- Reinforcement Learning: learn to make optimal decisions through interaction with an environment

The key insight: supervised/unsupervised learning help you perceive (classify, cluster), but ultimately what you care about is acting optimally based on those perceptions. RL directly addresses the decision-making problem.

What Makes RL Different

RL is characterized by three types of feedback (Littman, Nature 2015):

| Supervised ML | Tabular RL | Deep RL | |

|---|---|---|---|

| Sequential | No (one-shot) | Yes | Yes |

| Evaluative | No (supervised) | Yes | Yes |

| Sampled | Yes | No (exhaustive) | Yes |

1. Sequential Feedback

Actions determine not just immediate outcomes but have long-term consequences. The agent must learn to balance immediate and long-term goals.

This gives rise to the credit assignment problem: when a reward is received many steps after the actions that caused it, which actions deserve credit?

Example: A robot navigating to a goal has two paths. Path A starts with $-1$ but leads into $-100$. Path B has repeated $-10$ steps but lower total cost. The optimal choice depends on the entire sequence, not individual steps.

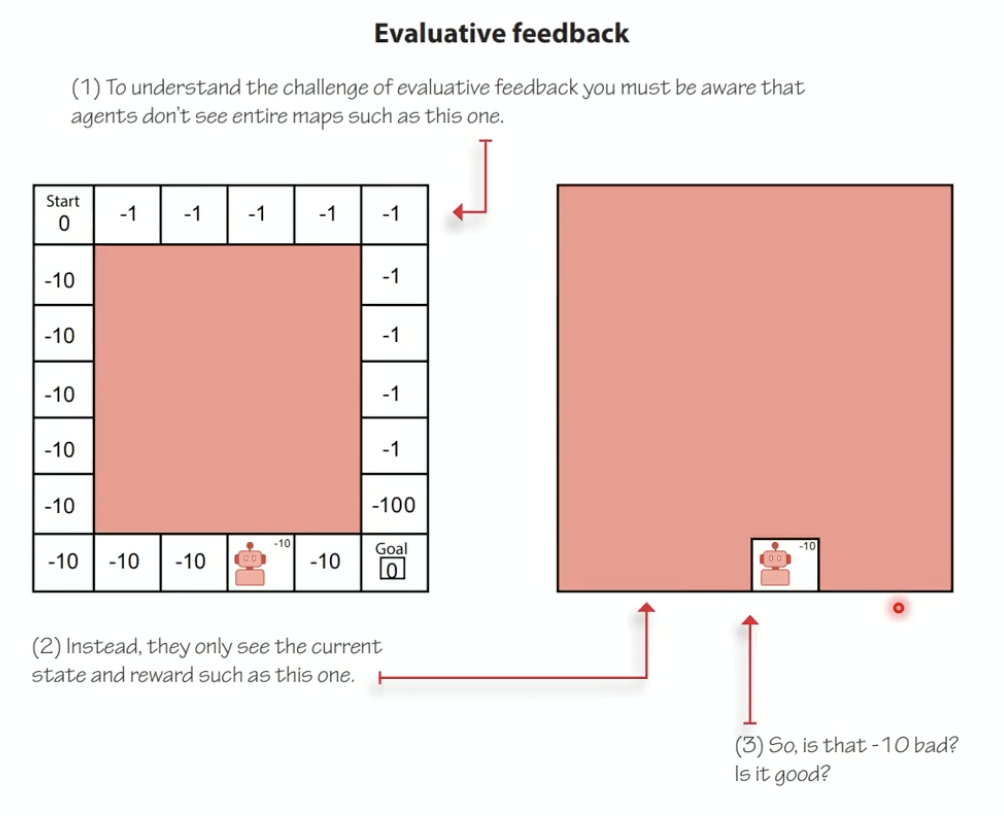

2. Evaluative Feedback

Rewards are relative, not supervisory. The environment doesn’t tell the agent what the correct action is; it only provides a scalar signal indicating how well the agent is doing.

- You don’t know the maximum achievable reward

- You must balance exploration (improving your estimates by trying new things) vs exploitation (acting on your current best estimates)

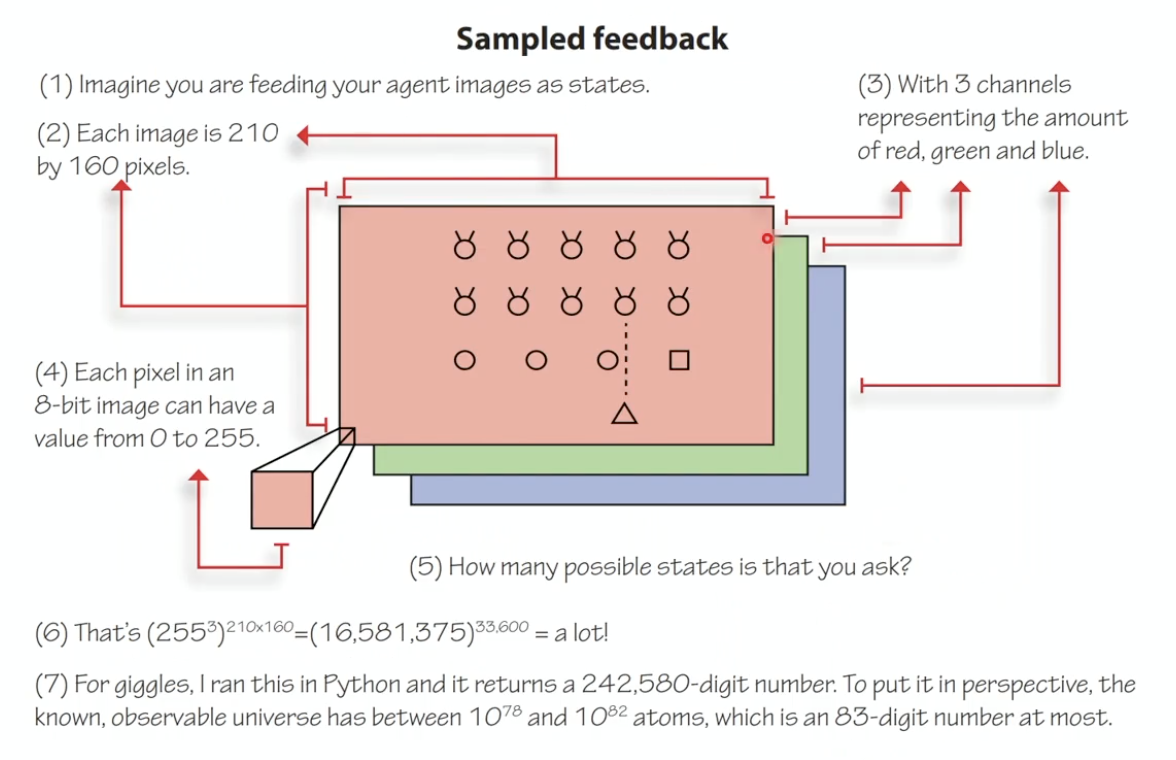

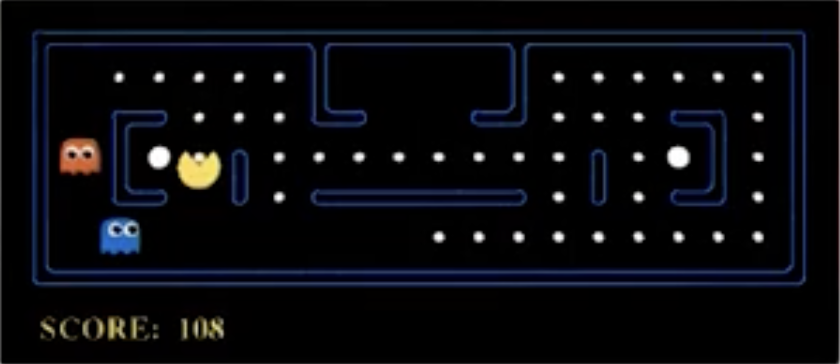

3. Sampled Feedback

The agent cannot experience every possible state. It must generalize from limited experience to draw conclusions about states/actions it hasn’t encountered.

Example: An Atari game frame is $210 \times 160 \times 3$ pixels, each in $[0, 255]$. The state space is astronomically large ($\approx 10^{242,000}$), impossible to exhaustively explore. The agent must generalize.

Course Structure Note: In Assignment 1 (value iteration / policy iteration), the MDP is given, so evaluative and sampled feedback are relaxed: you solve for the exact optimal policy. In Part 2 (Q-learning, SARSA), evaluative feedback is introduced (explore vs exploit), but state/action spaces remain discrete (exhaustive). Later, all three feedback types are present.

Deep Reinforcement Learning

Deep RL uses multi-layer non-linear function approximators (typically deep neural networks) within the RL loop. The network can approximate different components:

- Policy-based RL: network maps observations $\rightarrow$ actions

- Value-based RL: network approximates value functions

- Model-based RL: network approximates the environment dynamics, enabling planning

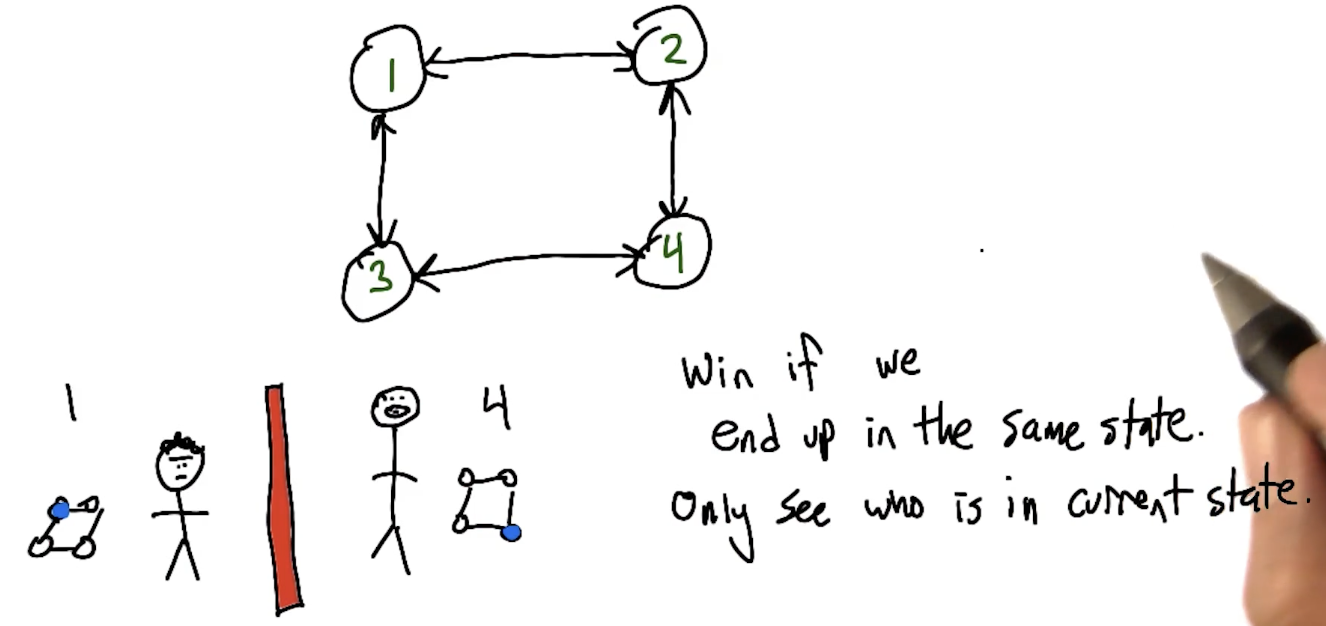

Multi-Agent Reinforcement Learning

Standard RL assumes a stationary environment. When other learning agents are present:

- The environment becomes non-stationary (other agents change their policies)

- Strategies that work against one opponent behavior may fail when the opponent adapts

- Requires specialized algorithms for coordination, competition, and human-AI collaboration

Course Note: Project 3 involves implementing multi-agent scenarios with independent learners, each with their own neural networks, operating in a shared environment.

RL Success Stories

| Domain | System | Key Achievement |

|---|---|---|

| Board Games | AlphaGo / AlphaGo Zero / AlphaZero | Defeated world champion at Go; AlphaZero learns from scratch without human data |

| Video Games | AlphaStar (DeepMind) | Grandmaster-level StarCraft II |

| Video Games | OpenAI Five | Defeated professional Dota 2 teams |

| Locomotion | PPO (OpenAI, 2016) | Same algorithm learns locomotion across different simulated bodies |

| Robotics | Sergey Levine (Berkeley) | Camera-to-robotic-control manipulation |

| Robotics | OpenAI Dactyl | Robotic hand solves Rubik’s cube-like block manipulation |

| Infrastructure | DeepMind Data Center Cooling | Live production system reducing energy consumption |

| Navigation | DeepMind Loon | RL-controlled stratospheric balloons riding wind currents |

| Aerospace | Alpha Dogfight Trials (Lockheed Martin) | Defeated human fighter pilot (with truth state information) |

| Science | AlphaFold (DeepMind) | Protein structure prediction |

| Racing | GT Sophy (Sony AI) | Best Gran Turismo drivers; deployed on PlayStation |

| NLP | ChatGPT (OpenAI) | RLHF phase uses reinforcement learning |

| Mathematics | AlphaProof (DeepMind, 2024) | 28/42 points at International Mathematical Olympiad (silver medal level) |

Key Takeaway

“If a machine is expected to be infallible, it cannot also be intelligent.” : Alan Turing

Intelligence is about trial and error, making decisions under uncertainty, learning from mistakes, and accurately estimating outcomes. This course is about exactly that.

1b. Smoov & Curly’s Bogus Journey

Note: This section includes additional content from Supplementary Lectures on MDPs & Planning Methods

This is the mathematical heart of Part 1: we formalize the decision-making problem as an MDP, derive the Bellman equation, and develop algorithms (value iteration, policy iteration) to solve it. Everything in later lectures builds on this foundation.

The Three Types of Learning (Recap)

| Paradigm | Given | Learn | Goal |

|---|---|---|---|

| Supervised | $(x, y)$ pairs | $f: x \rightarrow y$ | Function approximation |

| Unsupervised | $x$’s only | $f: x \rightarrow$ description | Clustering / description |

| Reinforcement | $(x, z)$ pairs | $f$ that generates $y$’s | Decision making |

In RL, $x$ = states, $z$ = rewards, $y$ = actions, $f$ = policy ($\pi$). We are not told the correct action (as in supervised learning); instead we observe rewards and must figure out which actions led to good outcomes.

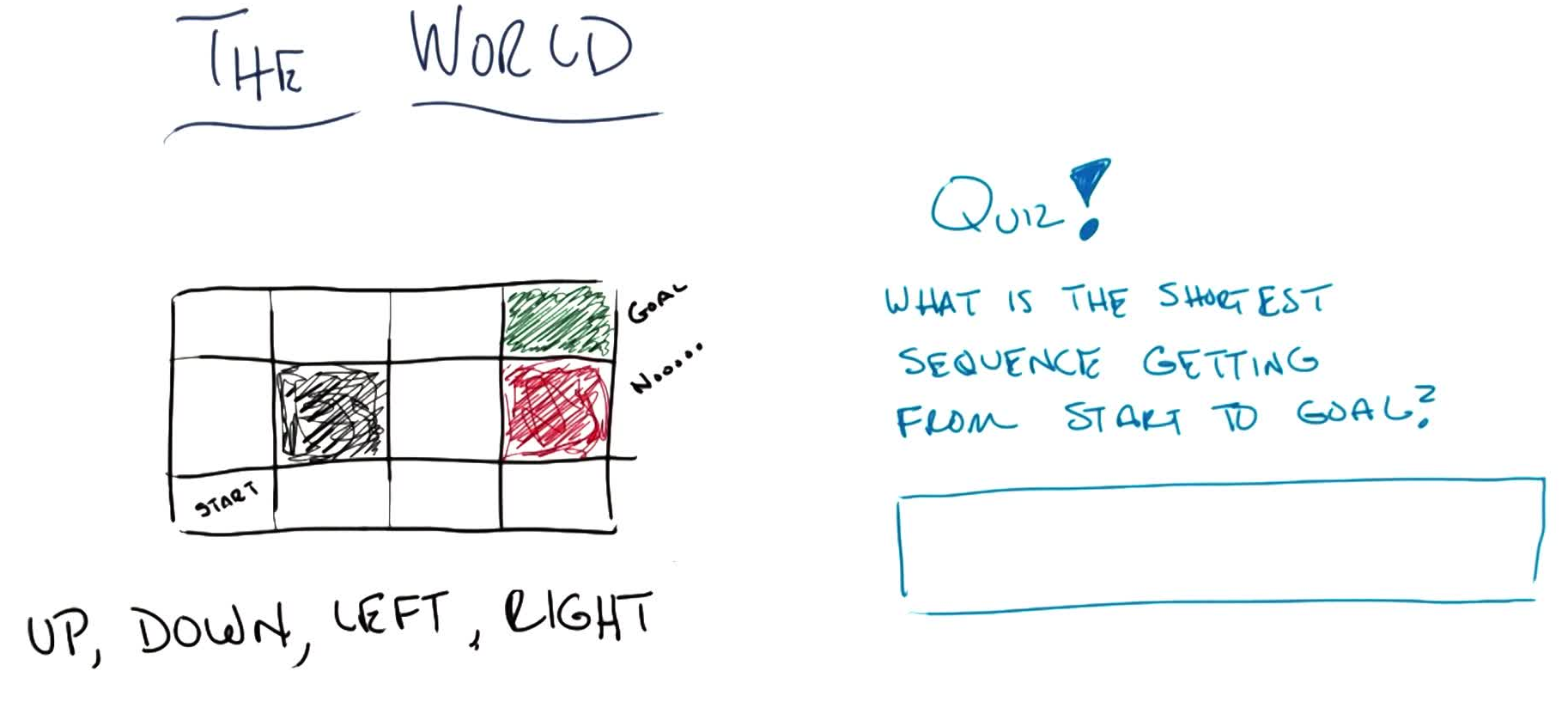

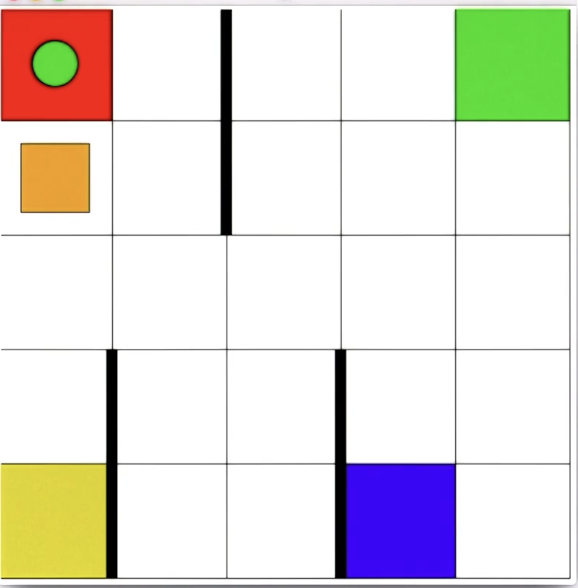

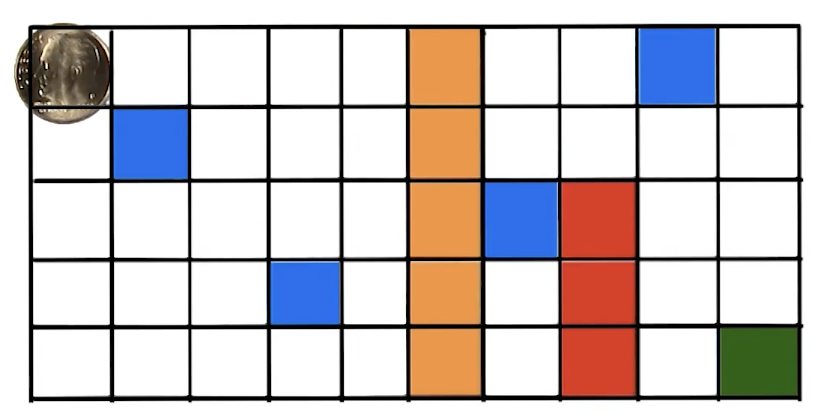

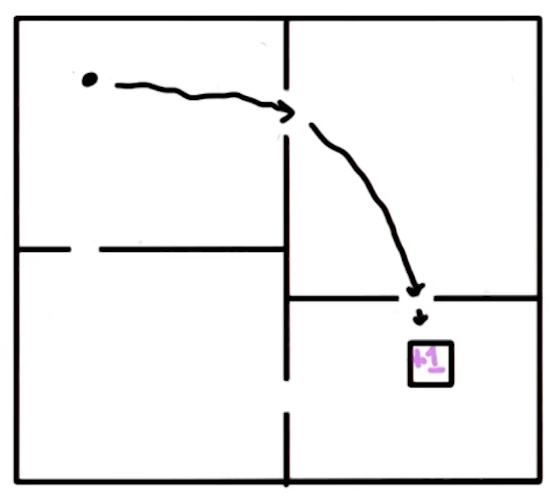

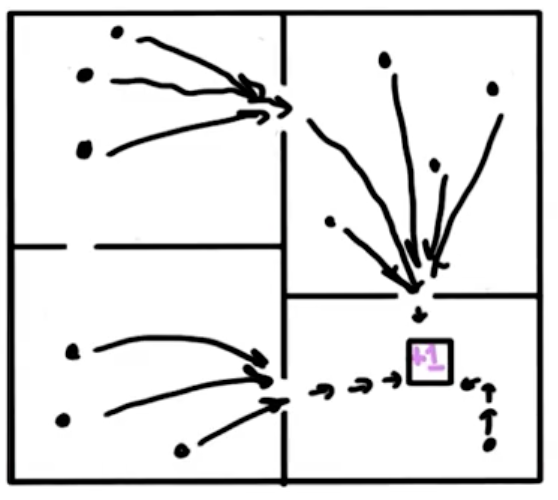

Grid World Example

Consider a $3 \times 4$ grid world with:

- Start state: bottom-left $(1,1)$

- Goal: top-right (green, $+1$), absorbing

- Trap: below goal (red, $-1$), absorbing

- Wall: one impassable cell at $(2,2)$

- Actions: Up, Down, Left, Right

- Hitting a boundary: stay in place

Deterministic case: the shortest path from start to goal is 5 steps (e.g. U, U, R, R, R).

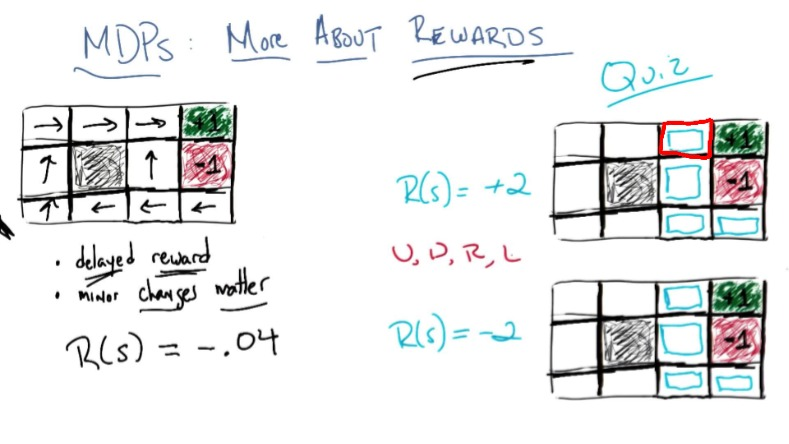

Quiz 1: What is the shortest sequence getting from START to GOAL?

Answer: U, U, R, R, R (or equivalently R, R, U, U, R). Multiple optima exist.

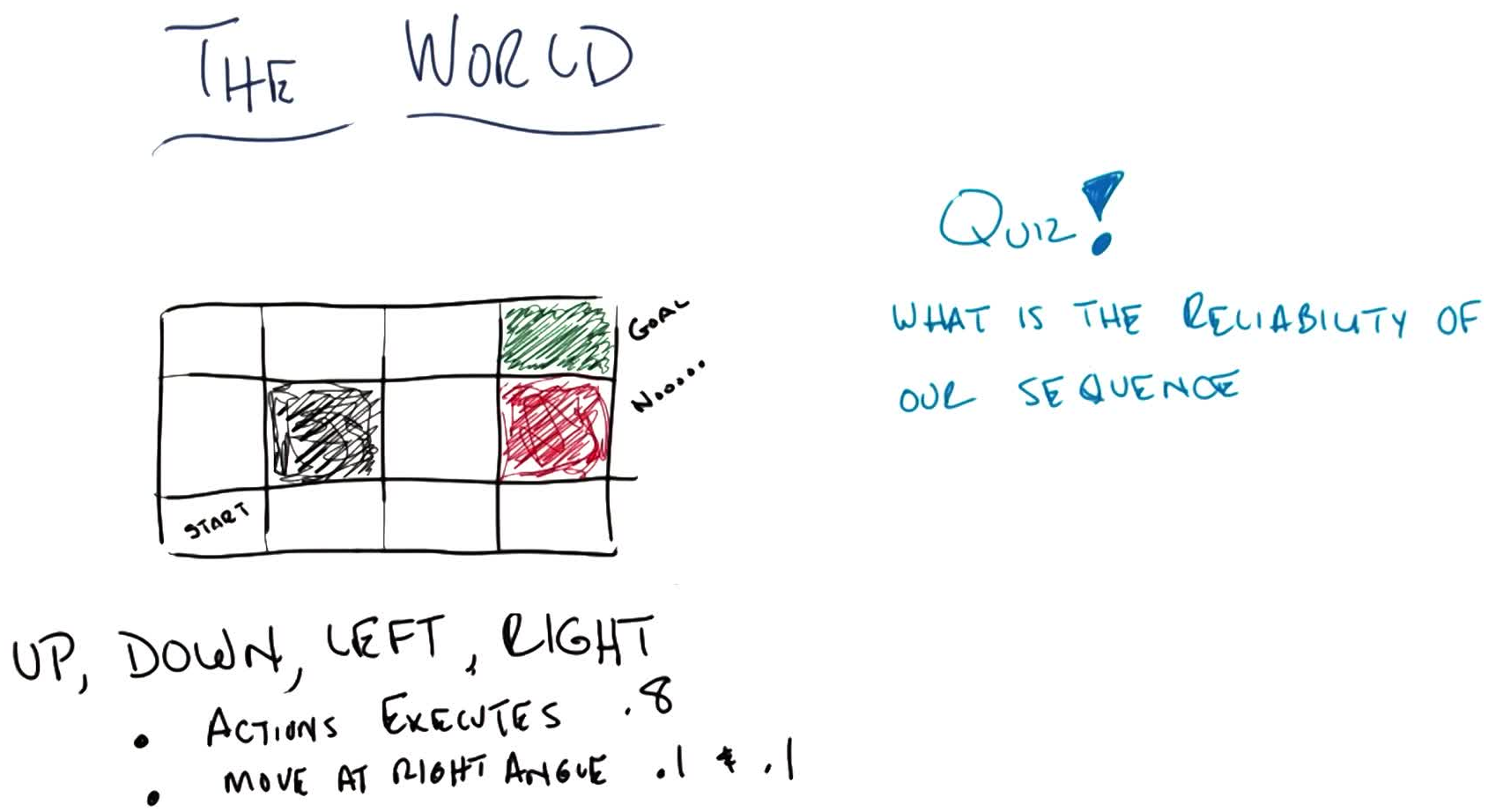

Stochastic case: actions execute correctly with $P = 0.8$, and move at right angles with $P = 0.1$ each.

Quiz 2: What is the reliability of the sequence U, U, R, R, R under stochastic transitions? (Ch. 17, AI: A Modern Approach)

Primary path (all correct): $0.8^5 = 0.32768$

Alternate path (first 4 go “wrong” as right-angles, last correct): $0.1^4 \times 0.8 = 0.00008$

Answer: $0.32768 + 0.00008 = \mathbf{0.32776}$

The alternate path goes underneath the barrier: the 4 “wrong” right-angle moves trace out the other optimal sequence (R, R, U, U, R). By symmetry, whichever 5-step sequence you chose, the reliability is the same (both paths contribute $0.8^5$ for the intended route and $0.1^4 \times 0.8$ for the alternate). A common mistake is forgetting the alternate path and answering $0.32768$.

This motivates why a fixed plan (sequence of actions) is insufficient under uncertainty: we need a policy that tells us what to do in every state.

Markov Decision Processes (MDPs)

Definition (MDP): A Markov Decision Process is defined by the tuple $(S, A, T, R, \mu_0, \gamma, H)$:

- $S^+$: set of all states (including terminal). $S \subset S^+$: non-terminal states. $S_i \subset S^+$: initial states.

- $A$: set of actions (possibly state-dependent: $A(s)$)

- $T(s, a, s’)$: transition model: $P(s’ \mid s, a)$. Must sum to 1: $\sum_{s’} T(s,a,s’) = 1 \; \forall s, a$

- $R(s,a,s’)$: reward function (equivalently $R(s)$ or $R(s,a)$; all mathematically equivalent). Maps transitions to scalars $\in \mathbb{R}$.

- $\mu_0$: initial state distribution (fixed throughout training; draw $s_0 \sim \mu_0$ to begin)

- $\gamma \in [0, 1)$: discount factor

- $H$: horizon (finite or infinite)

Terminal / Absorbing States: A terminal state is special: all actions transition back to itself with $P = 1$ and yield $R = 0$. This is not about the reward for entering the terminal state (which can be $+1$, $-1$, etc.), but about what happens from the terminal state onward: the agent is stuck with zero reward forever. This formal property is what makes the episode “end.”

Two key properties:

Markov Property: the transition function depends only on the current state, not on history: \(P(s' \mid s, a) = P(s' \mid s_0, a_0, s_1, a_1, \ldots, s, a)\)

- Non-Markovian processes can be made Markovian by folding history into the state (at the cost of a larger state space)

Stationarity: the transition and reward functions do not change over time (the “physics” of the world are fixed)

MDP vs POMDP: In an MDP, the agent fully observes the state (observation = state). In a Partially Observable MDP (POMDP), the agent receives observations that may differ from the true state, with an emission function $O(o \mid s)$. The term “observation” and “state” are often used interchangeably in RL literature, which can be confusing. Grid world problems in this course are MDPs.

Frozen Lake Example: A $4 \times 4$ grid where each action (U/D/L/R) has only 33% chance of going in the intended direction, and 33% chance each of the two perpendicular directions. Counter-intuitively, the optimal action next to the goal is often to go away from the goal (e.g. DOWN instead of RIGHT) because: if you fail going right, you might slip into a hole, but if you fail going down, you either reach the goal anyway (33%) or stay safe (33%).

Policies

Definition (Policy): A policy $\pi: S \rightarrow A$ maps each state to an action. It tells the agent what to do in every state, not just a fixed plan from a start state.

- Deterministic policy: $\pi(s) = a$ (always the same action for a given state)

- Stochastic policy: $\pi(a \mid s) = P(A = a \mid S = s)$ (probability distribution over actions)

Most policies in this course are deterministic. However, the environment can still be stochastic regardless of the policy type.

A plan is a fixed sequence of actions (e.g. U, U, R, R, R). A policy is reactive: it tells you what to do given whatever state you find yourself in. This makes policies robust to stochastic transitions.

You can’t write “U, U, R, R, R” as a stationary policy because policies map states to actions, not sequence positions to actions. The same state visited at different points in a plan might need different actions. A policy handles this naturally: wherever you end up (due to stochastic transitions), it always knows what to do next. From a policy you can infer a plan, but a plan cannot capture the full robustness of a policy.

Optimal Policy: $\pi^{*} = \arg\max_\pi \; \mathbb{E}\left[\sum_{t=0}^{\infty} \gamma^t R(s_t) \mid \pi\right]$

Delayed Reward & Temporal Credit Assignment

In RL, the reward signal is often delayed: actions taken now may only show their effect many steps later (e.g. losing a chess game on move 100 due to a mistake on move 3).

The temporal credit assignment problem is: given a sequence of $(s, a, r)$ triples, determine which actions were responsible for the final outcome.

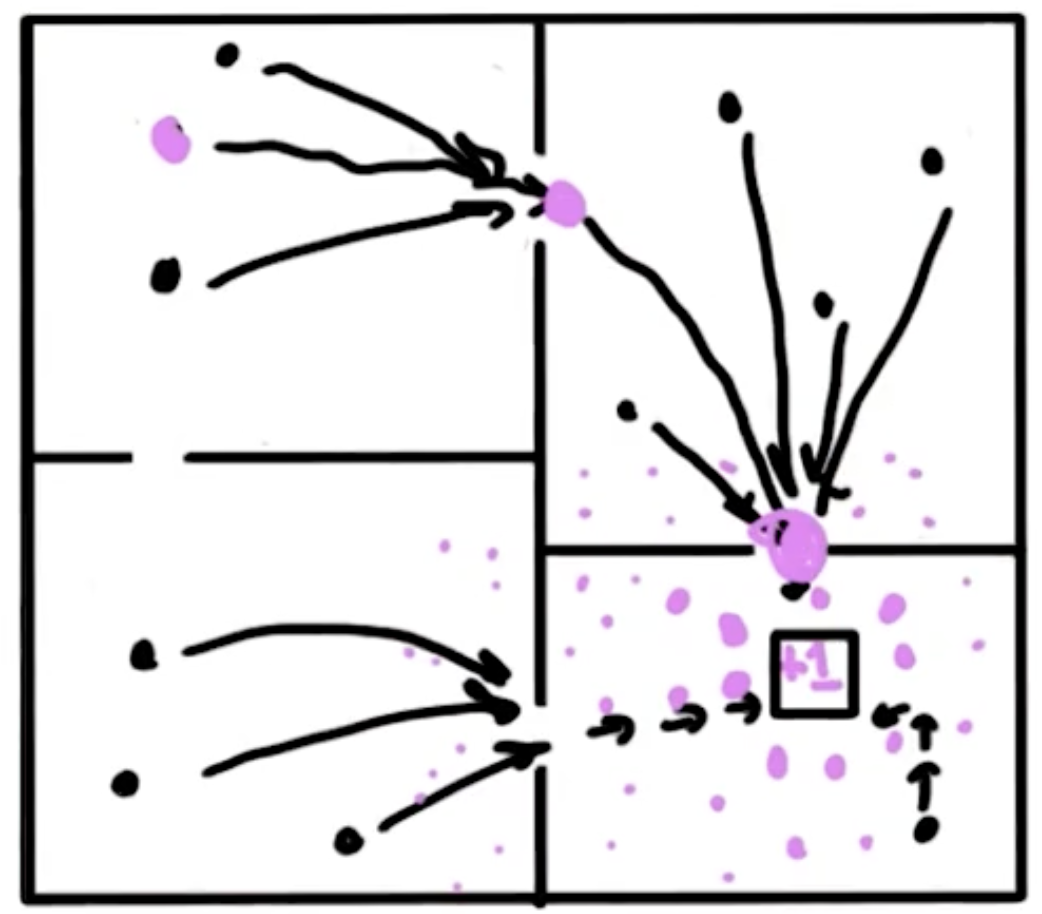

Rewards as Domain Knowledge

The reward function encodes our domain knowledge. Different rewards produce different optimal policies:

| $R(\text{non-absorbing})$ | Optimal Behavior |

|---|---|

| $R = -0.04$ (small negative) | Take safe path to $+1$, avoid $-1$ (slightly warm beach). The state next to the $-1$ trap goes LEFT (long way around) because the $-0.04$ penalty for extra steps is small compared to the 10% chance of slipping into $-1$ if you go UP. |

| $R = +2$ (positive) | Never leave: avoid all absorbing states, accumulate reward forever |

| $R = -2$ (large negative) | End ASAP: even diving into $-1$ is better than accumulating $-2$ per step |

Quiz 3: Given two copies of the grid world with $R = +2$ and $R = -2$ respectively, fill in the optimal action for four highlighted states in each.

Answer ($R = +2$): L, L, L, D: avoid absorbing states at all costs, accumulate positive reward forever. Bash head against wall if needed. For the bottom-right state next to both absorbing states, any direction works: only 3 states can accidentally end the game, and in each you can pick an action that guarantees you don’t slip into an absorbing state.

Answer ($R = -2$): R, R, R, U: end the game ASAP. Even diving into $-1$ is better than staying on the “hot beach.” The bottom-left state goes RIGHT (not UP) because going right either reaches the bottom-right corner, stays put, or goes up: you never get farther from an exit. Going up risks slipping left and getting farther away. For $R < -1.6284$, this “end immediately” policy is optimal.

Rewards must be chosen carefully to induce the desired behavior. They are the teaching signal in RL.

Infinite Horizons & Stationarity of Preferences

Under finite horizons, the optimal policy becomes non-stationary: $\pi(s, t)$ depends on both state and remaining time steps. Under infinite horizons, the policy is stationary: $\pi(s)$ depends only on the current state.

Example: In the grid world with $R = -0.04$, the state near the trap normally goes LEFT (long way around). But if you only have 3 timesteps left, you’d go UP instead: the long way can’t possibly reach $+1$ in time, so taking the risky short path is your only shot at positive reward. Same state, different action, purely because of remaining time. Even within a single run, repeated failed attempts at the same action might cause a switch: not because the action is wrong, but because you’re running out of time.

Episodes, Trajectories & Task Types

- Timestep: a global clock discretizing time (not the same as a value iteration “iteration”)

- Episodic task: finite number of timesteps; agent starts in $s_0 \sim \mu_0$, interacts until reaching a terminal state

- Continuing task: no natural end; the task goes on forever (e.g. locomotion, continuous control). Often an artificial terminal state is imposed after a fixed number of timesteps.

Truncation vs Termination: When an environment ends because a terminal state was reached, that’s termination (natural). When it ends because a time limit was hit, that’s truncation (artificial). Truncation requires special handling in value function calculations because the agent didn’t truly “finish”: the future value from the truncated state is not zero.

- Trajectory: the complete sequence of interactions from initial to terminal state in a single episode:

The Return $G_t$

The return $G_t$ is the total discounted reward from timestep $t$ onward:

\[G_t = R_{t+1} + \gamma R_{t+2} + \gamma^2 R_{t+3} + \cdots = \sum_{k=0}^{\infty} \gamma^k R_{t+k+1}\]This can be written recursively:

\[G_t = R_{t+1} + \gamma \cdot G_{t+1}\]This recursive form is the direct bridge to the Bellman equation: $V(s) = \mathbb{E}[G_t \mid S_t = s]$.

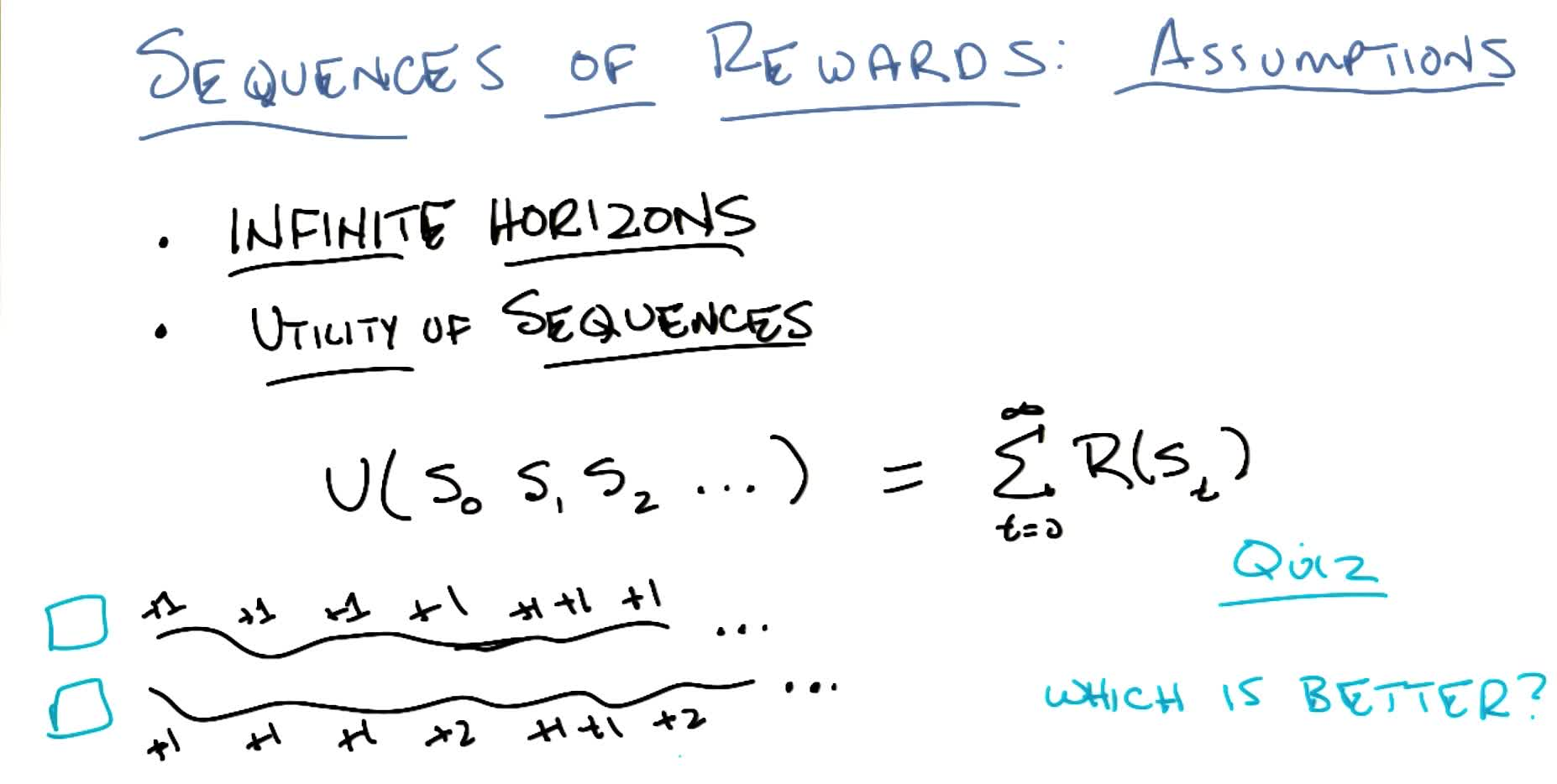

Stationarity of preferences: if $U(s_0, s_1, s_2, \ldots) > U(s_0, s_1’, s_2’, \ldots)$, then $U(s_1, s_2, \ldots) > U(s_1’, s_2’, \ldots)$. This forces utilities to be additive over rewards.

Discounted Rewards

Summing undiscounted rewards over an infinite horizon yields $\infty$ for any policy with positive rewards, making comparison impossible. The fix: discounting.

\[U(s_0, s_1, s_2, \ldots) = \sum_{t=0}^{\infty} \gamma^t R(s_t), \quad \gamma \in [0, 1)\]Quiz 4: Which infinite reward sequence is better: $(+1, +1, +1, \ldots)$ or $(+1, +1, +1, +2, +1, +1, +2, \ldots)$?

Answer: Both (neither is better). Both sum to $\infty$. This is the “existential dilemma of being immortal”: if you live forever, $\infty = \infty$ regardless of path. This motivates discounting.

Geometric series bound:

\[\sum_{t=0}^{\infty} \gamma^t R(s_t) \leq \sum_{t=0}^{\infty} \gamma^t R_{\max} = \frac{R_{\max}}{1 - \gamma}\]Proof sketch: Let $x = 1 + \gamma + \gamma^2 + \cdots$. Then $x = 1 + \gamma x$, so $x(1 - \gamma) = 1$, giving $x = \frac{1}{1-\gamma}$.

Intuition: $\gamma < 1$ creates an effective “soft horizon” that is always the same finite distance away, no matter how many steps you’ve taken. This preserves stationarity while keeping utilities finite.

- $\gamma \to 0$: only care about immediate reward

- $\gamma \to 1$: care about far future (approaches undiscounted case)

The Bellman Equation

Bellman Equation (Value Form):

\[V(s) = R(s) + \gamma \max_a \sum_{s'} T(s, a, s') \cdot V(s')\]The true value of a state is the immediate reward plus the discounted expected value of the best reachable next state.

This is a recursive equation: the value of every state depends on the values of neighboring states. It encodes the entire MDP: rewards, transitions, actions, and discount factor.

Key distinction — Reward vs Return vs Value:

- $R(s)$ or $R(s,a)$: reward — immediate scalar feedback for a single transition

- $G_t = \sum_{k=0}^{\infty} \gamma^k R_{t+k+1}$: return — discounted sum of rewards from one trajectory starting at time $t$

- $V(s) = \mathbb{E}[G_t \mid S_t = s]$: value — expected return over all possible trajectories from state $s$

The agent maximizes value (not reward, not return): it accounts for both the long-term sum and the stochasticity of outcomes.

Analogy: Poking the university president earns you \$ 1 immediately ($R = +1$), but the long-term utility is catastrophic ($V \ll 0$). Conversely, enrolling in a master’s degree has negative immediate reward ($R = -\$6600$), but high long-term utility ($V \gg 0$). Utility accounts for all delayed rewards, which is how it connects back to the credit assignment problem.

Once we know $V(s)$ for all states, the optimal policy follows immediately:

\[\pi^{\*}(s) = \arg\max_a \sum_{s'} T(s, a, s') \cdot V(s')\]Value Iteration

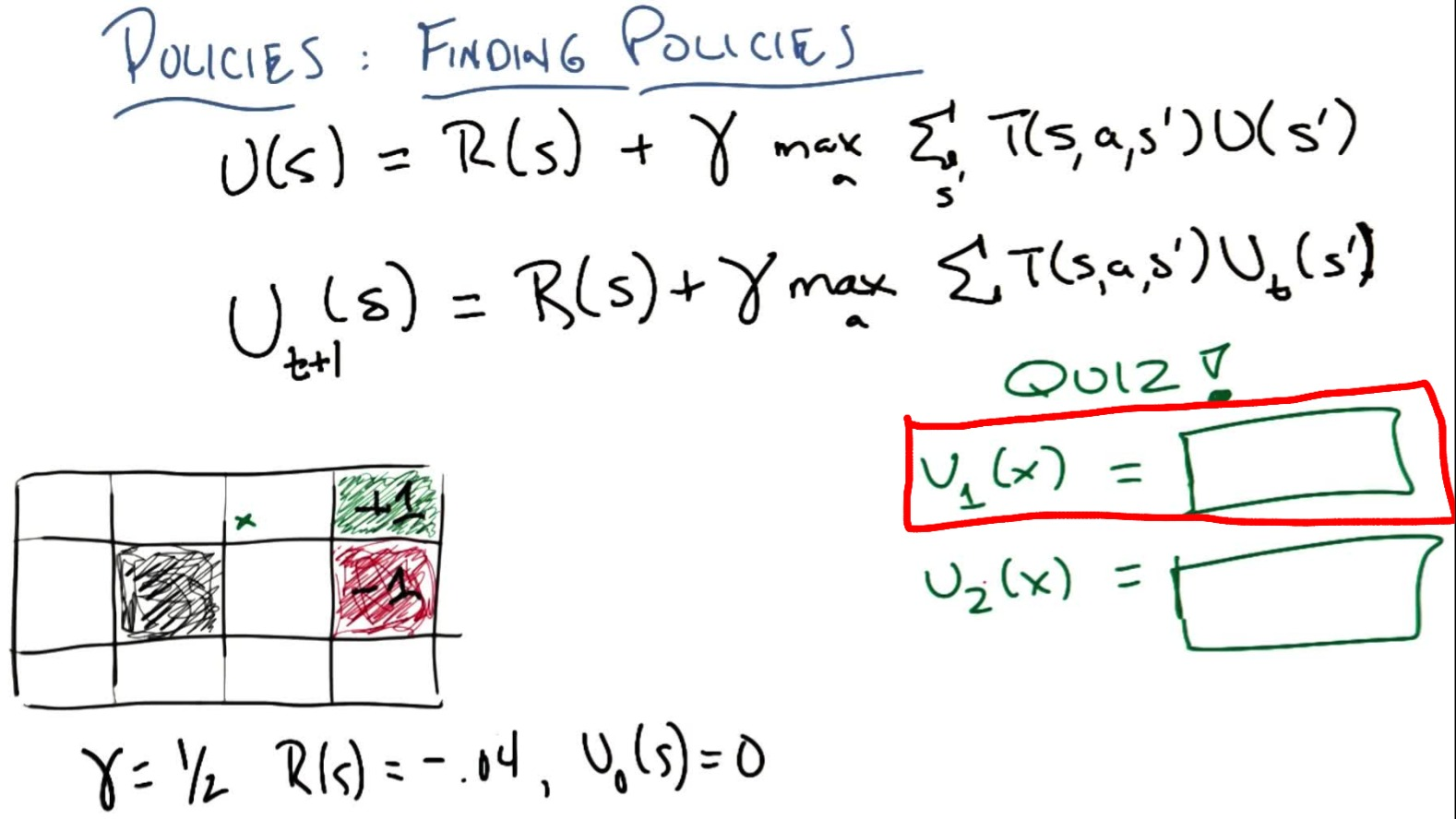

The Bellman equation gives $n$ equations in $n$ unknowns (one per state), but the $\max$ makes them non-linear: we can’t solve via matrix inversion. Instead, we iterate:

Value Iteration Algorithm:

\[\hat{V}_{t+1}(s) = R(s) + \gamma \max_a \sum_{s'} T(s, a, s') \cdot \hat{V}_t(s')\]

- Initialize $\hat{V}_0(s) = 0$ for all non-absorbing states

- Repeat until convergence:

- Extract policy: $\pi(s) = \arg\max_a \sum_{s’} T(s, a, s’) \cdot V(s’)$

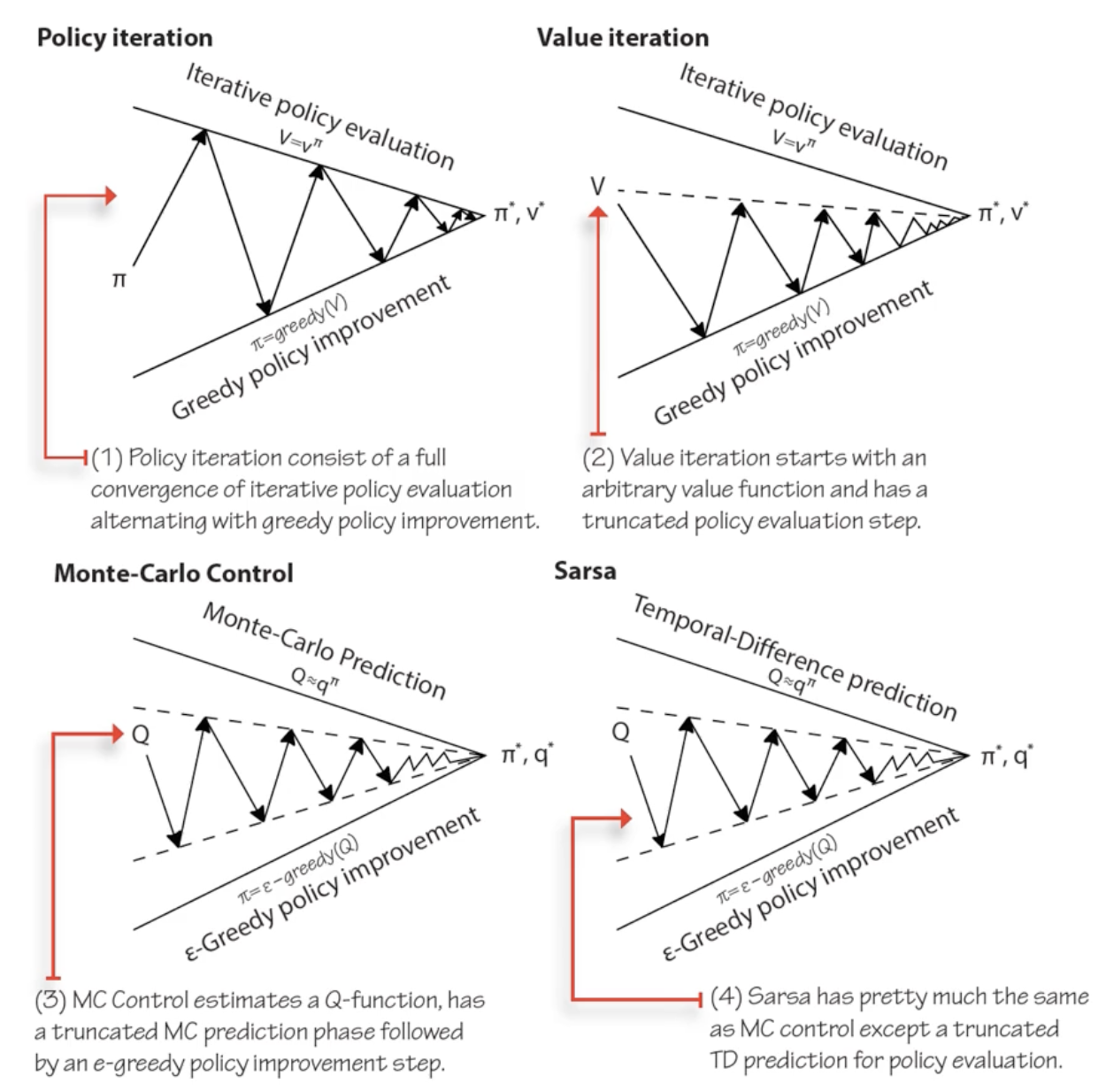

Value iteration can be understood as truncated policy iteration: it performs the evaluation and improvement steps in a single iteration (the $\max$ inside the update does both at once). In contrast, full policy iteration runs evaluation to convergence before improving. Modified policy iteration is the middle ground: run evaluation for $k$ iterations (not to convergence) before improving.

Why it converges: at each iteration, true reward $R(s)$ is injected and propagated through neighbors. The discount factor $\gamma < 1$ contracts the influence of the (initially wrong) utility estimates, so truth gradually overwhelms noise. This is a contraction mapping argument.

Quiz 5: Given $\gamma = 0.5$, $R = -0.04$ everywhere (except absorbing states), and initial utilities $\hat{V}_0 = 0$ (except $V(+1) = 1$, $V(-1) = -1$), compute $\hat{V}_1(x)$ and $\hat{V}_2(x)$ for the state marked $x$ (one cell left of the $+1$ goal).

$\hat{V}_1(x)$: Best action is Right (0.8 chance of reaching $+1$). Other neighbors are 0.

\[\hat{V}_1(x) = -0.04 + 0.5 \times (0.8 \times 1 + 0.1 \times 0 + 0.1 \times 0) = -0.04 + 0.4 = \mathbf{0.36}\]$\hat{V}_2(x)$: Still go Right. State below $x$ now has $\hat{V}_1 = -0.04$ (from wall-bashing). State $x$ itself has $\hat{V}_1 = 0.36$.

\[\hat{V}_2(x) = -0.04 + 0.5 \times (0.1 \times 0.36 + 0.1 \times (-0.04) + 0.8 \times 1) = -0.04 + 0.5 \times 0.832 = \mathbf{0.376}\]Important observation: The state below $x$ initially “bashes head against wall” (goes LEFT): at $t=0$ all utilities are 0, so the best strategy is to avoid $-1$ at all costs. But as $x$’s utility grows ($0.36 \to 0.376 \to \ldots$), eventually going UP from below $x$ becomes worthwhile. This illustrates how value iteration initially computes wrong local policies that self-correct as true values propagate outward from reward sources.

Values vs policies: We don’t actually need exact utilities to get the right policy. If the ordering of actions is correct (even with wrong absolute values), the policy is already optimal. This is analogous to classification vs regression: $\pi$ is a classifier (state → discrete action), while $V$ is regression (state → continuous value). Given $V$ we can find $\pi$, but many different $V$’s yield the same $\pi$.

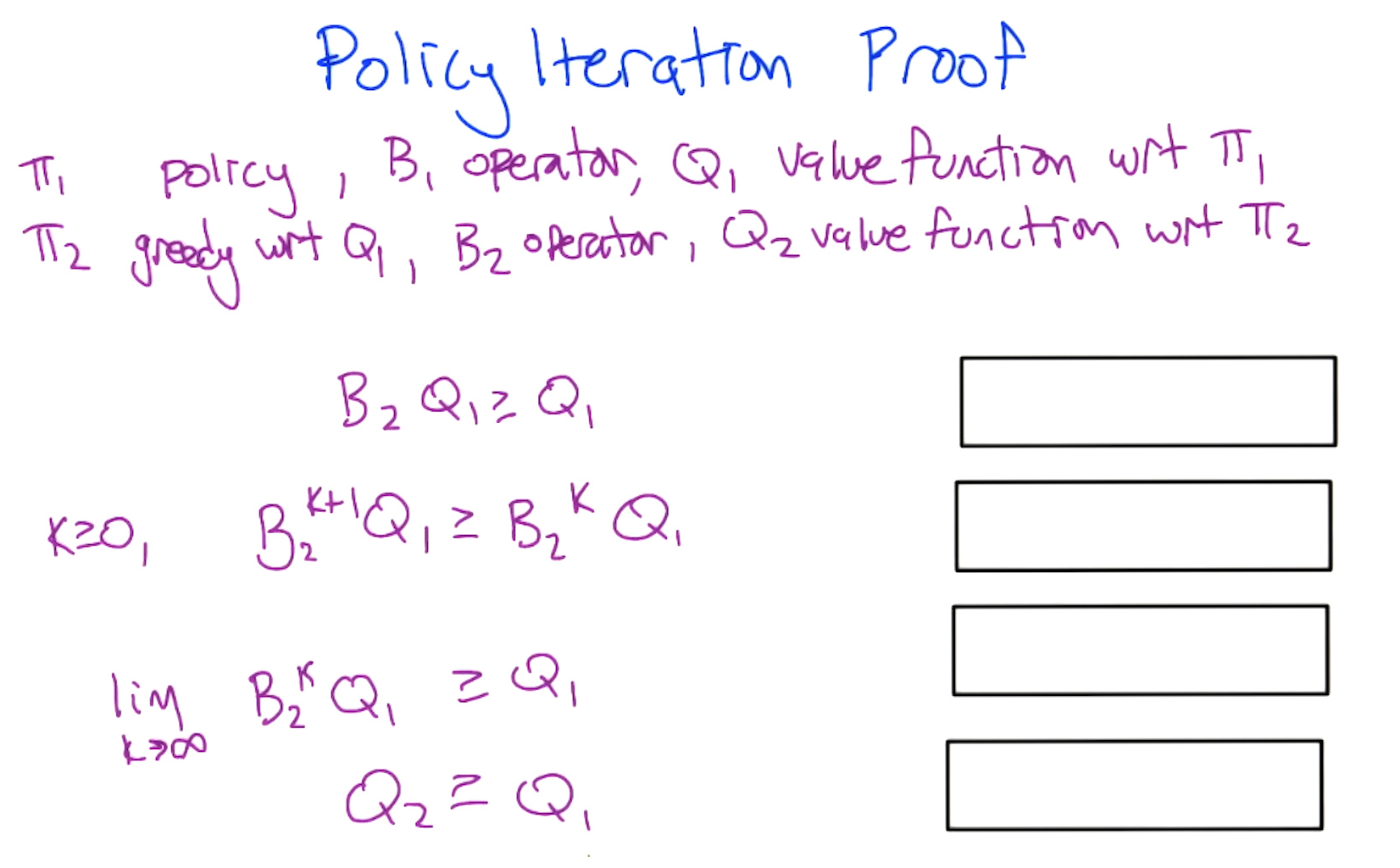

Policy Iteration

Since we care about policies (discrete mappings), not exact values (continuous), we can exploit this:

Policy Iteration Algorithm:

\[V^{\pi}(s) = R(s) + \gamma \sum_{s'} T(s, \pi(s), s') \cdot V^{\pi}(s')\]

- Start with arbitrary policy $\pi_0$

- Evaluate: solve for $V^{\pi_t}(s)$ (this is $n$ linear equations in $n$ unknowns, since $\pi$ fixes the action):

- Improve: $\pi_{t+1}(s) = \arg\max_a \sum_{s’} T(s, a, s’) \cdot V^{\pi_t}(s’)$

- Repeat until $\pi$ converges

Why it’s different from value iteration: by fixing the policy, the $\max$ disappears and the equations become linear: solvable via matrix inversion ($O(n^3)$). Policy iteration makes bigger jumps in policy space rather than small incremental updates in value space, often converging in fewer iterations.

Convergence: there are finitely many policies; each step improves or maintains the policy, so it must converge. Specifically, policy iteration converges when the improvement step produces no change in the policy: $\pi_{t+1} = \pi_t$. At that point, $\pi_t$ is optimal.

Policy Evaluation (the “evaluate” step) can also be run standalone to estimate $V^\pi$ for any given policy. This is the prediction problem: given a policy, what is its value? The control problem is finding the optimal policy. Policy iteration solves control by alternating prediction (evaluation) and improvement. These two problems (prediction and control) recur throughout RL: TD learning solves prediction, Q-learning solves control.

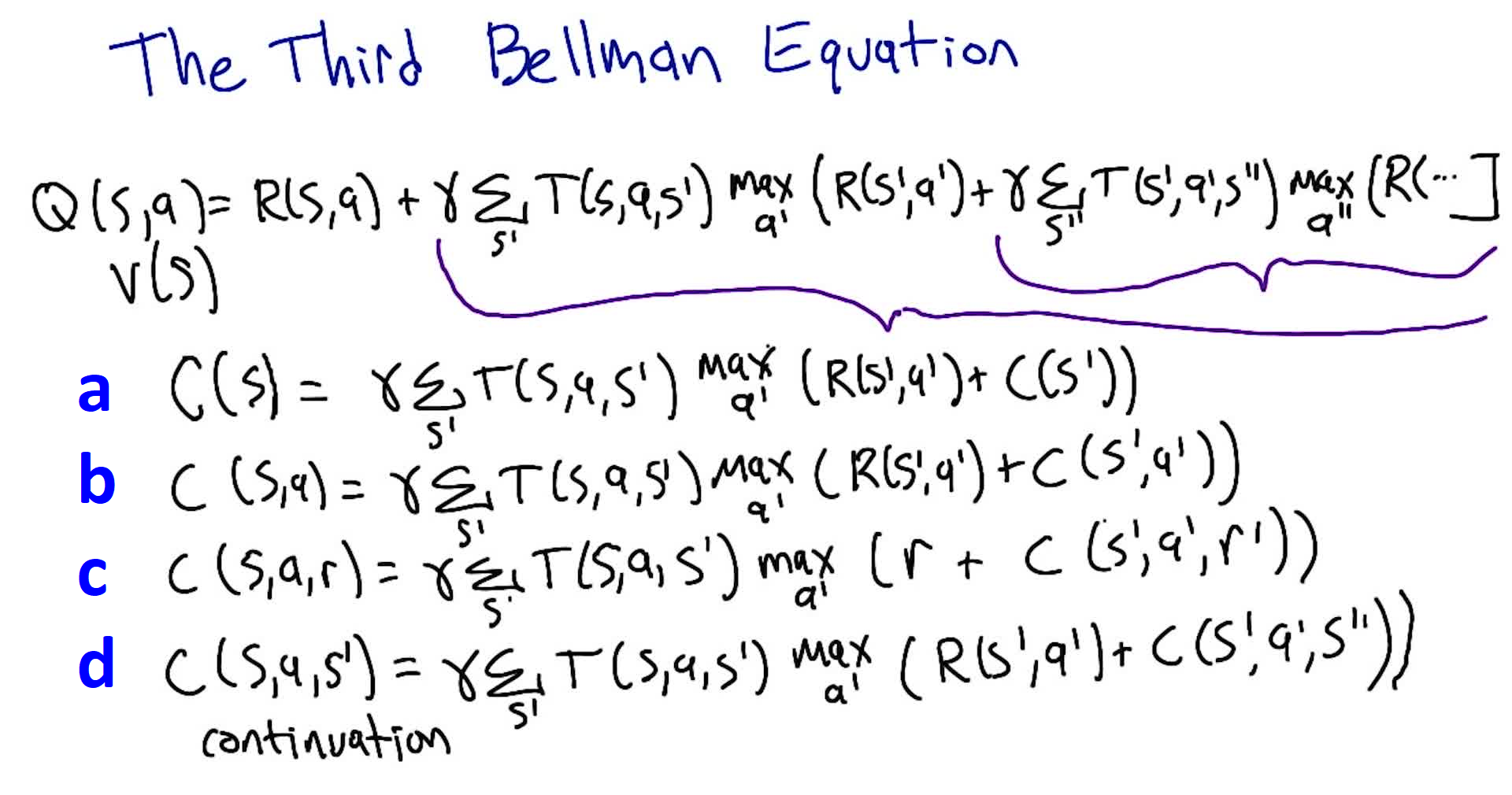

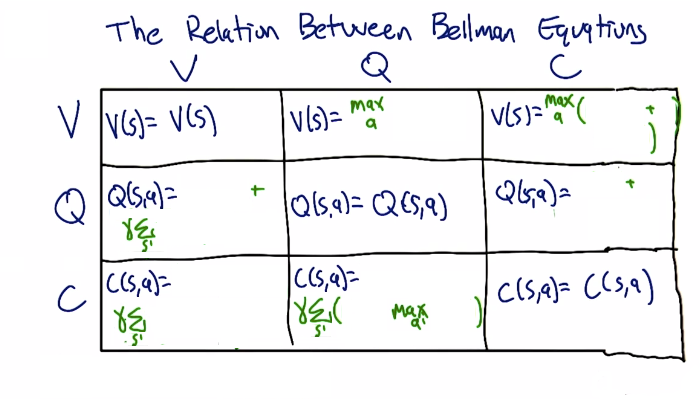

Bellman Equations: V, Q, and C

Notation change: from this point forward, we use $V$ (value) instead of $U$ (utility), and $R(s,a)$ instead of $R(s)$. The history of an agent is the infinite sequence: $s_1, a_1, r_1, s_2, a_2, r_2, \ldots$ (SAR, SAR, SAR…). All prior results still hold; this is purely notational.

The infinite SAR sequence can be “cut” at different points, yielding three Bellman equations:

V: Value Function (starts at state)

\[V(s) = \max_a \left[ R(s,a) + \gamma \sum_{s'} T(s,a,s') \cdot V(s') \right]\]Q: Quality Function (starts at state-action)

\[Q(s,a) = R(s,a) + \gamma \sum_{s'} T(s,a,s') \cdot \max_{a'} Q(s', a')\]C: Continuation Function (starts after reward)

\[C(s,a) = \gamma \sum_{s'} T(s,a,s') \cdot \max_{a'} \left[ R(s', a') + C(s', a') \right]\]A: Advantage Function

\[A(s,a) = Q(s,a) - V(s)\]The advantage tells you how much better (or worse) action $a$ is compared to the policy’s default action in state $s$. If $A(s,a) > 0$, action $a$ is better than what $\pi$ currently prescribes. Used heavily in policy gradient methods (e.g. A2C, PPO) to provide a signal for policy improvement.

Quiz 6: Derive the equation for C (continuation). (Multiple choice)

Answer: $C(s,a) = \gamma \sum_{s’} T(s,a,s’) \max_{a’} [R(s’,a’) + C(s’,a’)]$ (choice b). The continuation starts after the reward, so it needs both $s$ and $a$ as arguments (not just $s$), and the recursive call must compute the next reward, not reuse the current one.

Relationships:

Quiz 7: Fill in the V/Q/C relationship table: express each function in terms of the other two.

In terms of V In terms of Q In terms of C V — $\max_a Q(s,a)$ $\max_a [R(s,a) + C(s,a)]$ Q $R(s,a) + \gamma \sum_{s’} T(s,a,s’) V(s’)$ — $R(s,a) + C(s,a)$ C $\gamma \sum_{s’} T(s,a,s’) V(s’)$ $\gamma \sum_{s’} T(s,a,s’) \max_{a’} Q(s’,a’)$ — Key insight: Q encapsulates everything needed to derive V and the policy without knowing $T$ or $R$. C requires $R$ to recover Q or V. V requires both $T$ and $R$ to recover Q.

Reading down the columns (what extra knowledge does each function need?):

- If you have C: need $R$ to get V or Q

- If you have V: need $T$ to get C, need both $R$ and $T$ to get Q

- If you have Q: need nothing extra to get V ($\max_a Q$), need only $T$ to get C

This is why C is useful when the reward function is hard to represent (Dietterich), and Q is powerful because it gives answers to everything without knowing the model.

Why Q matters for RL: To go from $V$ to a policy, you need $T$ and $R$ (the model). But $Q$ directly encodes the value of each action: $\pi^{*}(s) = \arg\max_a Q(s,a)$, requiring no knowledge of transitions or rewards. This makes Q the natural choice for model-free reinforcement learning.

2. Reinforcement Learning Basics

Agent-Environment Interaction

In RL, the computation happens inside the agent’s head. Unlike planning (where the MDP is given as a graph and we compute on it), in RL the agent doesn’t know the environment: it only experiences it through interaction.

The interaction loop:

- Environment reveals state $s$ to agent

- Agent selects action $a$

- Environment transitions, returns next state $s’$ and reward $r$

- Repeat

The agent and the policy are the same thing conceptually. The environment plays the role of the MDP. But crucially, the MDP is not living inside the agent’s head: the agent must learn about it through experience.

The Taxi Problem (Interactive Demo)

The lecture demonstrates RL from the agent’s perspective using a grid world where:

- The agent controls an orange square (taxi) via 6 actions (4 directional + pickup + dropoff)

- A colored circle (passenger) must be picked up and delivered to the matching colored square

- The agent starts knowing nothing: not the rules, not the goal, not even what actions do

Key observations from the interactive demo:

- Humans bring massive background knowledge: assuming walls are impassable, navigation is consistent across states, actions are deterministic

- RL algorithms have none of these assumptions and may literally bash their head against walls repeatedly

- The agent had to first explore to learn what actions do (transition function), then figure out what gives reward (reward function), then exploit that knowledge

- Even an experienced human found it “incredibly frustrating” not knowing the rules, generating empathy for RL agents

Takeaway: RL is fundamentally harder than planning/solving MDPs because the agent doesn’t know $T$ or $R$. It must discover them through trial and error, which requires balancing exploration (learning the environment) and exploitation (using what you’ve learned).

Types of Behavior (After Learning)

| Type | Description | Handles Stochasticity? |

|---|---|---|

| Plan | Fixed sequence of actions (e.g. L, R, U, U, pickup) | No: breaks if any action has unintended effect |

| Conditional Plan | Sequence with if-statements at branch points | Partially: handles anticipated branches |

| Stationary Policy | Mapping $\pi: S \rightarrow A$ for every state | Yes: robust to any stochasticity |

- A plan works in deterministic environments but fails under stochasticity

- A conditional plan is like a program with if-else branches at certain states

- A stationary policy (also called universal plan) handles everything but is very large: it must specify an action for every possible state

There always exists an optimal stationary policy for any MDP. You never need to look beyond stationary policies to find optimal behavior. This is why RL focuses almost entirely on learning stationary policies.

Trade-off: stationary policies are powerful (handle all stochasticity, always include optimal) but large (must specify action for every state, which means the learner must discover what to do everywhere).

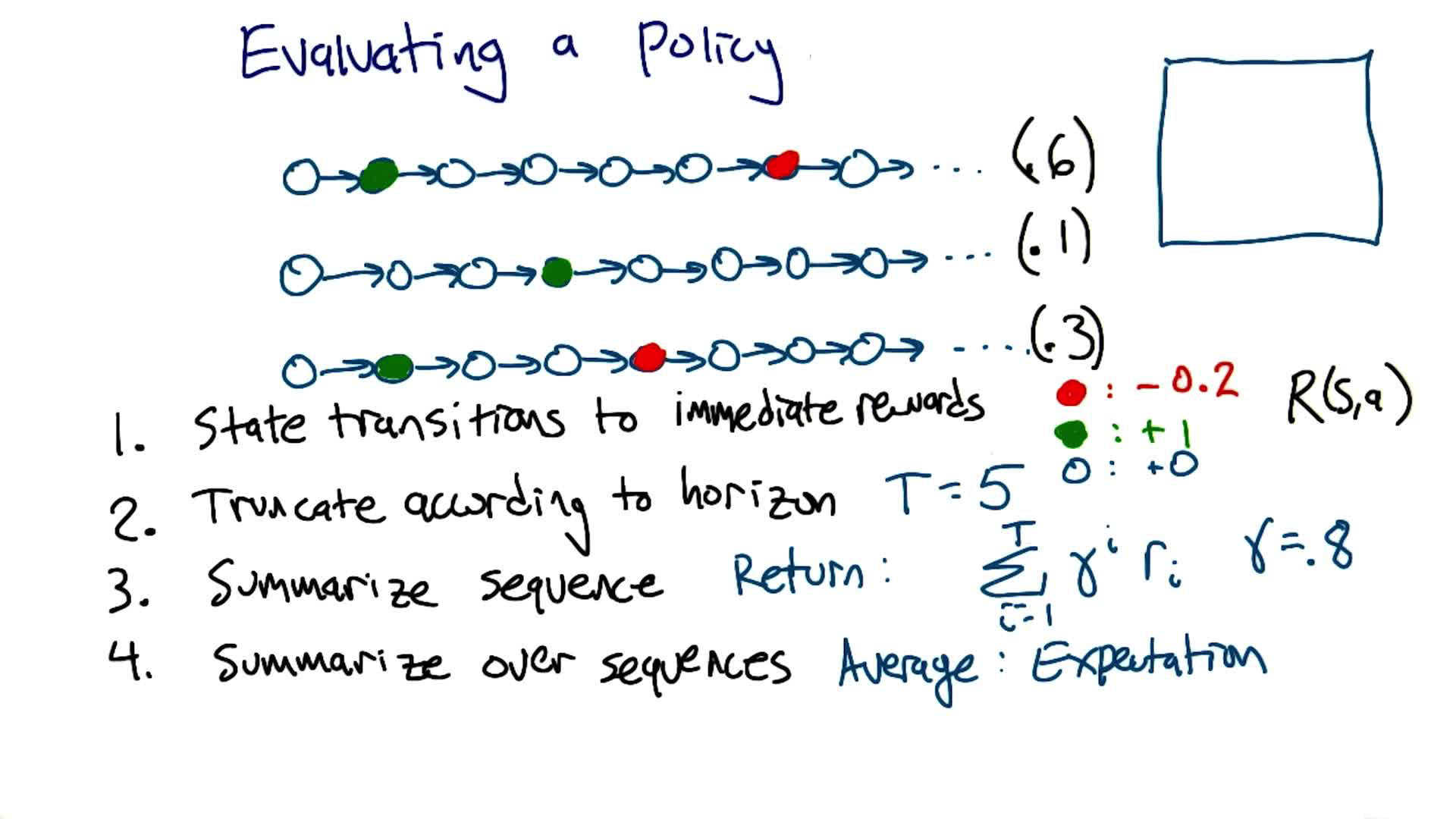

Evaluating a Policy

Given a policy $\pi$, how do we assign it a single number (its “value”)? Four steps:

- State transitions → immediate rewards: use $R(s,a,s’)$ to convert each transition to a number

- Truncate according to horizon: if finite horizon $T$, cut the sequence after $T$ steps

- Summarize each sequence → return: compute the discounted sum $G = \sum_{i=0}^{T-1} \gamma^i r_i$

- Summarize over sequences → expectation: weight each return by the probability of that trajectory occurring

Quiz: Given $\gamma = 0.8$, $T = 5$, $R(\text{green}) = +1$, $R(\text{red}) = -0.2$, $R(\text{white}) = 0$, and three trajectories with probabilities $0.6$, $0.1$, $0.3$, what is the value of this policy?

Note: here green/red circles are generic colored states with the given rewards, not the absorbing goal/trap states from the grid world. The agent passes through them and continues.

- Trajectory 1 ($P = 0.6$): green at step 2 ($t=1$) → $G_1 = \gamma^1 \times 1 = 0.8$

- Trajectory 2 ($P = 0.1$): green at step 4 ($t=3$) → $G_2 = \gamma^3 \times 1 = 0.512$

- Trajectory 3 ($P = 0.3$): green at step 2 ($t=1$), red at step 5 ($t=4$) → $G_3 = \gamma^1 \times 1 + \gamma^4 \times (-0.2) = 0.8 - 0.08192 = 0.71808$

Answer: $\mathbb{E}[G] = 0.6 \times 0.8 + 0.1 \times 0.512 + 0.3 \times 0.71808 \approx \mathbf{0.75}$

Evaluating Learners

Two learners that both find the optimal policy can still differ. We evaluate RL algorithms along two axes:

| Metric | Description |

|---|---|

| Computational complexity | Wall-clock time / compute required to learn |

| Sample complexity | Amount of experience (interactions with environment) needed to learn a good policy |

A learner that finds $\pi^{*}$ in 10 episodes is better (in sample complexity) than one that needs 1 million episodes, even if the first one uses more computation per episode. In practice, there are trade-offs: lower sample complexity often requires more computation (e.g. model-based methods build an internal model which is expensive to maintain but reduces needed interactions).

- Computational complexity: how much processing between interactions

- Sample complexity: how many interactions with the environment are needed

- Space complexity: generally not the bottleneck in RL; computational and sample complexity dominate

3. TD & Friends

Note: This section includes additional content from Supplementary Lecture on Prediction & Control

We now move from planning (solving a known MDP) to learning (figuring out the right thing to do from experience alone). Temporal Difference (TD) methods are the core algorithms for learning value functions without a model, and they form the backbone of modern RL.

Three Families of RL Algorithms

| Family | How it learns | Intermediate representations | Learning directness |

|---|---|---|---|

| Model-based | Learns $T$ and $R$ from experience, then solves the MDP | $\text{SARS} \rightarrow T, R \rightarrow Q^{*} \rightarrow \pi$ | Most supervised (predicting next states/rewards) |

| Value-function-based (Model-free) | Directly learns $Q$ from experience, without building a model | $\text{SARS} \rightarrow Q \rightarrow \pi$ | Middle ground |

| Policy search | Directly modifies $\pi$ from experience | $\text{SARS} \rightarrow \pi$ | Most direct, but least useful feedback |

Model-based methods have the most supervised learning signal but the most intermediate computation. Policy search is the most direct but gets very little useful feedback for how to improve. Value-function-based methods (where TD learning lives) strike a balance. This course focuses primarily on family 2.

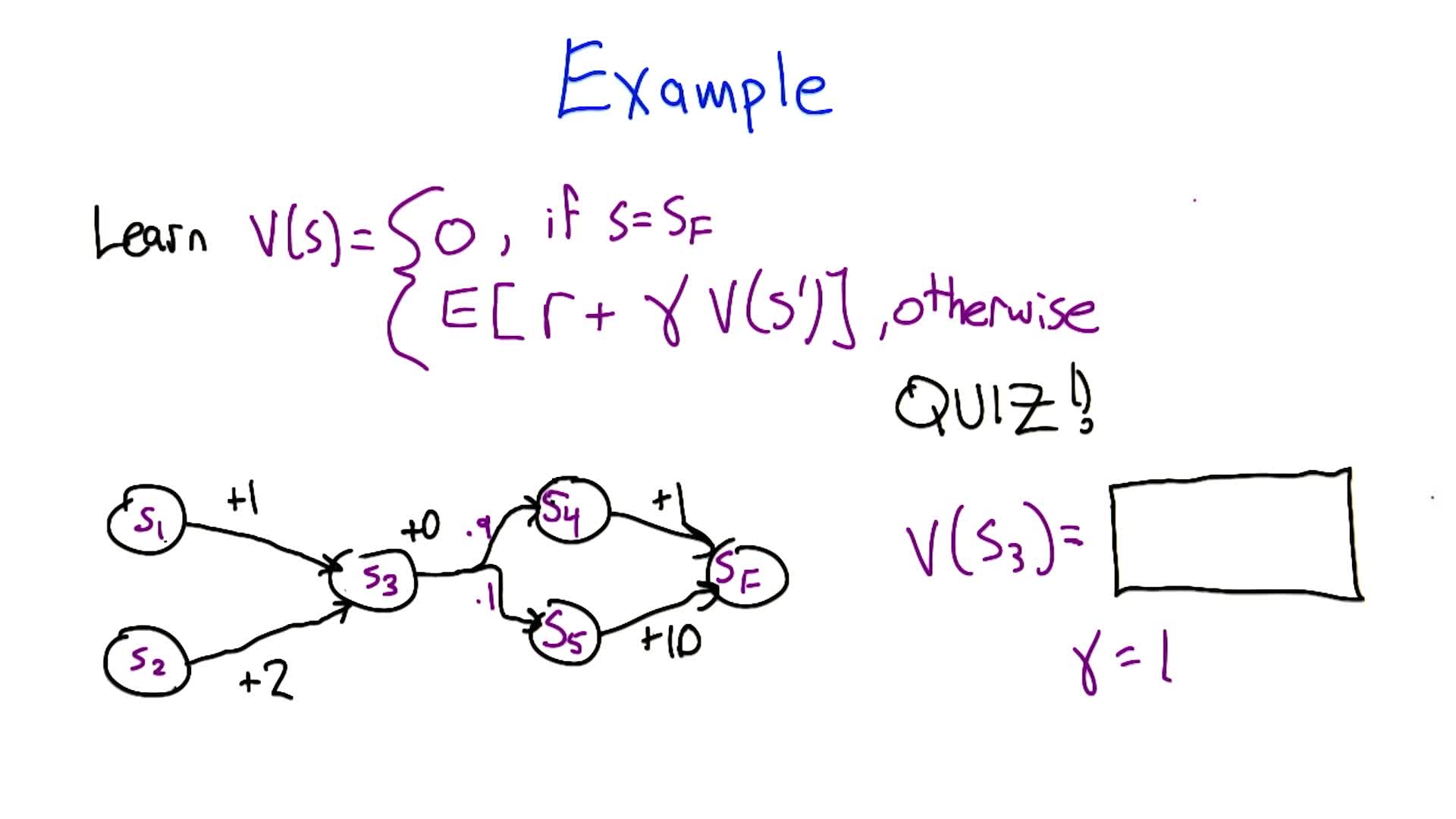

TD Learning: Estimating Values from Experience

TD learning predicts the expected sum of discounted rewards from a sequence of states. It’s a subroutine for RL: predict future rewards to better choose actions.

Computing V from a Markov Chain

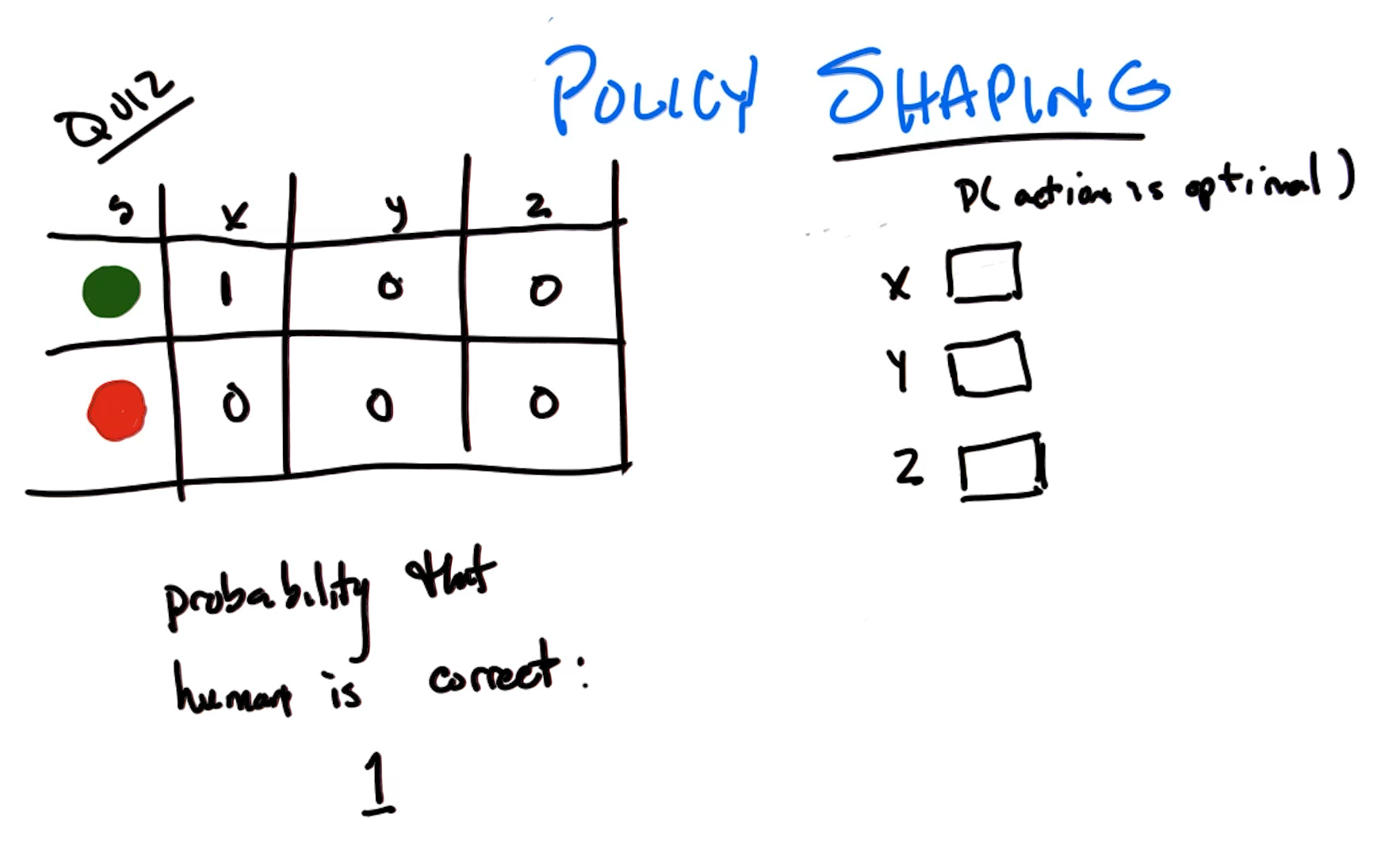

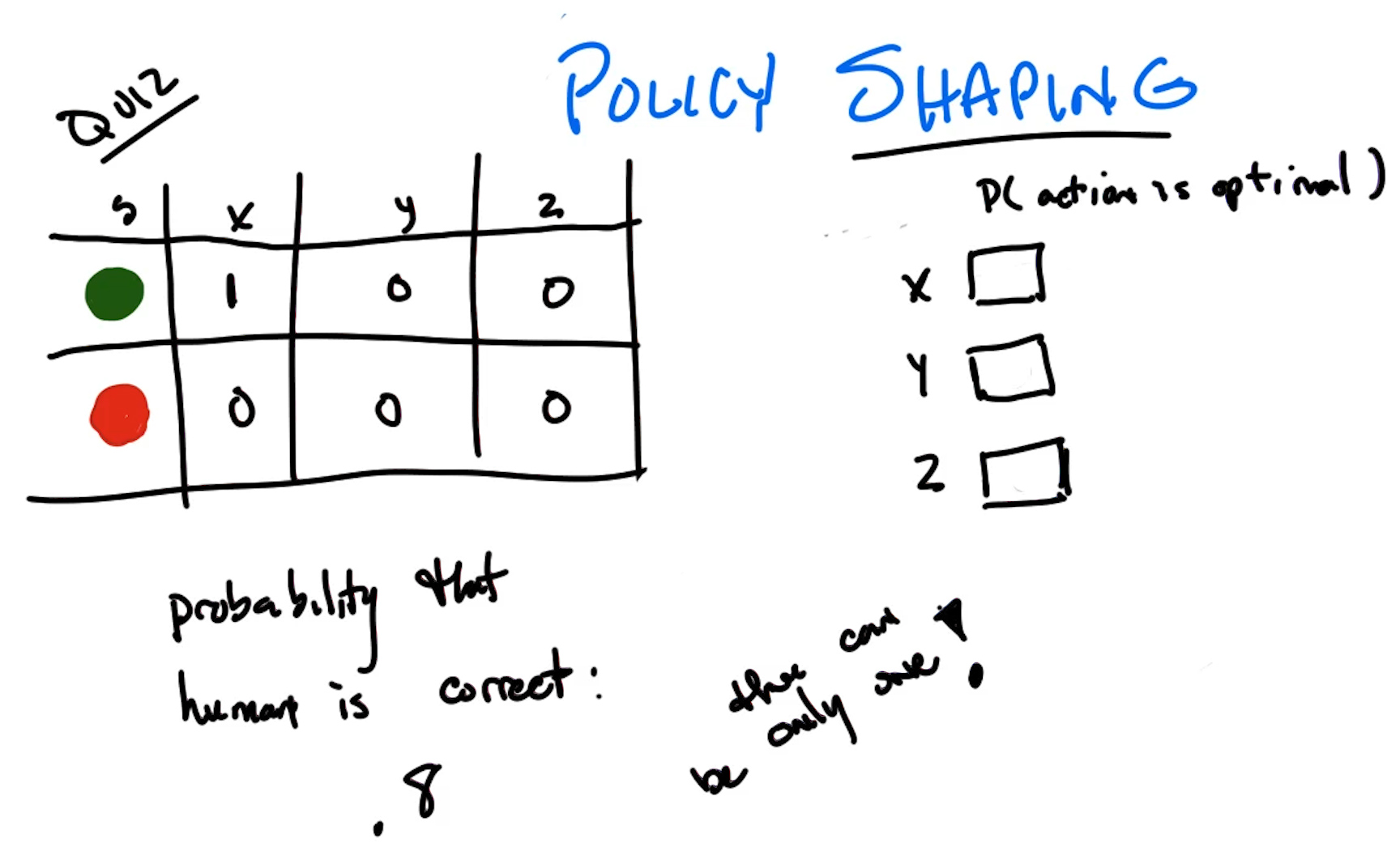

Quiz 1: Given this Markov chain with $\gamma = 1$, what is $V(S_3)$?

Work backwards from $V(S_F) = 0$:

- $V(S_4) = 1 + \gamma \cdot 0 = 1$

- $V(S_5) = 10 + \gamma \cdot 0 = 10$

- $V(S_3) = 0 + \gamma \cdot (0.9 \times 1 + 0.1 \times 10) = 0.9 + 1.0 = \mathbf{1.9}$

- $V(S_1) = 1 + 1.9 = 2.9$, $V(S_2) = 2 + 1.9 = 3.9$

Estimating Values from Data (Outcome-Based)

Instead of knowing the model, we observe episodes and estimate values by averaging returns:

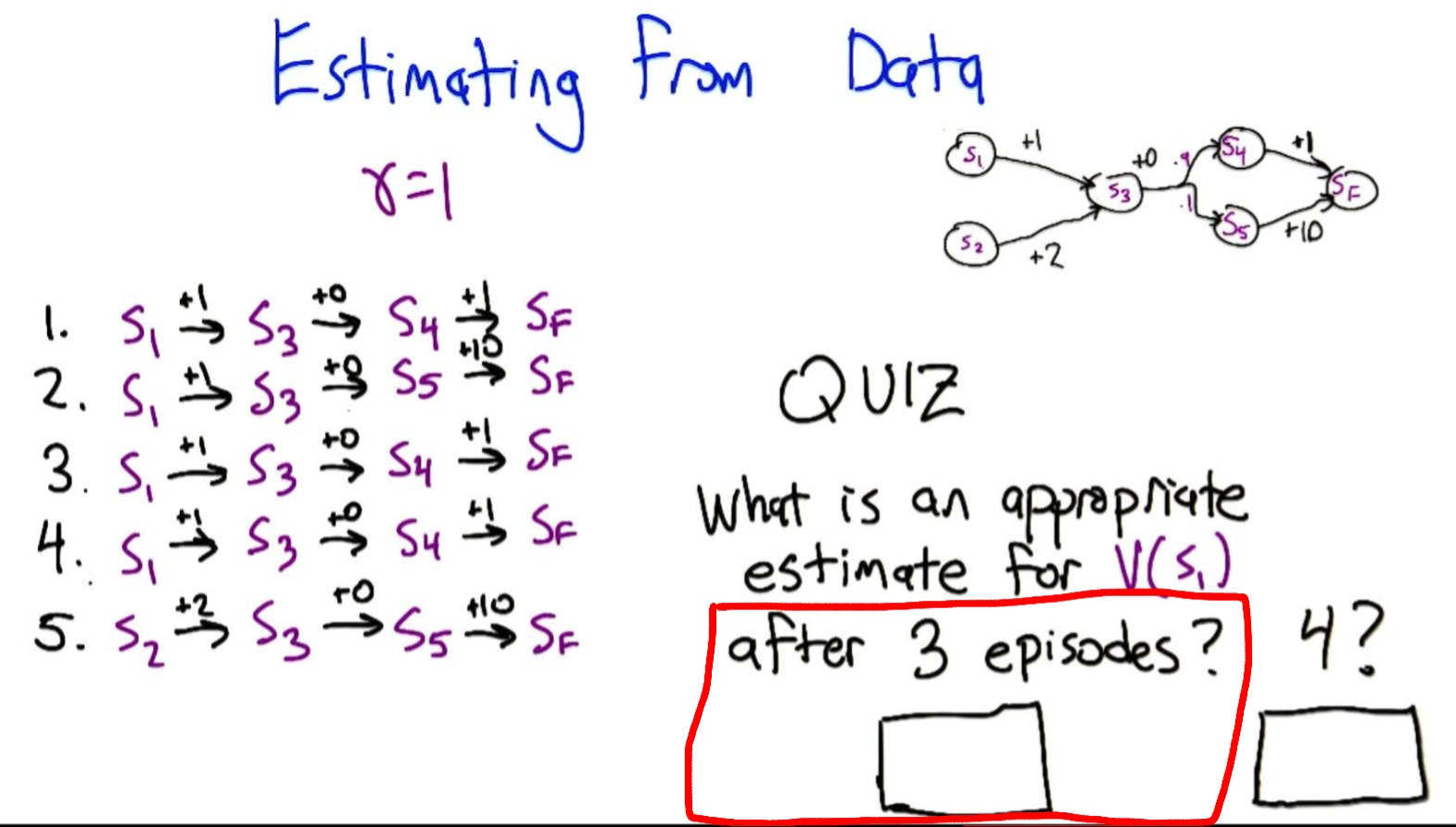

Quiz 2: Given episodes from $S_1$, estimate $V(S_1)$ after 3 and 4 episodes ($\gamma = 1$):

- Episode 1: $S_1 \to S_3 \to S_4 \to S_F$, return = $1 + 0 + 1 = 2$

- Episode 2: $S_1 \to S_3 \to S_5 \to S_F$, return = $1 + 0 + 10 = 11$

- Episode 3: $S_1 \to S_3 \to S_4 \to S_F$, return = $2$

- Episode 4: $S_1 \to S_3 \to S_4 \to S_F$, return = $2$

After 3 episodes: $(2 + 11 + 2) / 3 = \mathbf{5}$

After 4 episodes: $(2 + 11 + 2 + 2) / 4 = \mathbf{4.25}$

True value is 2.9. Estimate is high because the rare $S_5$ outcome (10% probability) appeared in 1/3 of our episodes.

Deriving the Incremental Update Rule

From the averaging formula, we can derive an incremental update. If $V_{T-1}(S_1) = 5$ after 3 episodes, and episode 4 gives return $R_T = 2$:

\[V_T(S_1) = \frac{(T-1) \cdot V_{T-1}(S_1) + R_T}{T}\]Rearranging algebraically:

\[\boxed{V_T(s) = V_{T-1}(s) + \alpha_T \left[ G_T(s) - V_{T-1}(s) \right]}\]where $\alpha_T = \frac{1}{T}$ is the learning rate and $G_T(s)$ is the return (discounted sum of rewards) observed for state $s$ in episode $T$. This looks exactly like the perceptron update rule: move the estimate a little bit in the direction of the error $(G_T - V_{T-1})$.

Learning Rate Properties

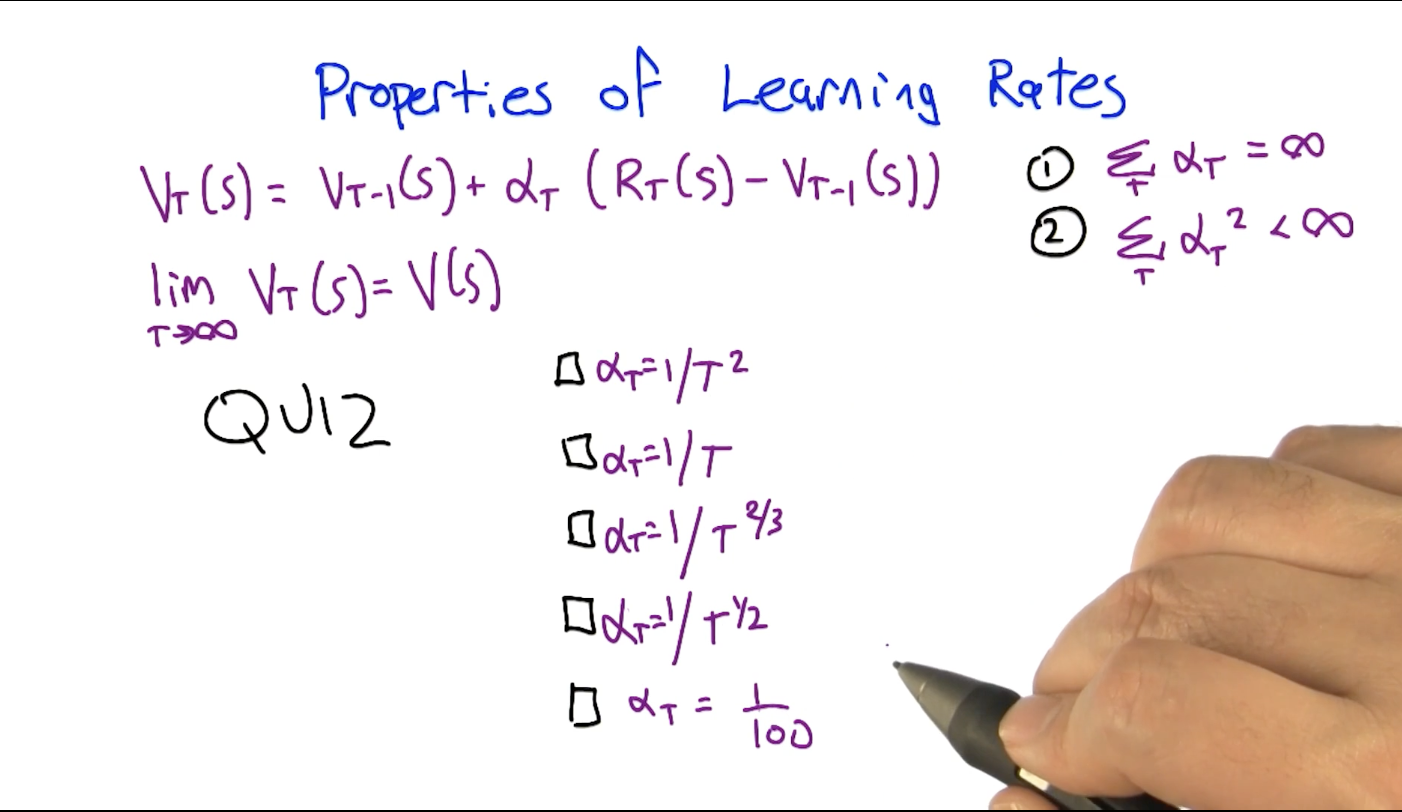

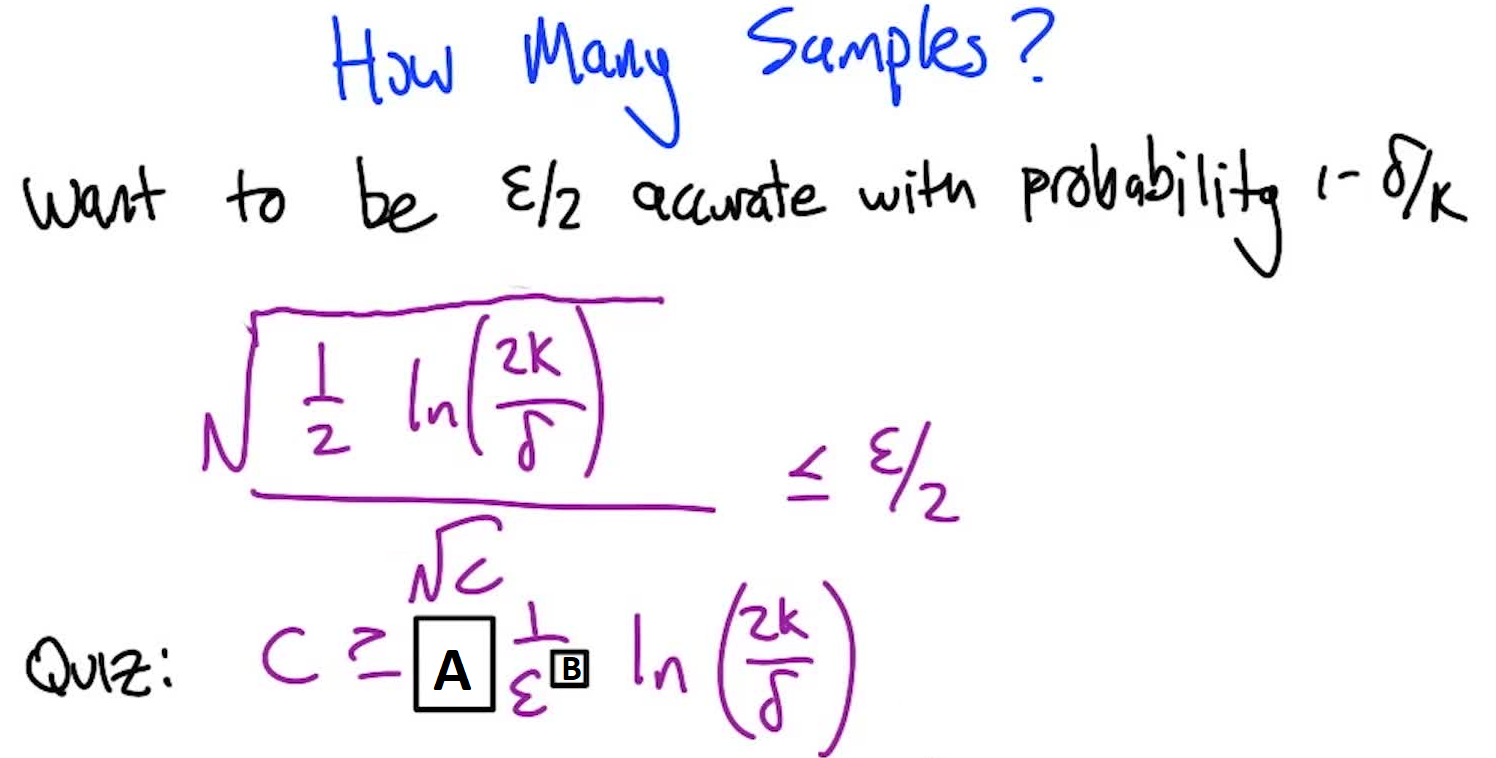

For the update rule to converge to the true expectation, the learning rate sequence ${\alpha_T}$ must satisfy:

\[\sum_{T=1}^{\infty} \alpha_T = \infty \quad \text{(can reach any value)} \qquad \sum_{T=1}^{\infty} \alpha_T^2 < \infty \quad \text{(noise dampens out)}\]Quiz 3: Which learning rate sequences satisfy both properties?

$\alpha_T$ $\sum \alpha_T$ $\sum \alpha_T^2$ Valid? $1/T^2$ $< \infty$ (converges to $\pi^2/6$) $< \infty$ No (sum too small) $1/T$ $= \infty$ (harmonic series) $< \infty$ Yes $1/T^{2/3}$ $= \infty$ $1/T^{4/3} < \infty$ Yes $1/\sqrt{T}$ $= \infty$ $1/T = \infty$ No (squares diverge) constant $c$ $= \infty$ $= \infty$ No (never converges) Rule of thumb: exponent on $T$ must be in $(1/2, 1]$ for both conditions to hold.

Note: A constant learning rate $\alpha$ fails the convergence conditions but is widely used in practice (e.g. in deep RL) because it allows continuous adaptation to non-stationary environments.

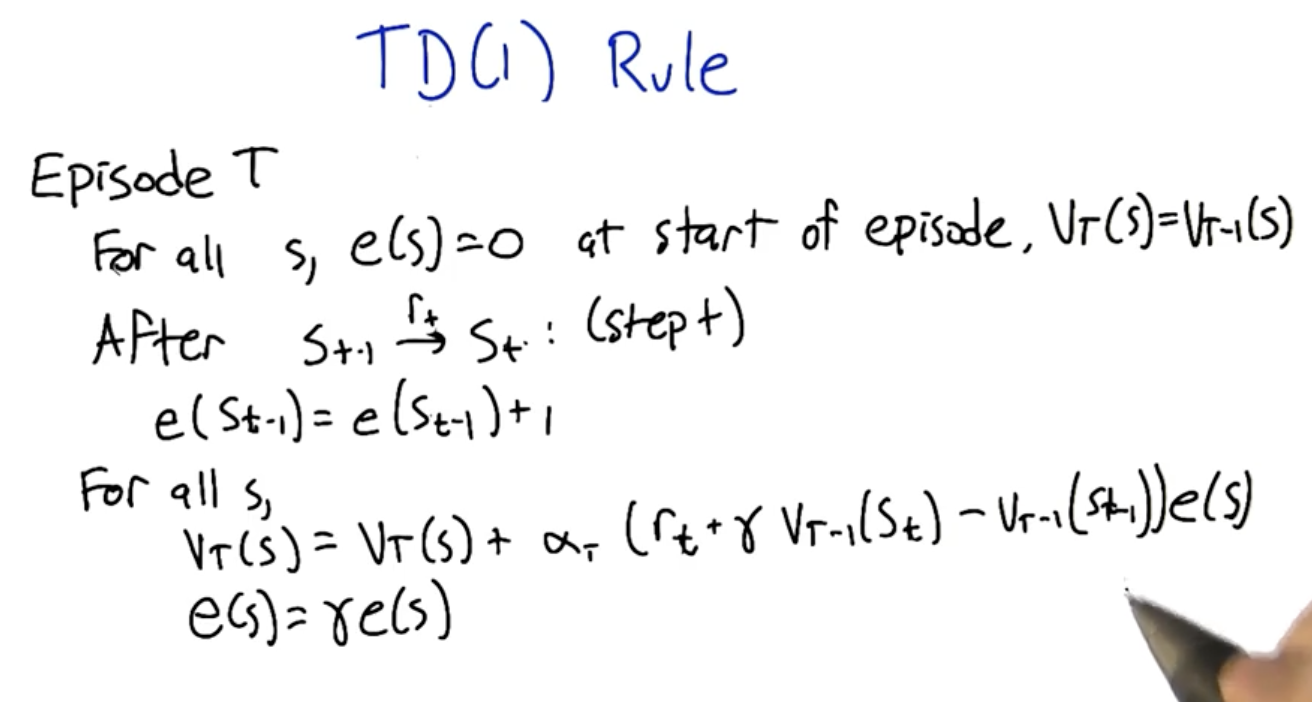

TD(1): Outcome-Based Updates with Eligibility Traces

TD(1) uses eligibility traces $e(s)$ to propagate TD errors to all previously visited states:

TD(1) Algorithm:

- At episode start: $e(s) = 0$ for all $s$; $V_T \leftarrow V_{T-1}$

- For each transition $s_{t-1} \xrightarrow{r_t} s_t$:

- Update eligibility: $e(s_{t-1}) \leftarrow e(s_{t-1}) + 1$

- Compute TD error: $\delta_t = r_t + \gamma V_{T-1}(s_t) - V_{T-1}(s_{t-1})$

- Update all states: $V_T(s) \leftarrow V_T(s) + \alpha_T \cdot \delta_t \cdot e(s)$

- Decay eligibilities: $e(s) \leftarrow \gamma \cdot e(s)$ for all $s$

Key property: Through telescoping cancellation of intermediate $V$ terms, TD(1) updates are equivalent to outcome-based (Monte Carlo) updates: the total update for a state equals $\alpha_T \cdot (G_t - V_{T-1}(s))$ where $G_t$ is the actual discounted return.

Advantage over pure MC: When states are visited multiple times in an episode, TD(1) incorporates what was learned during the episode (intra-episode learning), while outcome-based updates ignore mid-episode information.

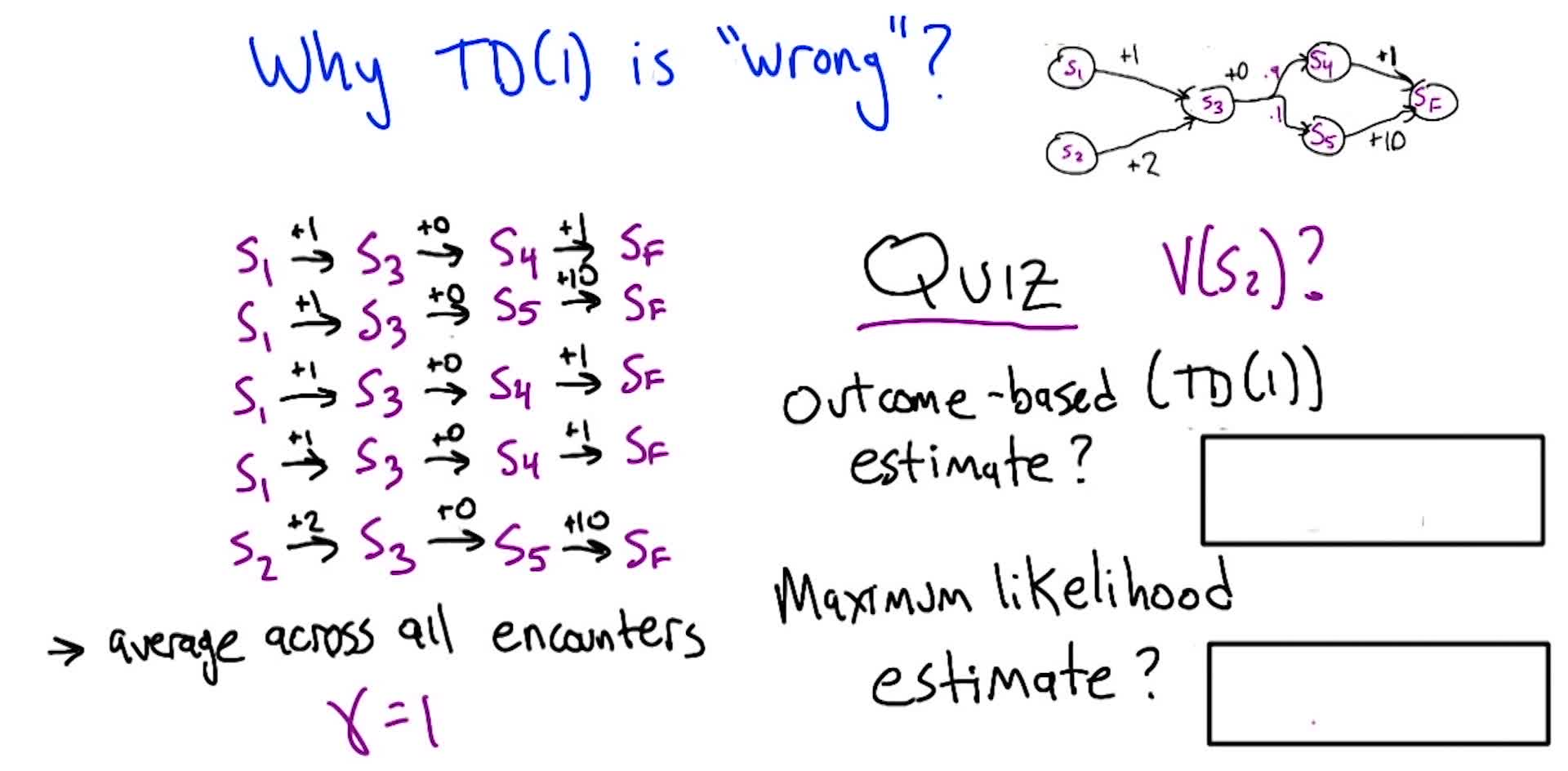

TD(1) vs Maximum Likelihood: Why TD(1) Can Be Wasteful

Quiz 4: Given 5 episodes, compute the TD(1) outcome-based estimate and the maximum likelihood estimate for $V(S_2)$ ($\gamma = 1$):

TD(1) / Outcome-based: $S_2$ appears in only 1 episode with return $2 + 0 + 10 = 12$. Average of 1 sample: $\mathbf{12}$.

Maximum Likelihood: Use all 5 episodes to estimate transition probabilities: $P(S_3 \to S_4) = 3/5 = 0.6$, $P(S_3 \to S_5) = 2/5 = 0.4$. Then: $V(S_3) = 0.6 \times 1 + 0.4 \times 10 = 4.6$, so $V(S_2) = 2 + 4.6 = \mathbf{6.6}$.

True value: $V(S_2) = 3.9$. TD(1) only used 1/5 trajectories. ML used all 5 (information from $S_1$-starting episodes improved $S_3$’s estimate, which improved $S_2$’s estimate). ML is closer because it uses more data.

TD(0): Bootstrapping for Better Data Efficiency

\[V(s_{t-1}) \leftarrow V(s_{t-1}) + \alpha \left[ r_t + \gamma V(s_t) - V(s_{t-1}) \right]\]Indexing convention note: This course uses $s_{t-1} \xrightarrow{r_t} s_t$ (transition from $s_{t-1}$, receiving $r_t$, landing in $s_t$). Sutton & Barto use $s_t \xrightarrow{r_{t+1}} s_{t+1}$ instead. They are equivalent: just a shift of indices. If an exam question uses $t$/$t+1$ notation, mentally substitute $s_{t-1} \to s_t$ and $s_t \to s_{t+1}$.

TD(0) updates using only the immediate reward + estimated value of next state (one-step lookahead). The key terms:

- TD Target: $r_t + \gamma V(s_t)$

- TD Error: $\delta_t = r_t + \gamma V(s_t) - V(s_{t-1})$

Why TD(0) gives the maximum likelihood estimate: When TD(0) is run repeatedly over finite data, the expected update averages transitions according to their observed frequencies. This is equivalent to building an ML model and solving it, but without explicitly constructing the model. TD(1) cannot do this because it only uses literal episode returns.

See also: David Silver RL Lecture 4: TD vs MC comparison — TD consistently achieves lower error faster than MC for appropriately chosen step sizes, because TD bootstraps: information about valuable states propagates backward immediately rather than waiting for complete episodes.

TD($\lambda$): Unifying TD(0) and TD(1)

TD($\lambda$) generalizes both by introducing $\lambda \in [0, 1]$ into the eligibility decay:

\[e(s) \leftarrow \lambda \gamma \cdot e(s) \quad \text{(was } \gamma \cdot e(s) \text{ in TD(1))}\]- $\lambda = 0$: eligibility immediately zeroes out after use → only update the most recent state → TD(0)

- $\lambda = 1$: eligibility decays by $\gamma$ only → full trace back to episode start → TD(1)

- $0 < \lambda < 1$: intermediate behavior, blending both

K-Step Estimators (Forward View)

TD($\lambda$) can also be understood as a weighted combination of k-step estimators:

| Estimator | Formula | Description |

|---|---|---|

| $E_1$ (TD(0)) | $r_t + \gamma V(s_{t+1})$ | 1 real reward + estimate |

| $E_2$ | $r_t + \gamma r_{t+1} + \gamma^2 V(s_{t+2})$ | 2 real rewards + estimate |

| $E_k$ | $\sum_{i=0}^{k-1} \gamma^i r_{t+i+1} + \gamma^k V(s_{t+k})$ | $k$ real rewards + estimate |

| $E_\infty$ (TD(1)) | $\sum_{i=0}^{\infty} \gamma^i r_{t+i+1}$ | All rewards, no estimate |

TD($\lambda$) weights these estimators geometrically:

\[\text{Weight on } E_k = (1 - \lambda) \lambda^{k-1}\]These weights sum to 1 (geometric series). When $\lambda = 0$: all weight on $E_1$. When $\lambda = 1$: all weight on $E_\infty$.

See: David Silver RL Lecture 4: $\lambda$-return weighting function and eligibility traces illustration for visual depictions of this weighting and how eligibility traces work.

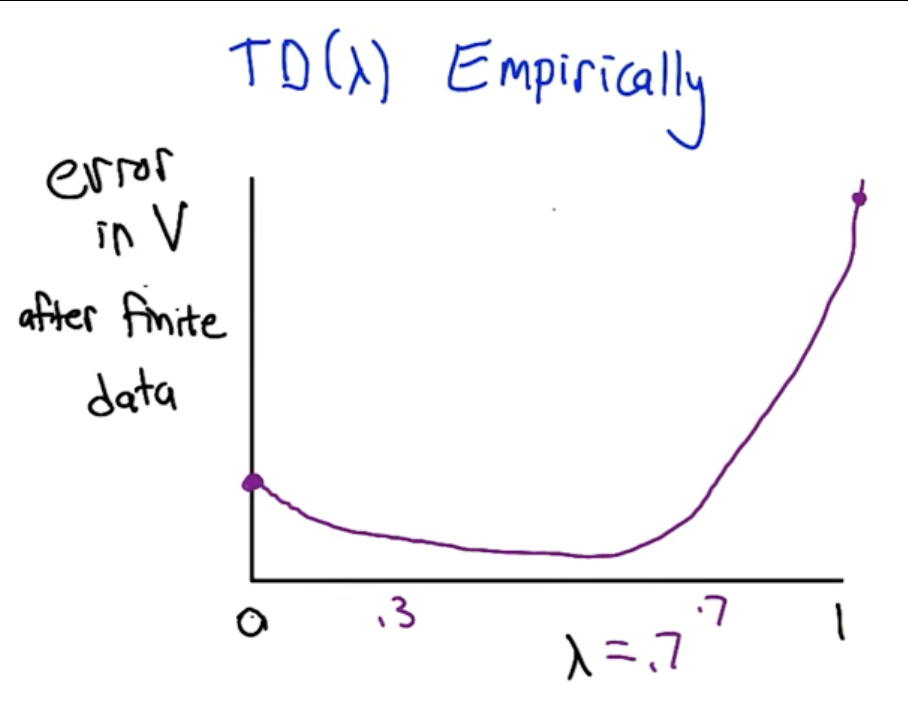

Empirical Performance of TD($\lambda$)

On finite data, the error curve as a function of $\lambda$ is typically U-shaped: TD(0) has some error, TD(1) has more error (high variance from using only episode returns), and intermediate values $\lambda \in [0.3, 0.7]$ often achieve the lowest error by combining the benefits of both:

- From TD(0): rapid information propagation via bootstrapping

- From TD(1): unbiased estimates that use actual rewards

In practice, $\lambda \in [0.3, 0.7]$ is commonly used for prediction tasks. For control (action selection), $\lambda = 0$ often works better because bootstrapping is more useful when you need to quickly propagate reward information for decision-making.

Exploration vs Exploitation

The exploration-exploitation trade-off arises because the agent doesn’t have access to the MDP: it must discover $T$ and $R$ through samples.

- Exploration: trying different actions to gain information. Necessary cost to learn, but may yield poor immediate rewards.

- Exploitation: maximizing reward based on current estimates. Risks sub-optimal decisions if estimates are wrong.

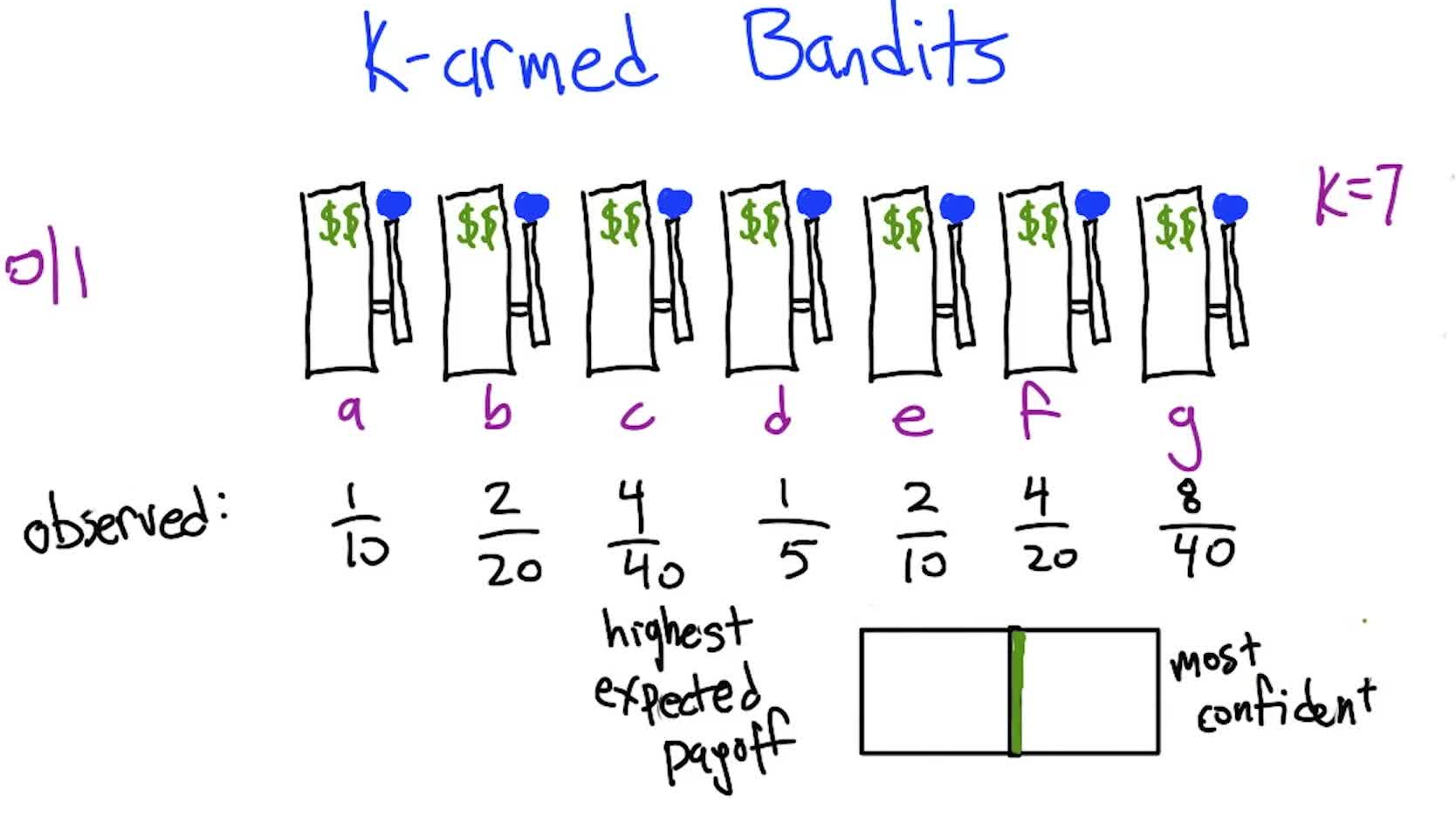

Multi-Armed Bandits

The simplest exploration/exploitation problem: one state, multiple actions (arms), unknown reward distributions. No sequential decision-making, just repeated single-step choices. The regret metric measures cumulative difference between optimal and chosen actions.

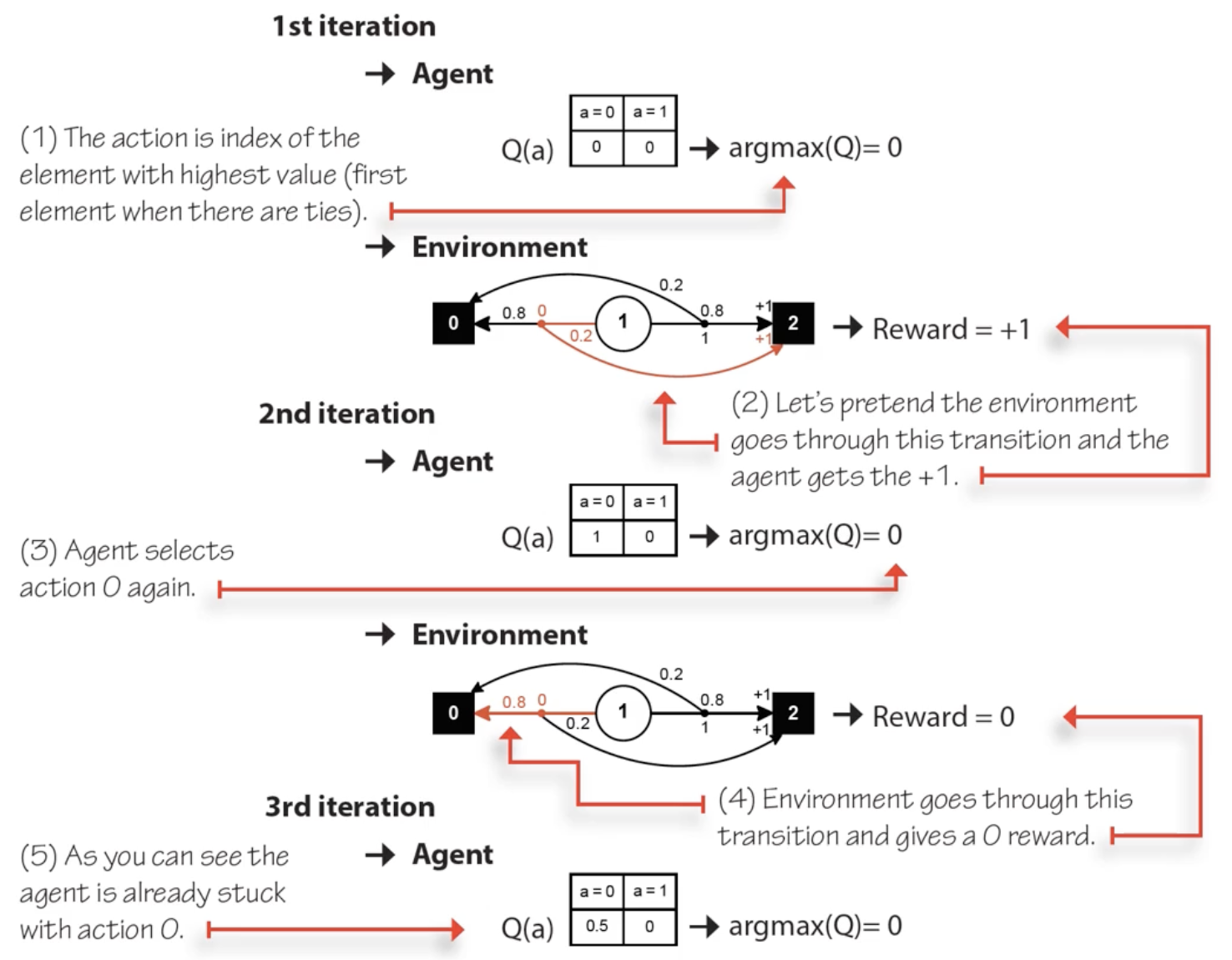

Exploration Strategies

| Strategy | Behavior | Problem |

|---|---|---|

| Greedy (always exploit) | Always pick $\arg\max_a Q(s,a)$ | Gets stuck on first “lucky” action; never discovers better alternatives |

| Random (always explore) | Pick uniformly at random | Finds true values eventually but never exploits them; high regret |

| $\varepsilon$-greedy | With probability $1-\varepsilon$: exploit (greedy). With probability $\varepsilon$: random action | Balances both; $\varepsilon$ typically 0.01–0.1 |

$\varepsilon$-greedy is surprisingly effective in practice, even in deep RL with large state/action spaces. Despite its simplicity, a small amount of random noise in action selection is often sufficient to learn near-optimal behavior.

MC vs TD: Bias-Variance Trade-off

| Property | Monte Carlo | TD(0) |

|---|---|---|

| Target | $G_t$ (actual return to episode end) | $r_{t+1} + \gamma V(s_{t+1})$ (one-step bootstrap) |

| Bias | Unbiased (uses actual rewards) | Biased (bootstraps on estimated $V$) |

| Variance | High (depends on entire trajectory) | Low (depends on one transition) |

| Updates | End of episode only | Every timestep |

| Requires episodes to end? | Yes | No (works in continuing tasks) |

| Data efficiency | Uses only visited-state returns | Propagates information via bootstrapping |

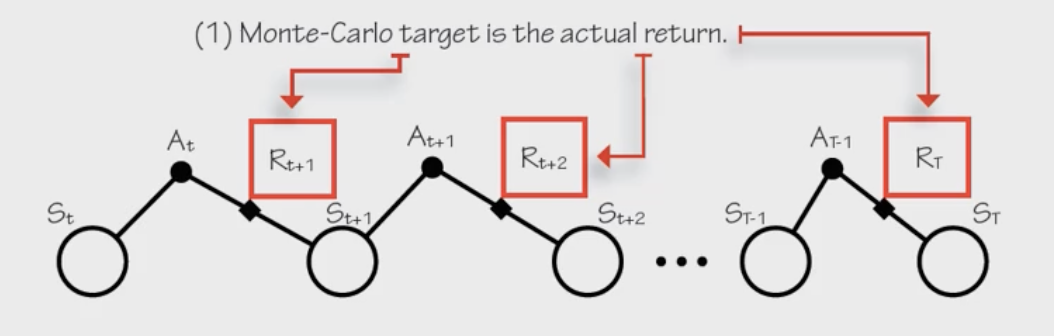

MC target: $G_t$ (actual discounted return). MC error: $G_t - V(s_t)$.

TD target: $r_{t+1} + \gamma V(s_{t+1})$. TD error: $\delta_t = r_{t+1} + \gamma V(s_{t+1}) - V(s_t)$.

From Prediction to Control

The prediction problem (estimate $V^\pi$) and the control problem (find $\pi^{*}$) combine to form the full RL problem. Control algorithms mix a prediction method with an exploration strategy:

| Algorithm | Prediction Method | Exploration | Policy Type |

|---|---|---|---|

| MC Control | Monte Carlo (full episode returns) | $\varepsilon$-greedy | On-policy |

| SARSA | TD(0) (one-step bootstrap) | $\varepsilon$-greedy | On-policy |

| Q-Learning | TD(0) (one-step bootstrap) | $\varepsilon$-greedy (but learns greedy) | Off-policy |

SARSA (On-Policy TD Control)

\[Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_{t+1} + \gamma Q(s_{t+1}, \underbrace{a_{t+1}}_{\text{actual next action}}) - Q(s_t, a_t) \right]\]The name comes from the quintuple used in each update: $(S_t, A_t, R_{t+1}, S_{t+1}, A_{t+1})$. SARSA uses the action actually taken at $s_{t+1}$ (which may be exploratory) in its update.

Q-Learning (Off-Policy TD Control)

\[Q(s_t, a_t) \leftarrow Q(s_t, a_t) + \alpha \left[ r_{t+1} + \gamma \underbrace{\max_{a'} Q(s_{t+1}, a')}_{\text{best action, regardless of what was taken}} - Q(s_t, a_t) \right]\]Q-learning uses the max over all actions at $s_{t+1}$, regardless of which action was actually selected. This is the sole difference from SARSA.

On-Policy vs Off-Policy

- On-policy (SARSA): the policy being learned is the same policy used to generate experience. Learns about the $\varepsilon$-greedy policy it’s actually following.

- Off-policy (Q-learning): learns about a different policy (the greedy policy) than the one generating experience ($\varepsilon$-greedy). Two policies: behavior policy (generates data) and target policy (being learned).

Convergence Requirements

For tabular RL algorithms to converge to optimal $Q^{*}$ and $\pi^{*}$:

1. GLIE (Greedy in the Limit with Infinite Exploration)

- All state-action pairs must be explored infinitely often

- The policy must converge to a greedy policy (i.e. $\varepsilon \to 0$)

In practice: slowly decay $\varepsilon$ toward zero. Too fast → insufficient exploration. Too slow → inefficient.

2. Learning Rate (Robbins-Monro conditions)

\[\sum_{t=1}^{\infty} \alpha_t = \infty, \qquad \sum_{t=1}^{\infty} \alpha_t^2 < \infty\](Same conditions from TD prediction apply here.)

For Q-learning specifically: convergence to $Q^{*}$ requires GLIE condition 1 (all pairs explored infinitely) + learning rate conditions. The policy doesn’t need to converge to greedy for Q-learning to find $Q^{*}$ (only for it to execute optimally), since Q-learning learns off-policy.

4. Convergence

We’ve seen that TD and Q-learning seem to work, but do they actually converge to the right answer? This section makes that rigorous: we prove that the Bellman operator is a contraction, which guarantees that value iteration and Q-learning converge to $Q^*$, and then explore what other operators share this property.

From TD to Control: Q-Learning Revisited

The Q-learning update combines two approximations simultaneously:

- Averaging over stochastic transitions: using sampled next states instead of the full expectation (handled by the learning rate conditions)

- Bellman equation contraction: using one-step lookahead with the max operator to converge toward $Q^{*}$

To prove Q-learning converges, we need to show both pieces work. The key tool: contraction mappings.

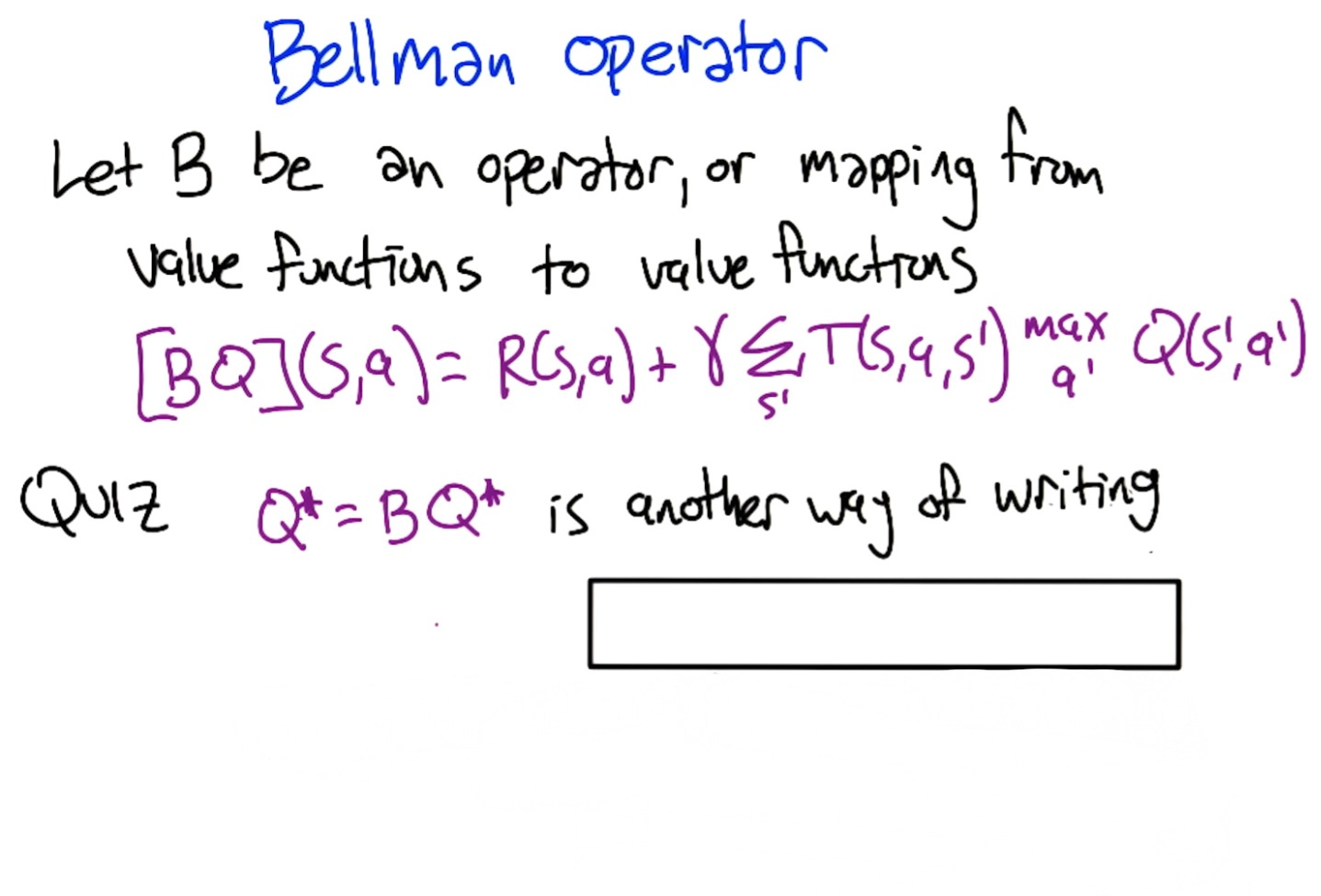

The Bellman Operator

Definition: The Bellman operator $B$ maps Q-functions to Q-functions:

\[[BQ](s,a) = R(s,a) + \gamma \sum_{s'} T(s,a,s') \max_{a'} Q(s',a')\]Give it any Q-function, it returns a new Q-function by applying one step of the Bellman equation.

Quiz 1: What are these in standard RL terminology?

- $Q^{*} = BQ^{*}$: the Bellman Equation (the fixed point equation)

- $Q_{t+1} = BQ_t$: Value Iteration (iteratively applying $B$ starting from $Q_0$)

Contraction Mappings

Definition: An operator $B$ is a contraction mapping if for all functions $F, G$ and some $\gamma < 1$:

\[\mid BF - BG\mid _\infty \leq \gamma \mid F - G\mid _\infty\]where $\mid F\mid_{\infty} = \max_{s,a} \mid F(s,a)\mid$ is the max norm (largest absolute value across all state-action pairs).

In plain terms: applying $B$ to any two functions makes them at least $\gamma$ closer together. The maximum distance between them shrinks every time.

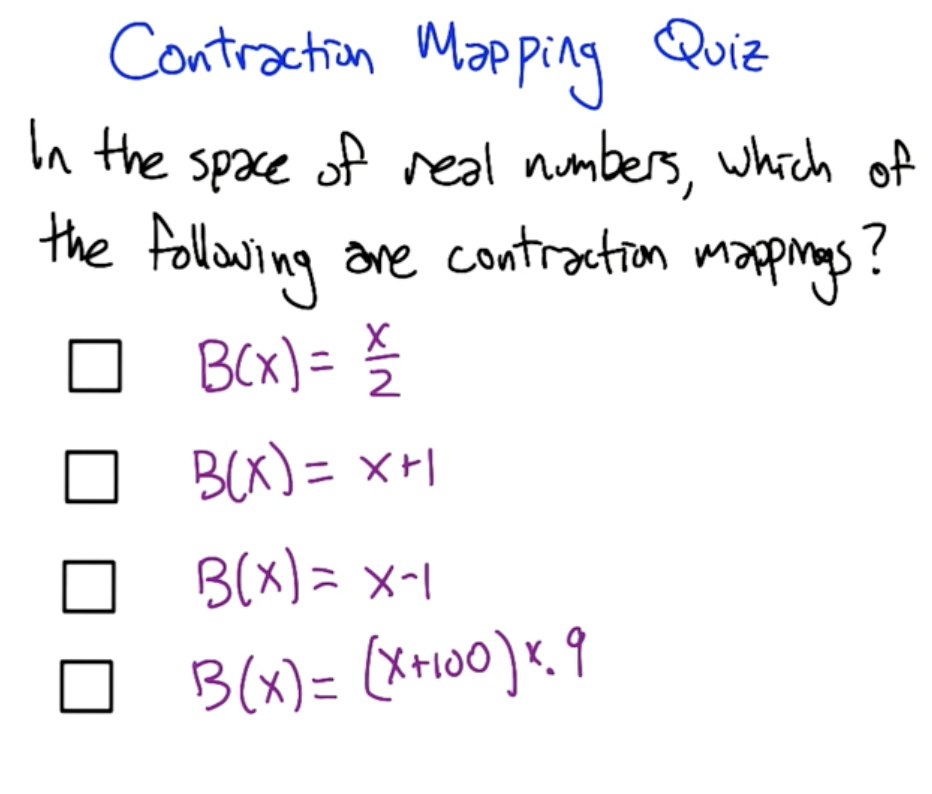

Quiz 2: Which scalar functions are contraction mappings?

$B(x)$ Contraction? Why? $x/2$ Yes $\mid Bx - By\mid = \frac{1}{2}\mid x - y\mid $, $\gamma = 0.5$ $x + 1$ No $\mid Bx - By\mid = \mid x - y\mid $, distance unchanged (translation) $x - 1$ No Same: $\mid Bx - By\mid = \mid x - y\mid $, distance unchanged $(x+100) \times 0.9$ Yes $\mid Bx - By\mid = 0.9\mid x - y\mid $, $\gamma = 0.9$ (constant cancels) Rule: multiplying by $c < 1$ contracts. Adding/subtracting constants doesn’t change distances. Multiplying by $c \geq 1$ expands.

Properties of Contraction Mappings

If $B$ is a contraction mapping, then:

- Existence & uniqueness: The fixed point equation $F^{*} = BF^{*}$ has exactly one solution

- Convergence: The sequence $F_0, F_1 = BF_0, F_2 = BF_1, \ldots$ converges to $F^{*}$ from any starting point $F_0$

Proof of convergence (sketch): Pick any $F_t$ and compare to $F^{*}$. Since $BF^{*} = F^{*}$:

\[\mid F_{t+1} - F^{\*}\mid _\infty = \mid BF_t - BF^{\*}\mid _\infty \leq \gamma \mid F_t - F^{\*}\mid _\infty\]So the distance to $F^{*}$ shrinks by at least $\gamma$ each step. After $k$ steps: $\mid F_k - F^{*}\mid \leq \gamma^k \mid F_0 - F^{*}\mid \to 0$.

Uniqueness: if two fixed points $F^{*}, G^{*}$ existed, then $\mid BF^{*} - BG^{*}\mid = \mid F^{*} - G^{*}\mid $, but contraction requires $\leq \gamma \mid F^{*} - G^{*}\mid $, so $\mid F^{*} - G^{*}\mid = 0$.

Proving the Bellman Operator is a Contraction

The key proof shows $\mid BQ_1 - BQ_2\mid _\infty \leq \gamma \mid Q_1 - Q_2\mid _\infty$:

- Expand $BQ_1 - BQ_2$: the $R(s,a)$ terms cancel, leaving only the discounted next-state values

- The $\sum_{s’} T(s,a,s’)[\cdot]$ is a weighted average, bounded by the worst-case $s’$

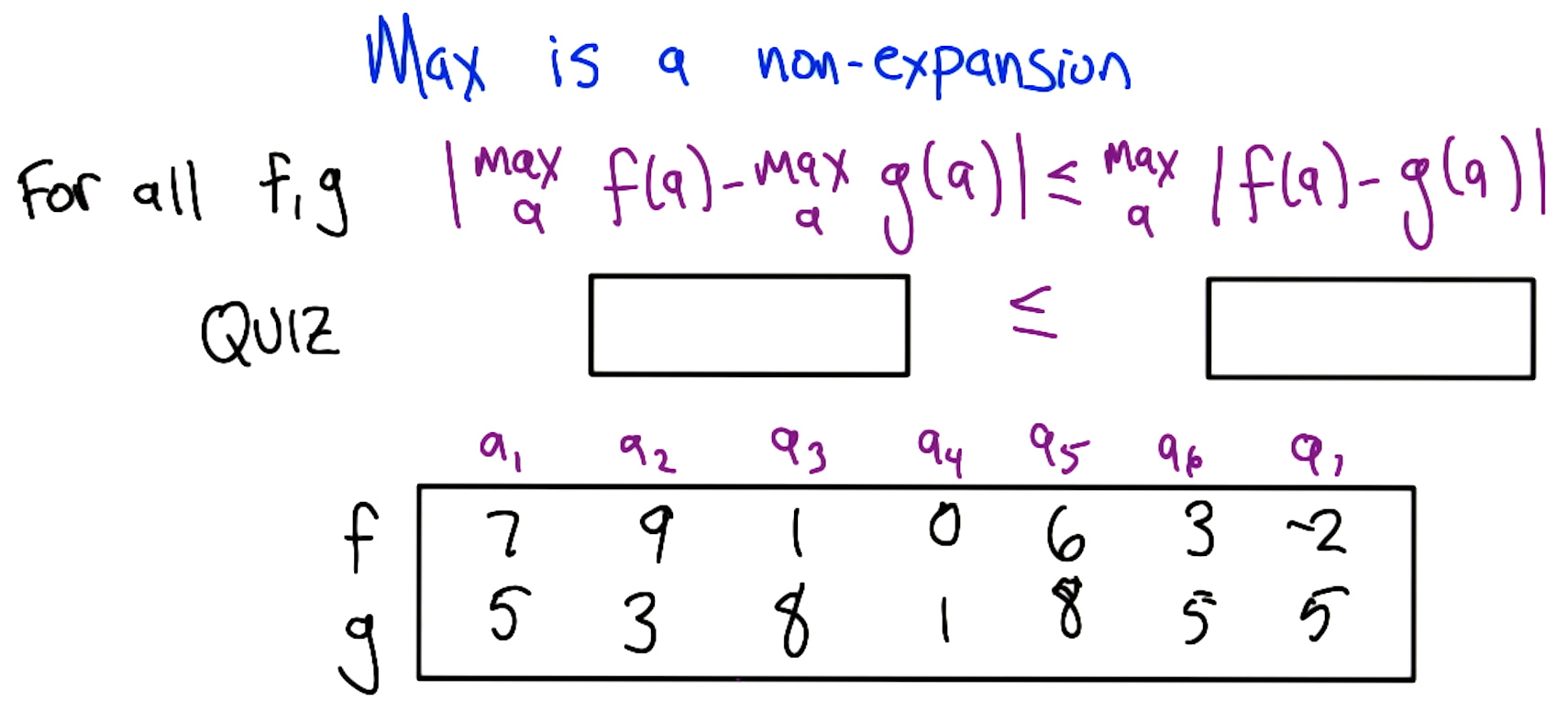

- The critical step: max is a non-expansion:

Quiz 3: Verify the non-expansion property for specific $f$ and $g$:

$\mid \max f - \max g\mid = \mid 9 - 8\mid = 1$, while $\max\mid f - g\mid = 7$. Indeed $1 \leq 7$. ✓

Proof that max is a non-expansion: WLOG assume $\max_a f(a) \geq \max_a g(a)$. Let $a_1 = \arg\max_a f(a)$ and $a_2 = \arg\max_a g(a)$. Then:

\[\max_a f(a) - \max_a g(a) = f(a_1) - g(a_2) \leq f(a_1) - g(a_1) \leq \max_a |f(a) - g(a)|\]The first $\leq$ holds because $g(a_2) \geq g(a_1)$ (since $a_2$ maximizes $g$), so replacing $a_2$ with $a_1$ can only increase the difference. The second $\leq$ holds by definition of max.

Combining: $\gamma$ from discounting × non-expansion from max = contraction. Therefore the Bellman operator is a contraction, value iteration converges, and $Q^{*}$ is unique.

Q-Learning Convergence Theorem

Q-learning converges to $Q^{*}$ given:

- Condition 1 (averaging): the update rule correctly averages over stochastic transitions when using $Q^{*}$ as the lookahead (satisfied by the learning rate conditions)

- Condition 2 (contraction): the one-step Bellman backup is a contraction (proved above)

- Condition 3 (learning rates): $\sum \alpha_t = \infty$, $\sum \alpha_t^2 < \infty$, and all state-action pairs visited infinitely often (hidden in the learning rate condition: $\alpha_t(s,a) = 0$ for unvisited pairs, so the sum can only be $\infty$ if we visit everywhere)

The proof works by decomposing the Q-learning update into two sub-operators: one that handles the stochastic averaging (condition 1) and one that handles the Bellman contraction (condition 2). Each independently satisfies its required property, and the generalized convergence theorem guarantees the combined algorithm converges.

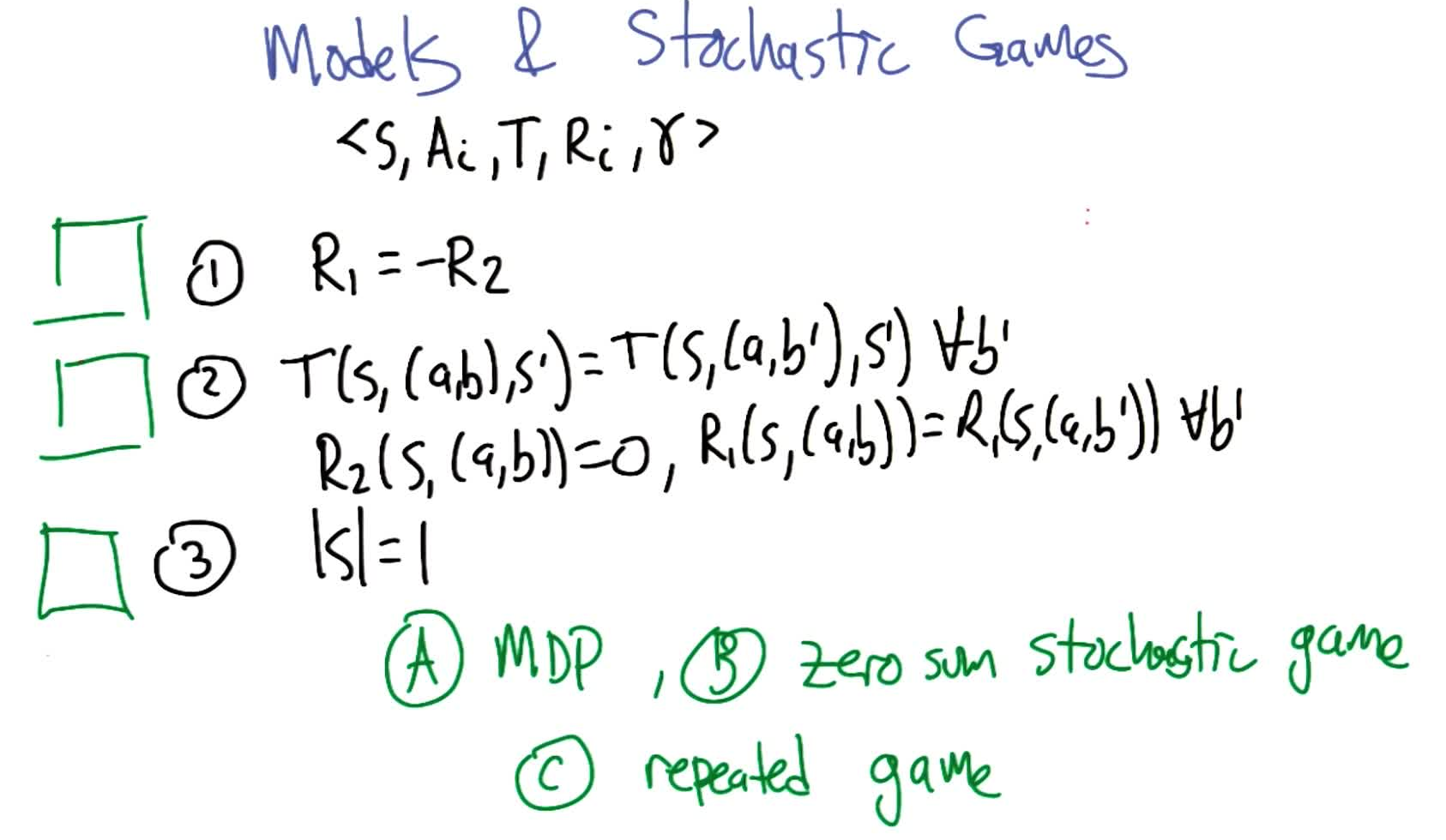

Generalized MDPs

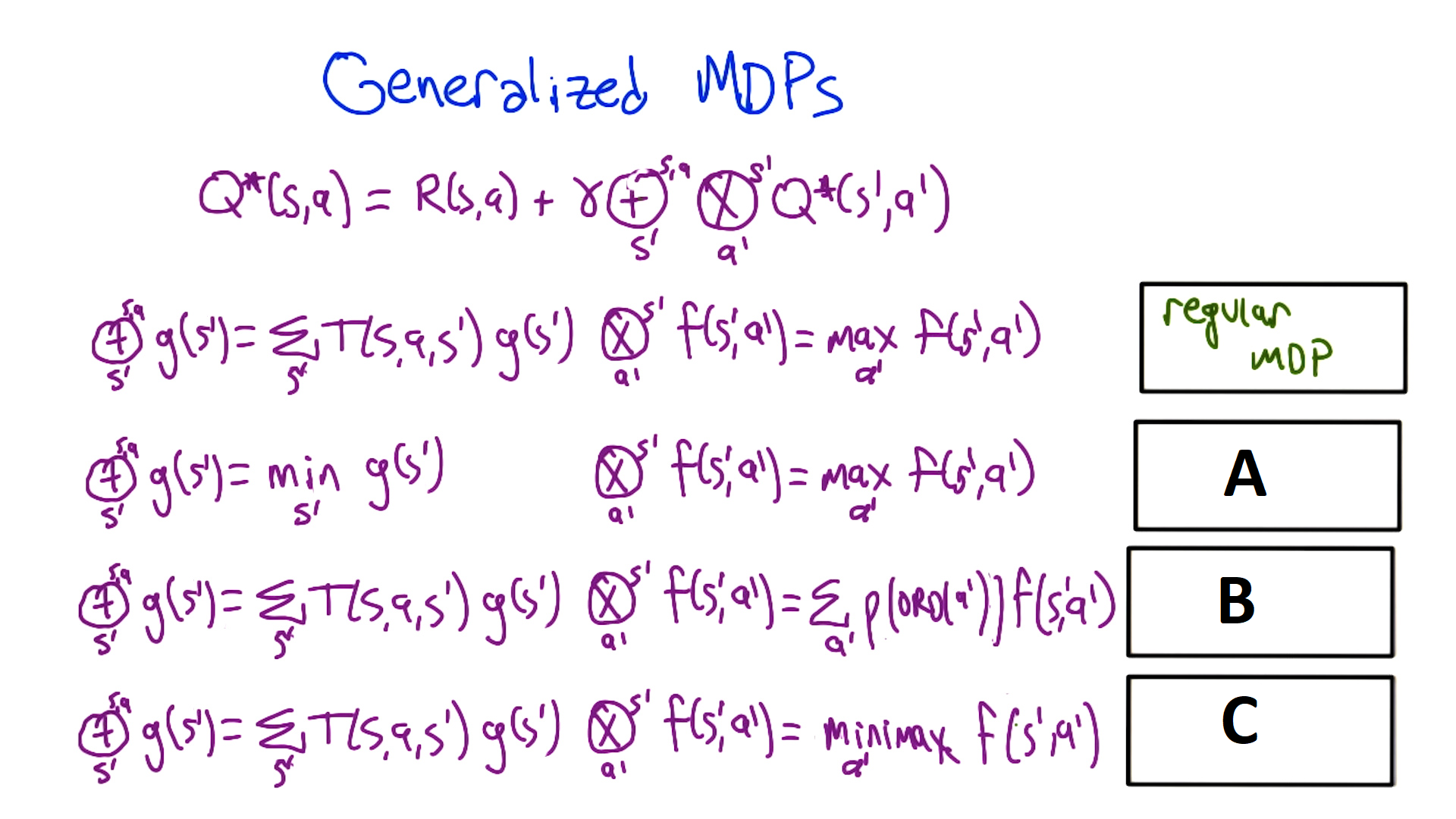

The Bellman equation can be generalized by replacing the expectation ($\sum$) and maximization ($\max$) with other operators:

\[Q^{*}(s,a) = R(s,a) + \gamma \; \underbrace{[\oplus]}_{s'} \; \underbrace{[\otimes]}_{a'} \; Q^{*}(s',a')\]Quiz 4: What decision processes result from different operator substitutions?

$\oplus$ (over $s’$) $\otimes$ (over $a’$) Result $\mathbb{E}$ (expectation) $\max$ Standard MDP $\min$ $\max$ Pessimistic / Risk-averse MDP $\mathbb{E}$ (expectation) $\rho(\text{ord})$ (rank-weighted) Exploration-sensitive MDP $\mathbb{E}$ (expectation) $\text{minimax}$ Zero-sum game

Explanations:

$\min$ over $s’$, $\max$ over $a’$ (Pessimistic/Risk-averse MDP): Instead of taking the expected next state, the environment always puts you in the worst possible next state. It’s as if the environment is adversarial: you choose the best action, then the environment chooses the worst outcome for you. Related to H∞ control in control theory. The agent learns to choose actions where the least bad thing can happen.

$\mathbb{E}$ over $s’$, $\rho(\text{ord})$ over $a’$ (Exploration-sensitive MDP): Instead of always taking the best action ($\max$), actions are weighted by their rank via a fixed convex combination $\rho$. This generalizes both $\max$ ($\rho$ puts all weight on rank 1) and $\min$ ($\rho$ puts all weight on last rank), and also captures $\varepsilon$-greedy (high weight on best, small weight on others). The values computed are on-policy: they reflect the value of the policy you’re actually following (including exploration), not the policy you could follow. Also useful when the action space is too large to compute $\max$ exactly: randomly sample a subset of actions, take the max of that sample, and the resulting rank distribution over all actions fits this framework (Tom Dietterich’s Space Shuttle scheduling work).

$\mathbb{E}$ over $s’$, minimax over $a’$ (Zero-sum game): The action $a’$ represents a joint action by two players. One player maximizes, the other minimizes. This is the standard setup for two-player zero-sum games, which we’ll cover in detail in the game theory section.

Key result: as long as the operators used are non-expansions, value iteration and Q-learning will converge to the corresponding fixed point. All of the following are non-expansions:

- $\max$, $\min$, order statistics, fixed convex combinations

Caution: if the weights of a convex combination depend on the values themselves (e.g. Boltzmann/softmax exploration), the non-expansion property can fail, and convergence is not guaranteed.

Part 2: Deep Reinforcement Learning

[Supplementary] Value-Based Deep Reinforcement Learning Methods

Why Function Approximation?

In tabular RL (Part 1), we stored a value for every state(-action) pair. This breaks down when:

- High-dimensional states: e.g. Atari frames ($210 \times 160 \times 3$ pixels → $\sim 10^{242,000}$ states)

- Continuous states: e.g. CartPole has 4 continuous observations (cart position/velocity, pole angle/velocity) → infinite state space

- Generalization needed: updating one state should improve estimates for similar states

Key insight: without function approximation, each state is independent. With it, updating one state adjusts a function (e.g. a neural network) that affects all similar states. This enables generalization but introduces interference.

Four Approaches to Deep RL

| Approach | What’s approximated | Examples |

|---|---|---|

| Value-based | Q-function or V-function | DQN, Double DQN |

| Actor-Critic | Both value network + policy network | A2C, A3C, DDPG, TD3 |

| Model-based | Transition and/or reward function | Dyna-Q, World Models |

| Derivative-free | Black-box optimization of policy | Evolutionary strategies, CMA-ES |

This lecture focuses on value-based methods.

Neural Network Architecture for RL

For value-based methods, a simple feed-forward network suffices:

- Input: state observations (e.g. 4 values for CartPole)

- Hidden layers: 1-2 layers is typically sufficient (deep networks need more data and training time)

- Output: $Q(s, a)$ for each action (one output node per action)

For this course, one hidden layer (3 layers total: input, hidden, output) works. Don’t go deeper than necessary: deeper networks require more samples to train, which means longer training times.

The training target comes from the Q-learning update: $y = r + \gamma \max_{a’} Q(s’, a’; \theta)$

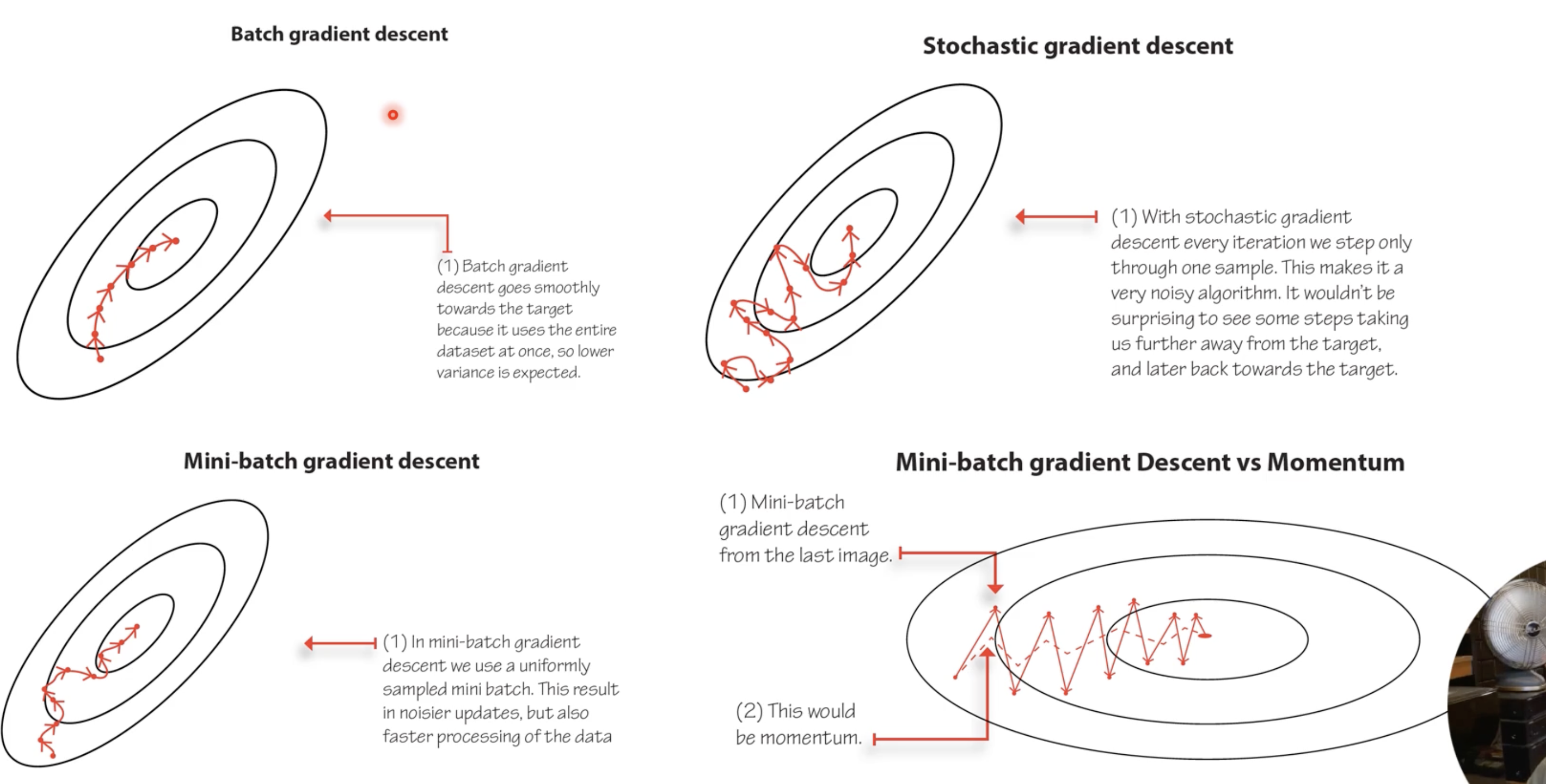

Optimization: batch gradient descent (uses full dataset) is impossible in RL since data is collected online. Use mini-batch SGD with Adam or RMSprop. Momentum-based methods smooth out noisy gradients by averaging recent gradient directions.

Critical implementation detail: when computing the loss $\mathcal{L} = (y - Q(s,a;\theta))^2$, do not backpropagate gradients through the target $y$. In PyTorch, use

.detach()on the target. Unlike supervised learning where labels are fixed numbers, here the target depends on the same network being trained.

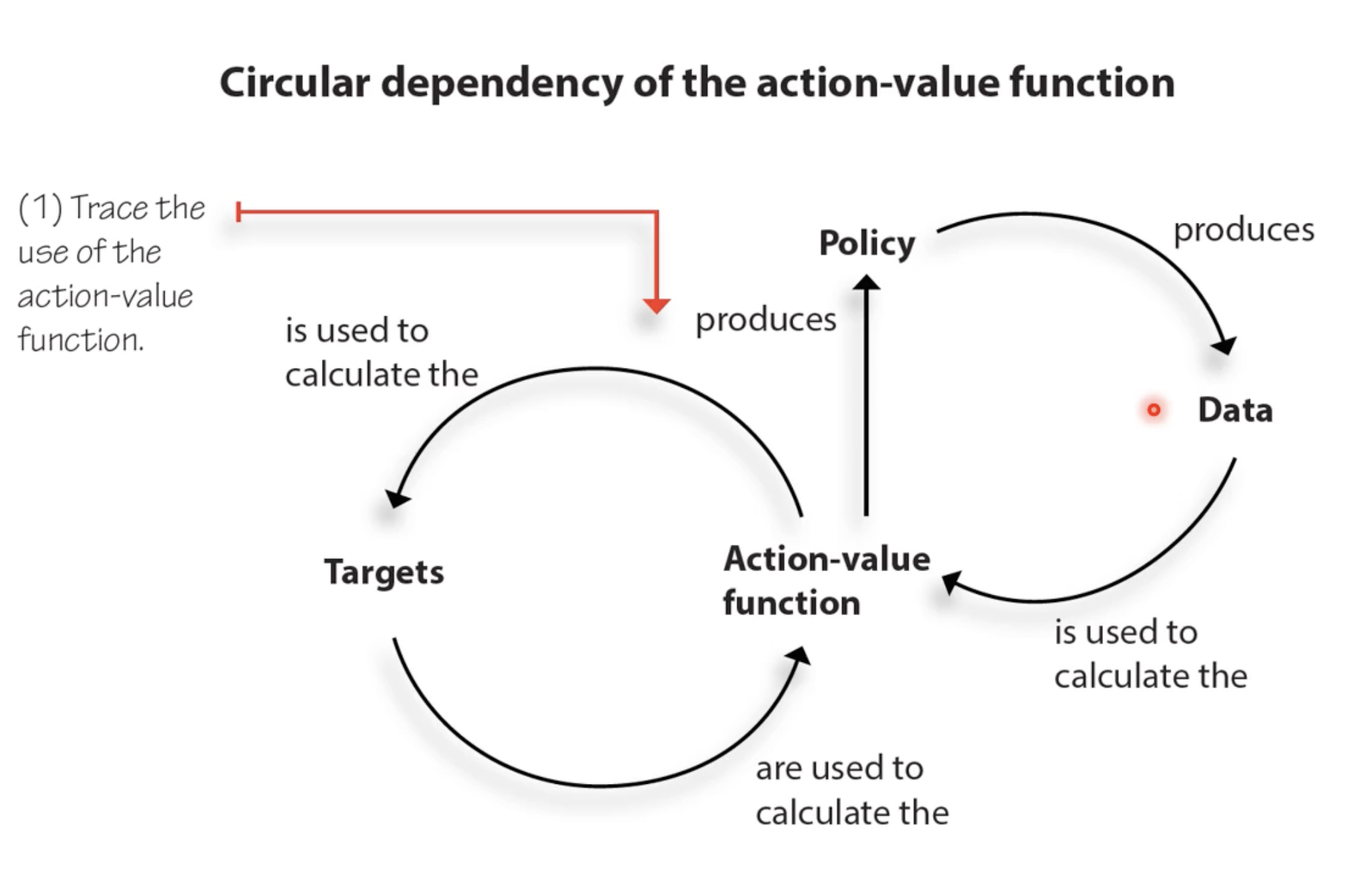

The Circular Dependency Problem: The Q-function is used to (1) produce a policy, which (2) generates data, which (3) is used to compute targets, which (4) are used to train the Q-function. This circular loop (Q → policy → data → targets → Q) is what makes deep RL fundamentally harder than supervised learning, where labels are fixed ground truth.

Two Problems with Naive Deep Q-Learning (The Deadly Triad)

The combination of function approximation + bootstrapping + off-policy learning is known as the deadly triad. When all three are present (as in naive DQN), training can diverge. The two core manifestations:

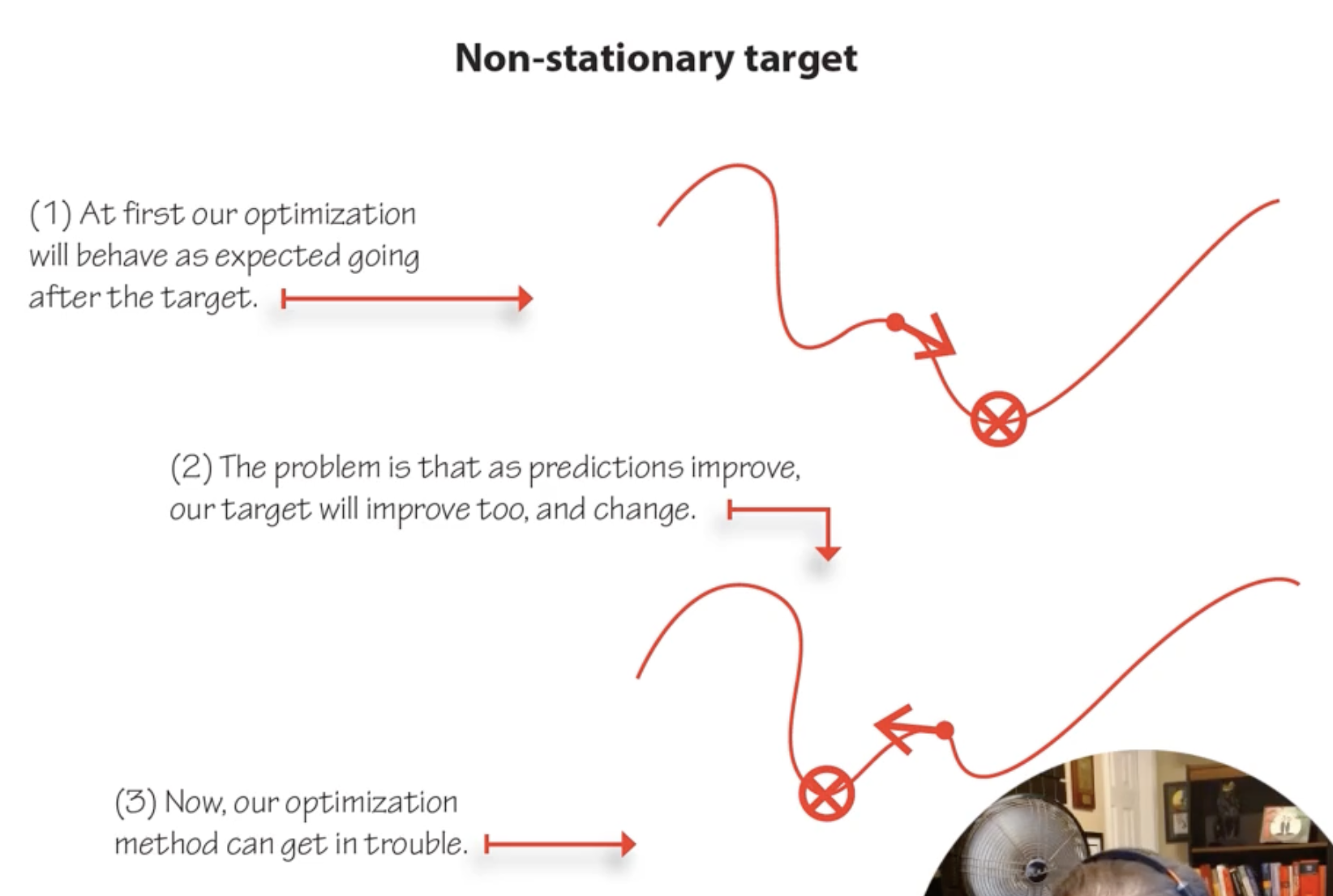

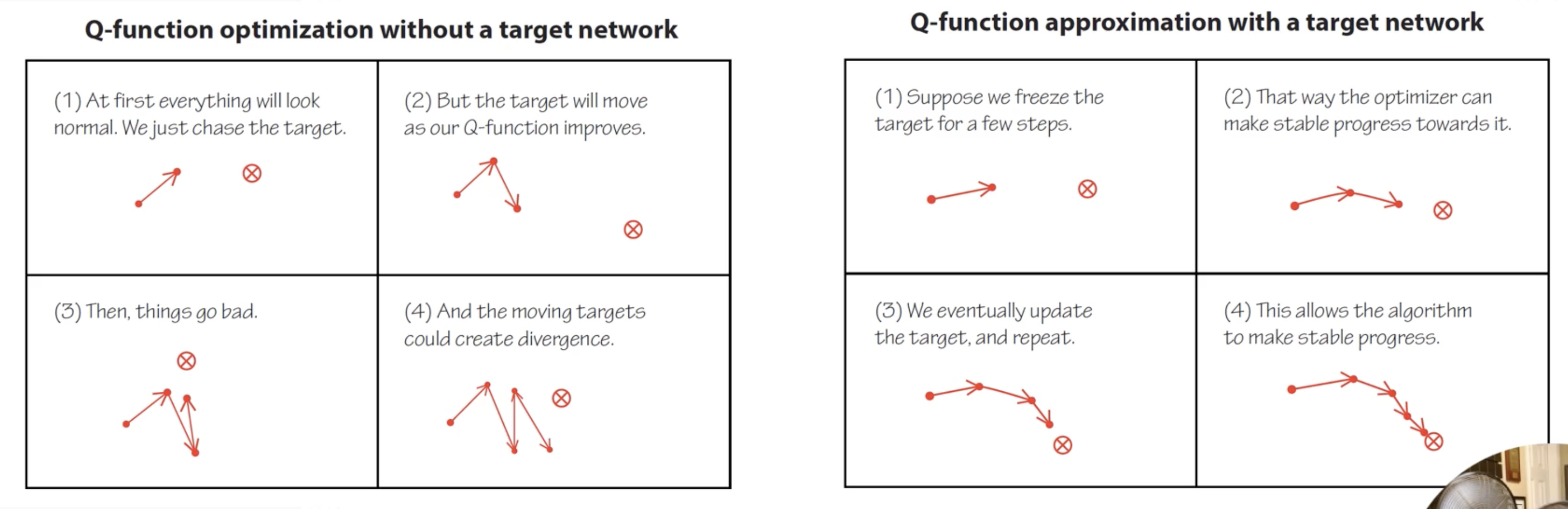

Problem 1: Non-Stationary Targets

When the network updates its weights, it changes Q-values for all states simultaneously. But the targets also depend on Q-values. So every update shifts both the prediction and the target, creating a moving target problem that can cause divergence.

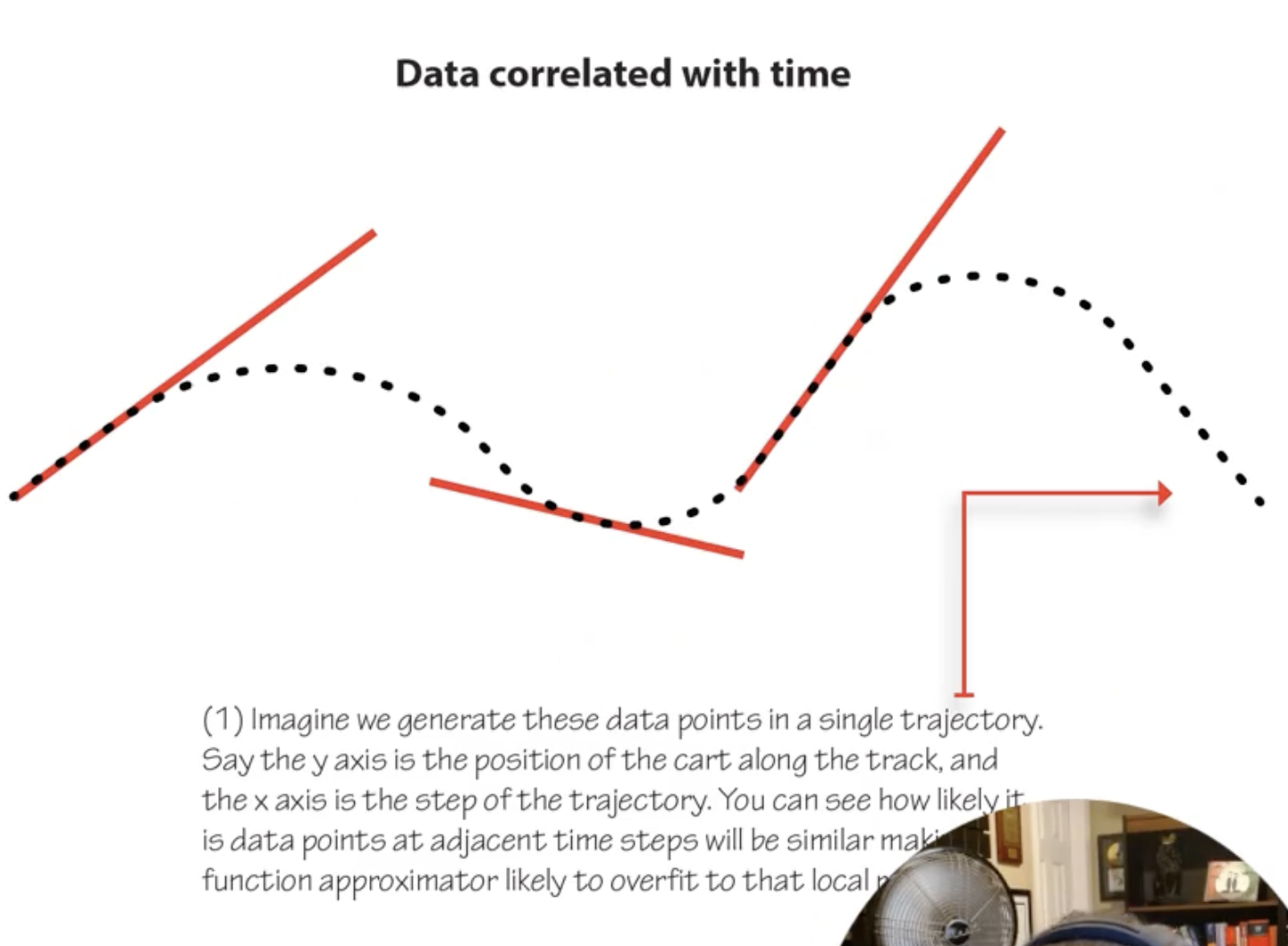

Problem 2: Correlated Data

RL data comes from sequential trajectories, not i.i.d. samples. Consecutive transitions are highly correlated (state $s_t$ and $s_{t+1}$ are similar). Standard SGD assumes i.i.d. data; violating this causes unstable training.

DQN: Deep Q-Networks (Mnih et al., 2015)

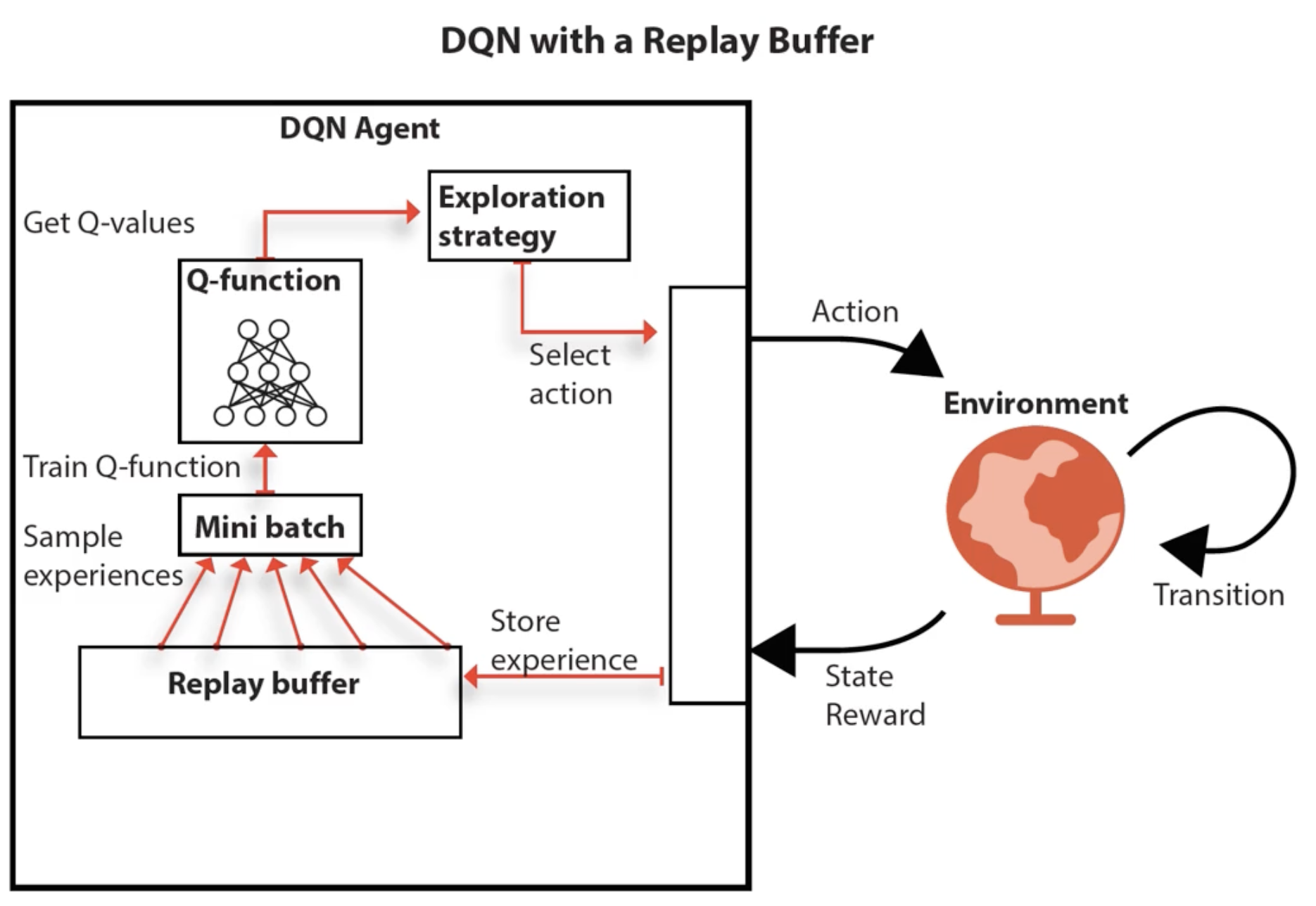

DQN addresses both problems with two key innovations:

Solution 1: Target Network

Maintain a separate copy of the Q-network ($\theta^-$) that is held fixed for many timesteps (e.g. 10,000 for Atari, 5-10 for CartPole). Use it to compute targets:

\[y = r + \gamma \max_{a'} Q(s', a'; \theta^-)\]The target network $\theta^-$ is periodically updated by copying the online network $\theta$. This stabilizes training because the target doesn’t shift on every update.

Solution 2: Experience Replay Buffer

Store all transitions $(s, a, r, s’, \text{done})$ in a replay buffer $\mathcal{D}$ (e.g. 1M samples for Atari). Instead of training on the most recent transition, sample a random mini-batch from $\mathcal{D}$:

\[\nabla_\theta \mathcal{L}(\theta) = \mathbb{E}_{(s,a,r,s') \sim \mathcal{U}(\mathcal{D})} \left[ \left( r + \gamma \max_{a'} Q(s', a'; \theta^-) - Q(s, a; \theta) \right) \nabla_\theta Q(s, a; \theta) \right]\]This breaks temporal correlations, making data approximately i.i.d. The buffer is a FIFO queue: once full, oldest samples are replaced.

DQN Training Loop:

- Agent selects action ($\varepsilon$-greedy using online network $\theta$)

- Execute action, observe $(s, a, r, s’, \text{done})$

- Store transition in replay buffer $\mathcal{D}$

- Sample mini-batch from $\mathcal{D}$

- Compute target $y$ using target network $\theta^-$

- Update online network $\theta$ via gradient descent on $(y - Q(s,a;\theta))^2$

- Every $C$ steps: copy $\theta \to \theta^-$

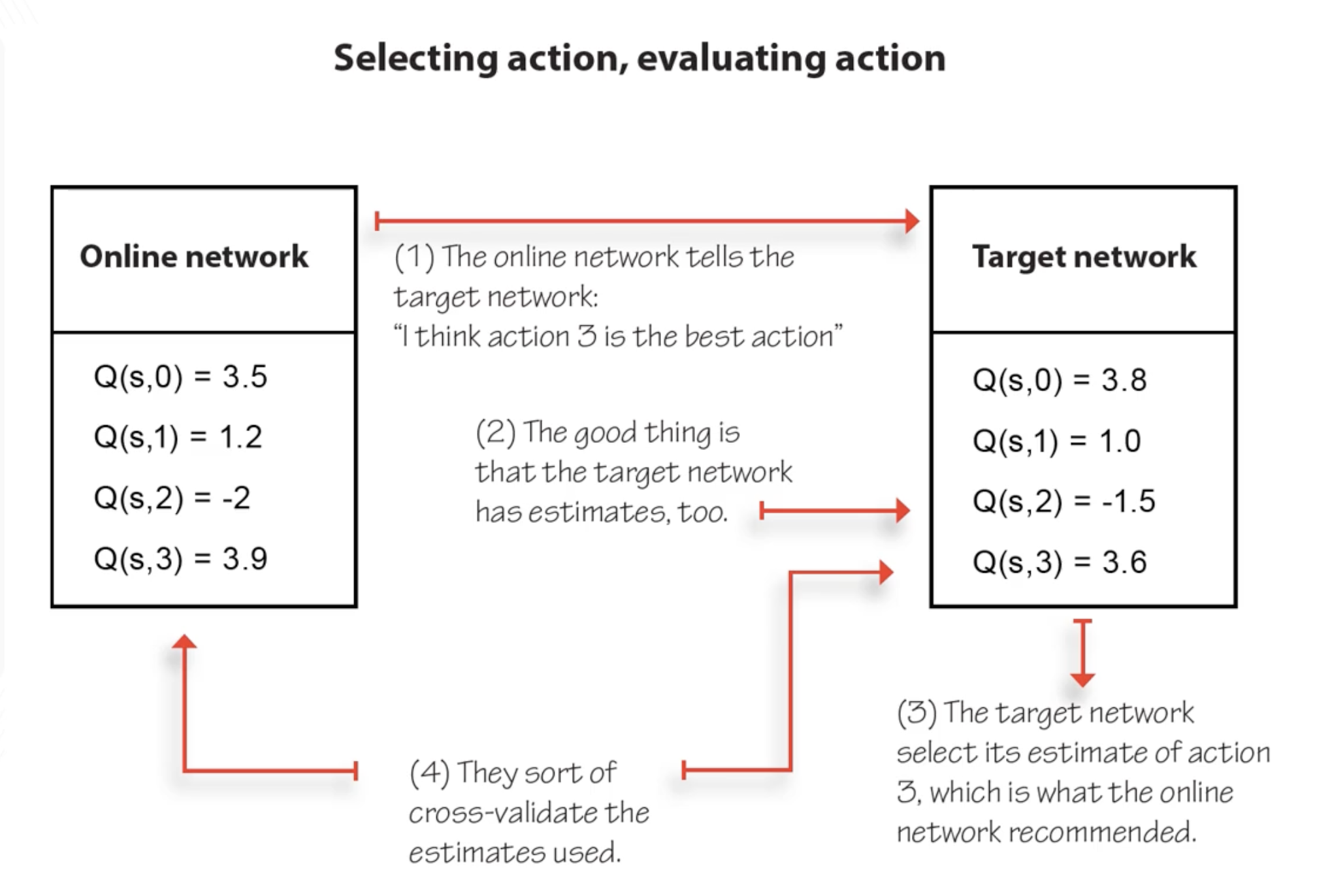

Double DQN (DDQN): Fixing Overestimation

DQN overestimates Q-values because $\max$ over noisy estimates has a positive bias: $\mathbb{E}[\max_a \hat{Q}(s,a)] \geq \max_a \mathbb{E}[\hat{Q}(s,a)]$.

Key insight (unwrapping the max): notice that $\max_{a’} Q(s’, a’; \theta^-)$ is the same as $Q(s’, \arg\max_{a’} Q(s’, a’; \theta^-); \theta^-)$. We’re using the same network to both select the best action and evaluate its value. This coupling is what causes the overestimation: the network picks actions it’s biased toward, then evaluates them with the same bias.

Fix: decouple action selection from action evaluation using the two networks:

\[y_{\text{DQN}} = r + \gamma \max_{a'} Q(s', a'; \theta^-) \quad \longrightarrow \quad y_{\text{DDQN}} = r + \gamma Q\left(s', \underbrace{\arg\max_{a'} Q(s', a'; \theta)}_{\text{online selects}}, \theta^-\right)\]- Online network $\theta$: selects which action is best ($\arg\max$)

- Target network $\theta^-$: evaluates the value of that action

This is a form of cross-validation: if the online network’s $\arg\max$ disagrees with the target network’s ranking, the overestimation is reduced. DDQN is trivial to implement (one line change from DQN) and strictly better in practice.

DDQN Practical Notes (CartPole):

- Use Huber loss (smooth L1) instead of MSE: equivalent to “clipping” gradients to a max value, preventing large destabilizing updates. In PyTorch, set

max_gradient_normvariable tofloat('inf')if using MSE to get equivalent behavior.- Decaying ε-greedy: start $\varepsilon = 1.0$, decay to $\sim 0.3$ over ~20,000 steps

- Replay buffer: 320 samples min, 50,000 max, mini-batch size 64

- Target network: freeze for 15 steps, then full copy

- Optimizer: RMSprop or Adam, learning rate ~0.0007 (higher than vanilla DQN due to double learning stability)

- Vanilla DQN can diverge on some random seeds without double learning; DDQN is more robust

Further Extensions (Rainbow)

| Extension | What it fixes |

|---|---|

| Dueling Networks | Separate estimation of state value $V(s)$ and advantage $A(s,a)$ |

| Prioritized Experience Replay | Sample important transitions more often (high TD error) |

| Noisy Nets | Learned exploration via noisy network parameters |

| Distributional RL | Learn distribution of returns, not just expected value |

| Multi-step returns | Use $n$-step TD targets instead of 1-step |

The Rainbow paper (Hessel et al., 2017) combines all of the above into one agent and shows they’re complementary. For this course, DQN + Double DQN is sufficient.

5. Generalization

In all the RL we’ve done so far, learning in one state tells us nothing about any other state. But real problems have millions of states, and we can’t visit them all. Generalization lets us leverage what we’ve learned in visited states to make predictions about states we haven’t seen — the same core idea as supervised machine learning, now applied within RL.

States as Features

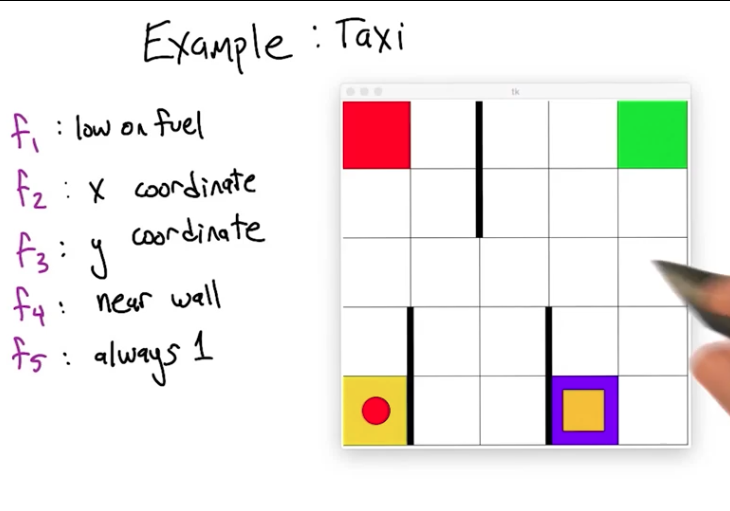

To generalize, we need a notion of similarity between states. Instead of treating states as unanalyzed blobs (state 17, state 42…), we describe them via features: properties that capture similarity between states.

In the taxi problem (~500 states), features might include: x-coordinate, y-coordinate, distance to passenger, near wall, etc. States that share feature values are considered similar, enabling generalization. The choice of features defines the inductive bias: which states look similar to the learner.

Features should be both computationally efficient (easy to extract from state) and value informative (actually predictive of returns). The ideal feature would encode the value function itself, but then there’s nothing to learn. The worst feature is one that’s easy to compute but tells you nothing about value.

What to Approximate

| Function | Maps | Generalization means… |

|---|---|---|

| Policy $\pi(s)$ | states → actions | Similar states → similar actions |

| Value function $Q(s,a)$ | state-actions → returns | Similar state-actions → similar returns |

| Model $T(s,a,s’)$ | state-actions → next states | Standard supervised learning (actual labels!) |

Most research focuses on value function approximation because it’s the natural intermediate representation. Model learning gives true supervised examples (you observe $(s,a) \to s’$), but accurate multi-step prediction is hard. Policy approximation is used in policy gradient methods (robotics).

General Update Rule with Function Approximation

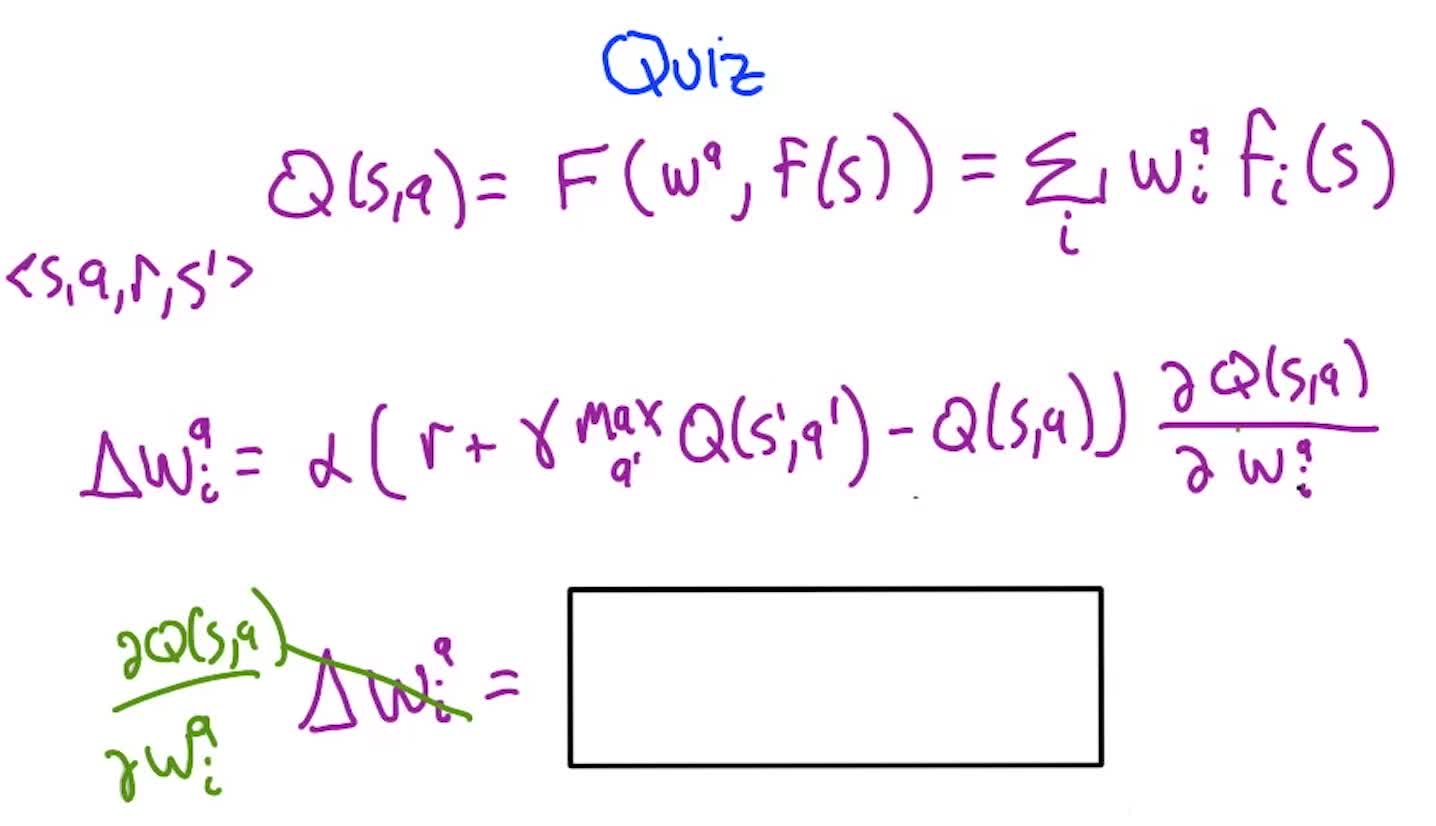

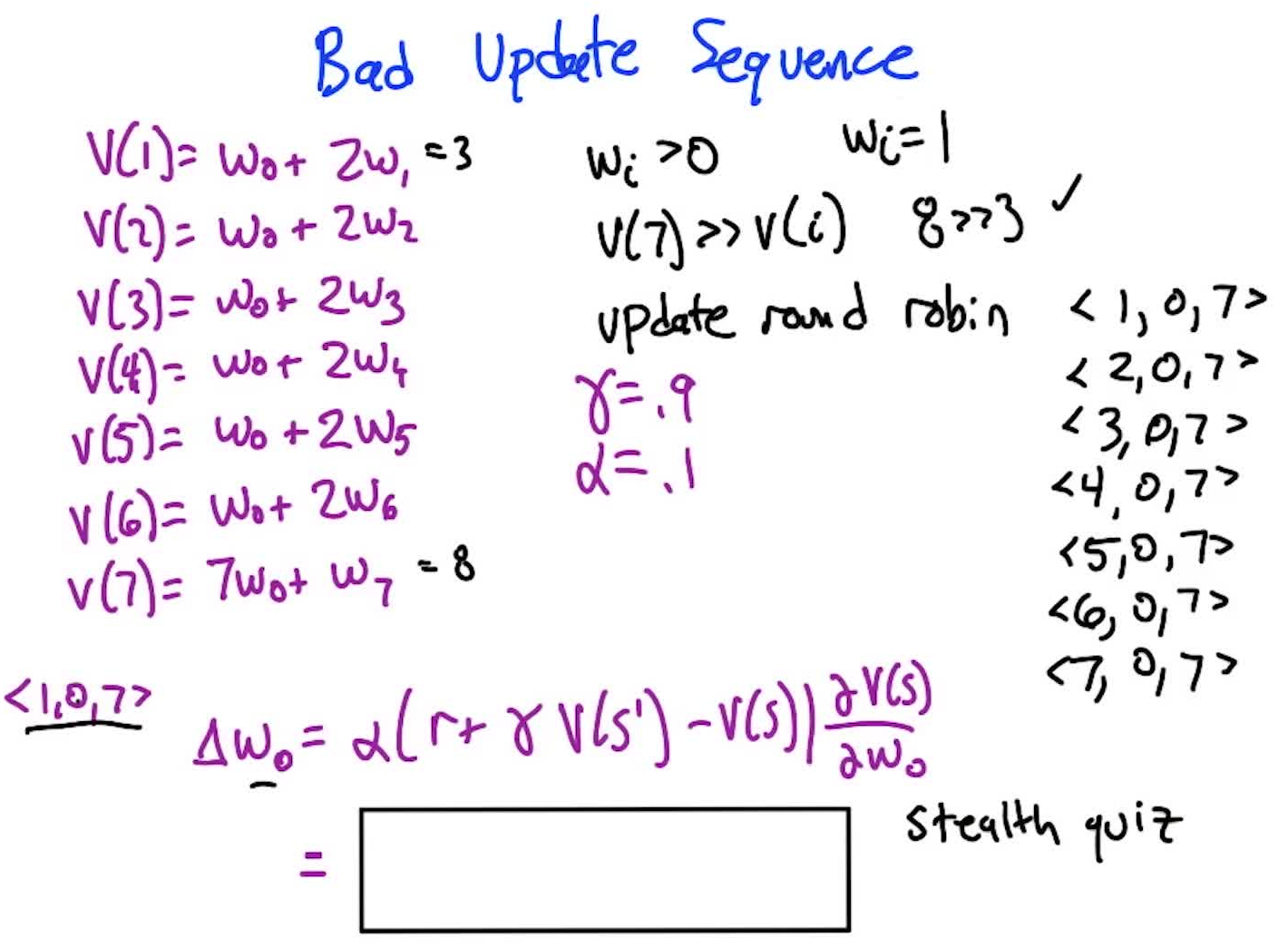

Represent $Q(s,a)$ via parameters $\mathbf{w}$: $Q(s,a) = f(s, \mathbf{w}^a)$. The update rule becomes:

\[w_i^a \leftarrow w_i^a + \alpha \underbrace{\left[r + \gamma \max_{a'} Q(s', a') - Q(s, a)\right]}_{\text{TD error}} \cdot \underbrace{\frac{\partial Q(s,a)}{\partial w_i^a}}_{\text{gradient}}\]This is the standard gradient descent rule: move weights in the direction that reduces the TD error, scaled by how much each weight influences the prediction. Same structure as the perceptron update.

Key difference from supervised learning: the target $r + \gamma \max_{a’} Q(s’, a’)$ is not a true label. It’s bootstrapped from our own (possibly wrong) estimates. This is what makes RL function approximation fundamentally harder and less stable.

Linear Value Function Approximation

The simplest case: $Q(s,a) = \sum_{i} w_i^a \cdot f_i(s) = \mathbf{w}^a \cdot \mathbf{f}(s)$

Each weight $w_i^a$ represents the importance of feature $i$ for action $a$’s value. Weights are shared across all states, which is what enables generalization.

Quiz 1: What is $\frac{\partial Q(s,a)}{\partial w_i^a}$ for the linear case?

Answer: $f_i(s)$. Since $Q = \sum_j w_j f_j(s)$, differentiating w.r.t. $w_i$ treats all $f_j$ as constants. Only the $i$-th term survives, giving the feature value itself.

So the linear update simplifies to: $w_i^a \leftarrow w_i^a + \alpha \cdot \delta \cdot f_i(s)$ where $\delta$ is the TD error.

Successes and Failures

Successes:

- TD-Gammon (Tesauro): 3-layer backprop net learned Backgammon at expert level

- Atari/DQN (Mnih et al.): deep CNNs learned from pixels, human-level on many games

- Shuttle scheduling (Dietterich): value function approximation for NASA scheduling

Why Backgammon worked: the strong random component (dice rolls) forces exploration and means nearby states have nearby values. In deterministic games like tic-tac-toe, moving one piece can completely change the game, breaking the smoothness assumption that function approximation relies on.

The problem: these successes are matched by many failures. Students and researchers frequently see “death spirals” where bootstrapped predictions go south and the entire system diverges. It need not work, but it need not not work — there are no guarantees either way.

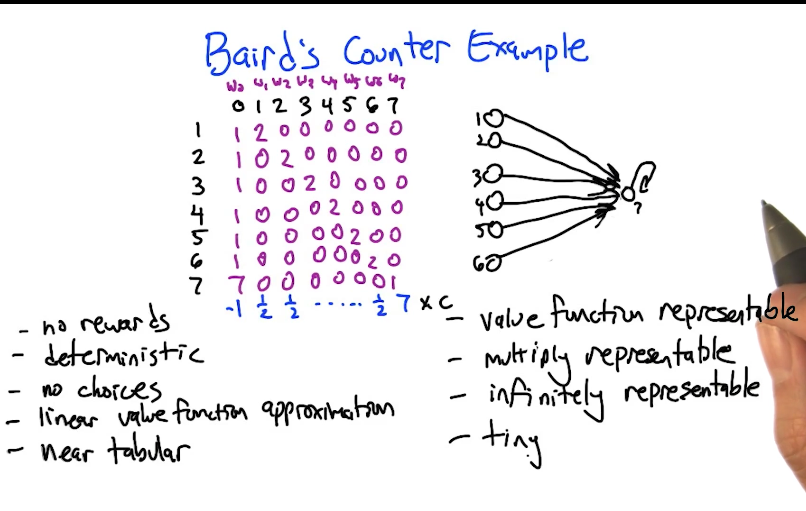

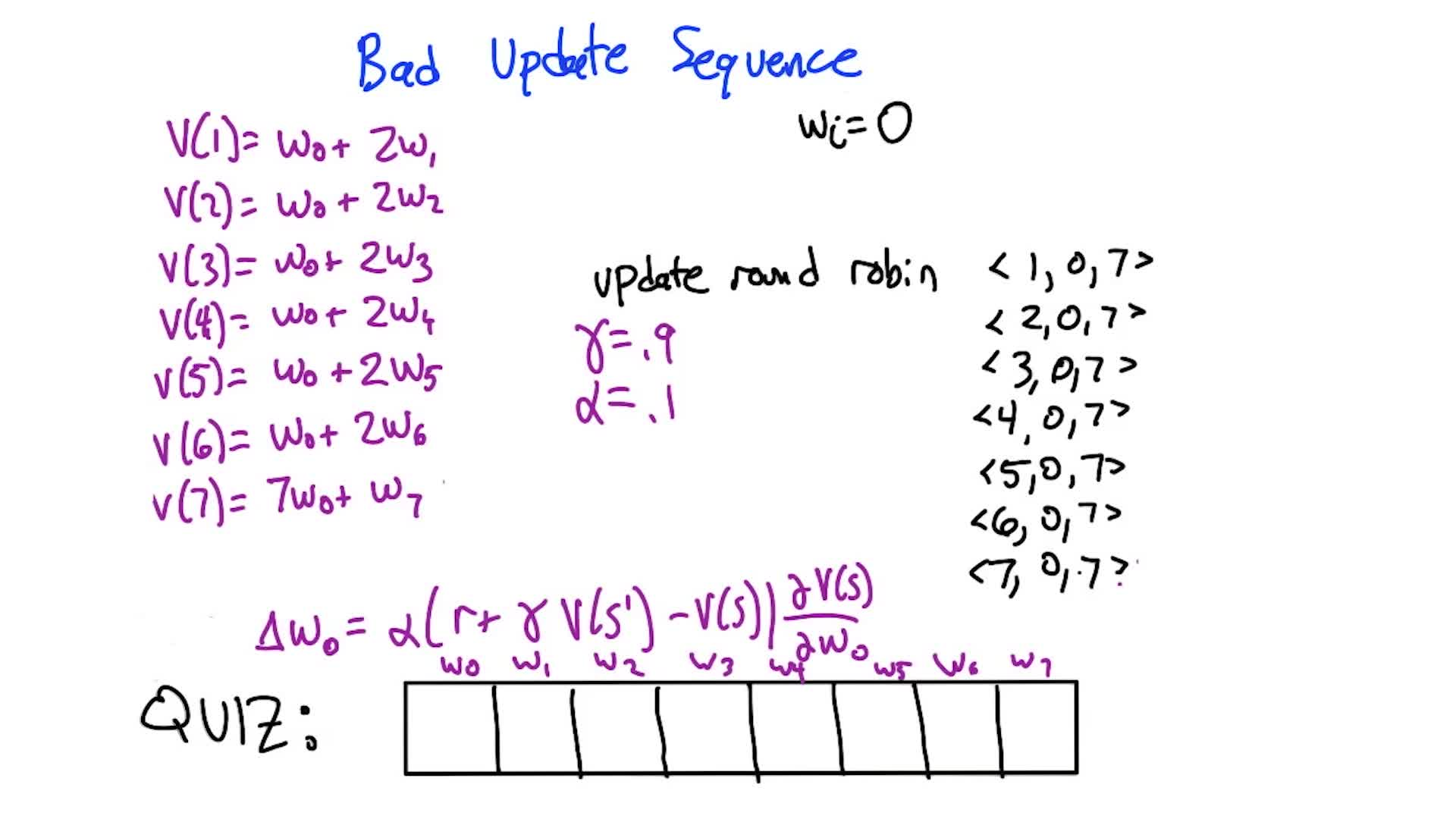

Baird’s Counterexample: Linear FA Can Diverge

A devastating example showing divergence even in the friendliest possible setting:

- 7 states, one action each, deterministic transitions, all rewards = 0

- Near-tabular features: 8 features, mostly indicator variables per state, plus one shared feature

- The optimal value function is all zeros, representable in infinitely many ways by these weights

Despite this, round-robin TD updates cause weights to spiral to infinity.

Quiz 2: Starting with all weights = 1 (so $V(1)=3$, $V(7)=8$), what is $w_0$ after the update for transition $1 \to 7$? ($\gamma=0.9$, $\alpha=0.1$)

Answer: $w_0 = 1 + 0.1 \times (0 + 0.9 \times 8 - 3) \times 1 = 1 + 0.1 \times 4.2 = \mathbf{1.42}$

Weight goes UP. After all 6 transitions (states 1-6 → 7), $w_0 \approx 10.4$. The self-transition $7 \to 7$ brings it down by ~5.2, but not enough. After one full round: $w_0 \approx 5.23$. It keeps growing every round → divergence.

Quiz 3: What if all weights start at 0?

Answer: All weights stay 0 forever. TD error = $0 + 0.9 \times 0 - 0 = 0$, so no updates happen. The exact answer is “sticky” in the deterministic case — but if transitions were stochastic, you could drift away from the right answer and then diverge.

Lesson: even with linear function approximation, a near-tabular representation, deterministic dynamics, zero rewards, and a tiny state space — TD updates can diverge. The shared weight (feature 0) creates interference between states that destabilizes learning.

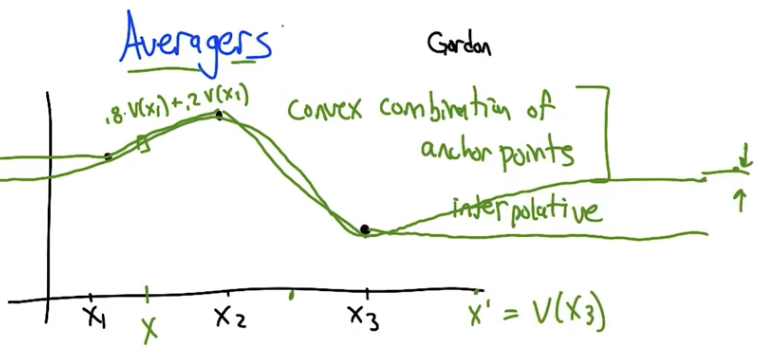

Averagers: Function Approximation That Provably Converges

Averagers (Gordon) are function approximators where the value at any state is a fixed convex combination of a set of anchor points (basis states):

\[V(s) = \sum_{s_b \in \text{basis}} w(s, s_b) \cdot V(s_b), \quad w(s, s_b) \geq 0, \quad \sum_{s_b} w(s, s_b) = 1\]Examples: k-NN (average of k nearest neighbors), distance-weighted interpolation, kernel methods.

Why averagers converge: the convex combination weights can be folded into the MDP’s transition function, creating a new “pseudo-transition” $T’(s, a, s_b)$ that is still a valid probability distribution. The result is an equivalent MDP over just the basis states, which has a unique value function by the standard Bellman theory. Value iteration and Q-learning on this induced MDP are guaranteed to converge.

The key property: because values are convex combinations, predictions can never extrapolate outside the range of anchor point values. This prevents the runaway divergence seen in Baird’s example.

Quiz 4: Name a supervised learning algorithm that is an averager.

Answer: k-NN (k-nearest neighbors). The value at a query point is $\frac{1}{k} \sum_{i=1}^{k} V(s_i)$ for the $k$ closest anchor points — a convex combination with equal weights $1/k$. Distance-weighted kernel methods also qualify.

Trade-off: averagers are safe (provably convergent) but limited. Value functions often have sharp cliffs and discontinuities that smooth interpolation can’t capture well. As the number of anchor points increases, the approximation error decreases — in the limit (infinite anchor points), averagers converge to the true value function.

Also mentioned (further reading): LSPI (Least Squares Policy Iteration) — linear function approximation with policy improvement built in, nice theoretical properties. GTD2 — a gradient TD method that incorporates the function approximator into the loss function itself, provably converges even where standard TD diverges (e.g. solves Baird’s counterexample).

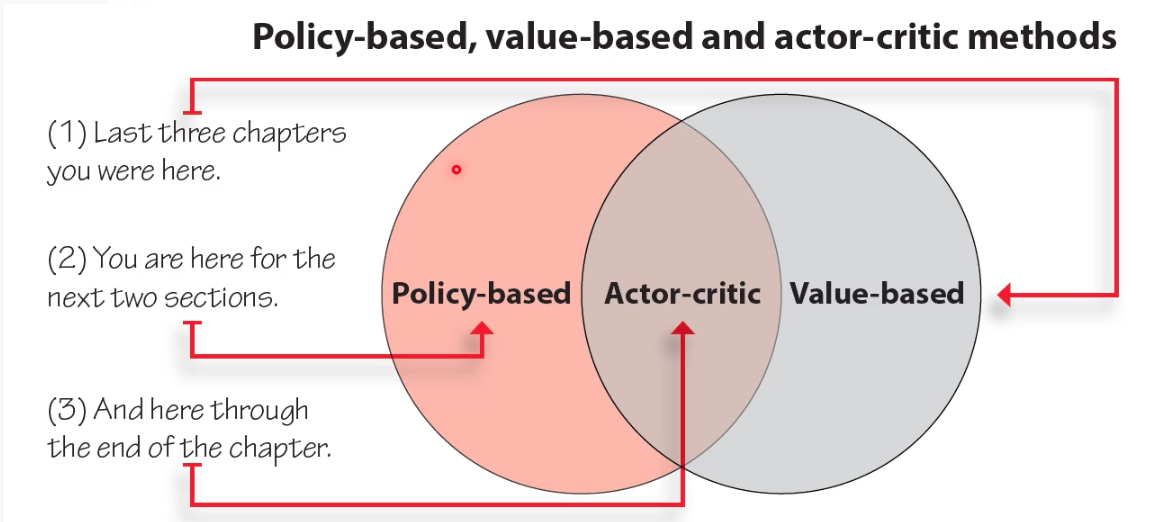

[Supplementary] Policy-based and Actor-Critic Methods

The previous supplementary covered value-based deep RL (DQN). This one covers the other two families: policy-based methods that directly learn a policy network, and actor-critic methods that combine both.

Value-Based vs Policy-Based Objectives

| Value-based (DQN) | Policy-based (REINFORCE, etc.) | |

|---|---|---|

| What’s learned | $Q(s,a;\theta)$ (value network) | $\pi(a \mid s; \theta)$ (policy network) |

| Objective | Minimize $\mathcal{L} = \mathbb{E}\left[(q_*(s,a) - Q(s,a;\theta))^2\right]$ | Maximize $J(\theta) = \mathbb{E}{s_0 \sim \mu_0}[V^{\pi\theta}(s_0)]$ |

| Output | Q-values per action → argmax for policy | Distribution over actions (mean + std dev) |

| Action space | Discrete only (finite outputs) | Discrete or continuous |

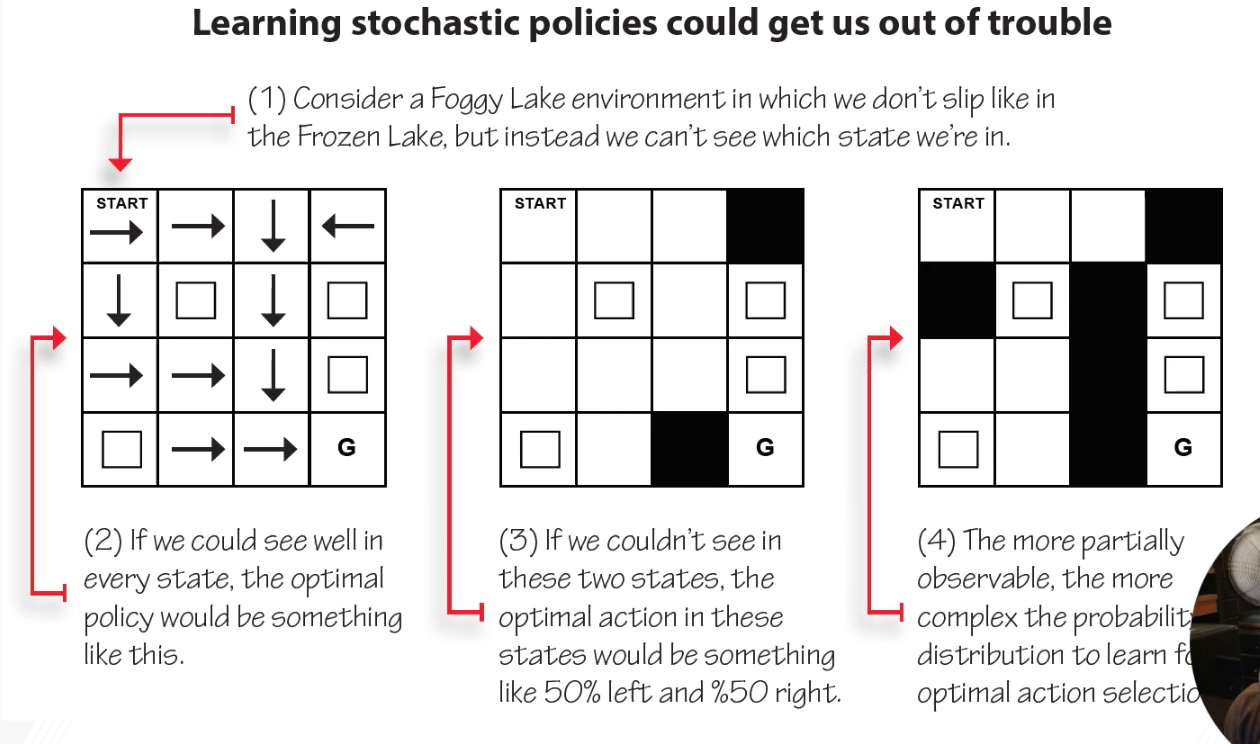

Why Policy-Based Methods?

Continuous actions: DQN outputs one Q-value per discrete action — can’t handle “jump 15cm.” Policy networks output distribution parameters ($\mu$, $\sigma$) and sample continuous actions.

Stochastic policies: sometimes optimal behavior is stochastic (e.g. rock-paper-scissors: uniform random is the only Nash equilibrium). Value-based methods produce deterministic policies via argmax.

Aliased states example: In a partially observable grid, two visually identical states may require opposite actions. A deterministic policy must pick one and be wrong half the time. A stochastic policy (50% left, 50% right) handles both correctly in expectation.

The Policy Gradient

The goal: find $\theta$ that maximizes $J(\theta) = \mathbb{E}[G(\tau)]$ where $\tau$ is a trajectory under $\pi_\theta$.

Through the log-derivative trick, the gradient becomes:

\[\nabla_\theta J(\theta) = \mathbb{E}_{\tau \sim \pi_\theta} \left[ \sum_{t=0}^{T} \nabla_\theta \log \pi_\theta(a_t \mid s_t) \cdot \Psi_t \right]\]where $\Psi_t$ is a score that tells us how good the action was. Different choices of $\Psi_t$ give different algorithms:

| $\Psi_t$ | Algorithm |

|---|---|

| $G(\tau)$ (full trajectory return) | REINFORCE (original) |

| $G_t$ (future rewards only) | REINFORCE with reward-to-go |

| $G_t - V(s_t)$ | Vanilla Policy Gradient (with baseline) |

| $r_{t+1} + \gamma V(s_{t+1}) - V(s_t)$ | Advantage Actor-Critic |

| $A^{GAE(\lambda)}$ | GAE (Generalized Advantage Estimation) |

Key insight: using only future rewards ($G_t$ instead of $G(\tau)$) reduces variance without adding bias — actions shouldn’t get credit for rewards that happened before them.

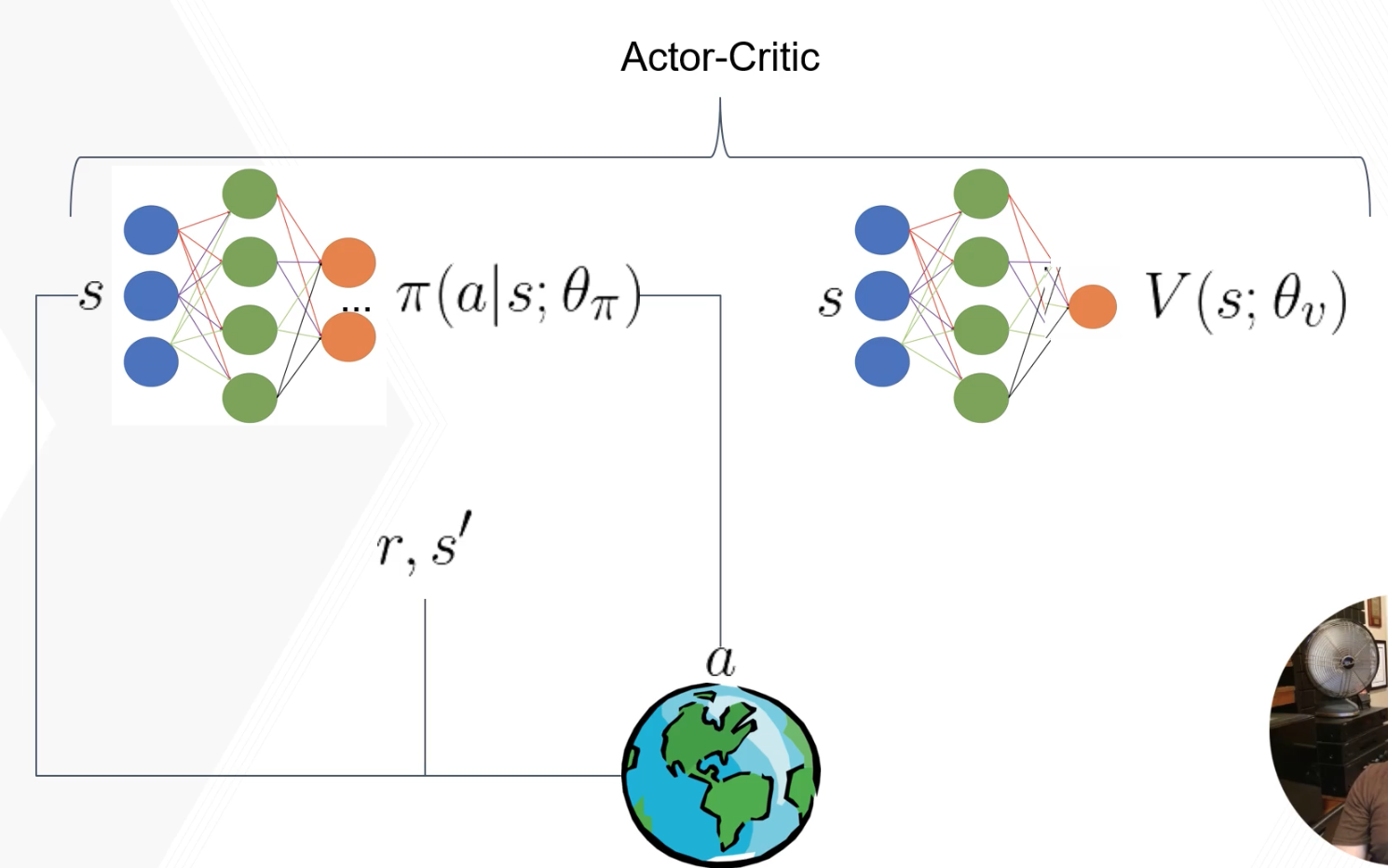

Actor-Critic Architecture

Actor-critic methods use two networks: an actor (policy $\pi(a \mid s; \theta_\pi)$) and a critic (value function $V(s; \theta_v)$).

How it works:

- Actor outputs action $a$ given state $s$

- Environment returns reward $r$ and next state $s’$

- Critic computes TD target: $r + \gamma V(s’; \theta_v)$

- Train critic: minimize $(r + \gamma V(s’; \theta_v) - V(s; \theta_v))^2$

- Compute advantage: $A(s,a) = r + \gamma V(s’; \theta_v) - V(s; \theta_v)$

- Train actor: $\nabla_\theta J = A(s,a) \cdot \nabla_\theta \log \pi_\theta(a \mid s)$

The advantage $A(s,a)$ tells the actor: “was the action you took better or worse than what the critic expected?” Positive → reinforce that action. Negative → discourage it.

Terminology note: Sutton argues that only methods using bootstrapped value functions (TD-based critics) are true “actor-critic.” Methods using Monte Carlo returns for the critic (like REINFORCE with baseline) are technically “policy gradient with baseline.” The terminology is used inconsistently in the literature.

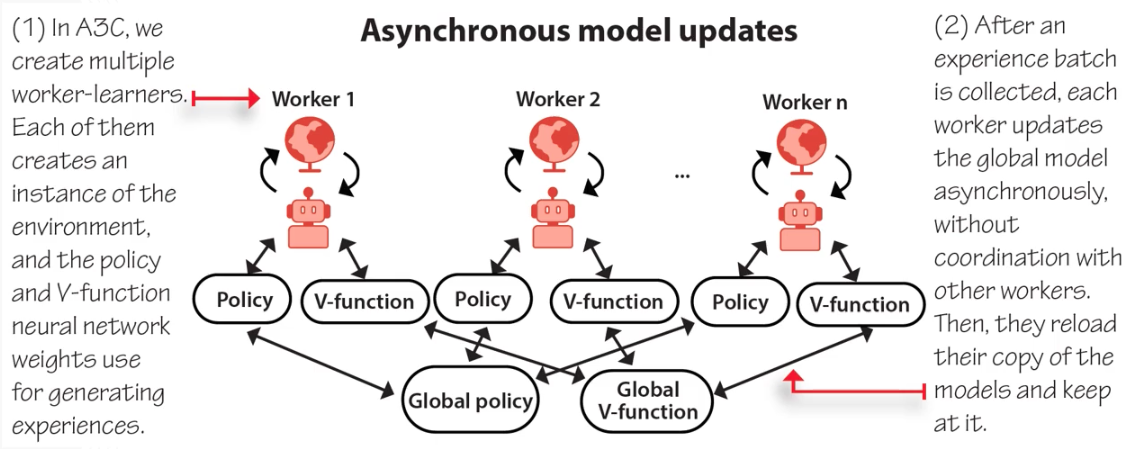

A3C: Asynchronous Advantage Actor-Critic

Instead of a replay buffer (DQN’s solution to correlated data), A3C uses parallel workers:

- Multiple workers, each with a copy of the policy + value network and their own environment instance

- Workers collect experience independently (decorrelates data)

- Each worker computes gradients and asynchronously updates the shared global network (Hogwild! style: no locks)

- Workers periodically sync their local copy with the global network

A3C is CPU-friendly: no GPU needed. Workers = CPU threads. Good for laptops.

A3C uses n-step bootstrapping for the advantage estimate, plus an entropy bonus $\beta H(\pi(s))$ in the policy loss to encourage exploration.

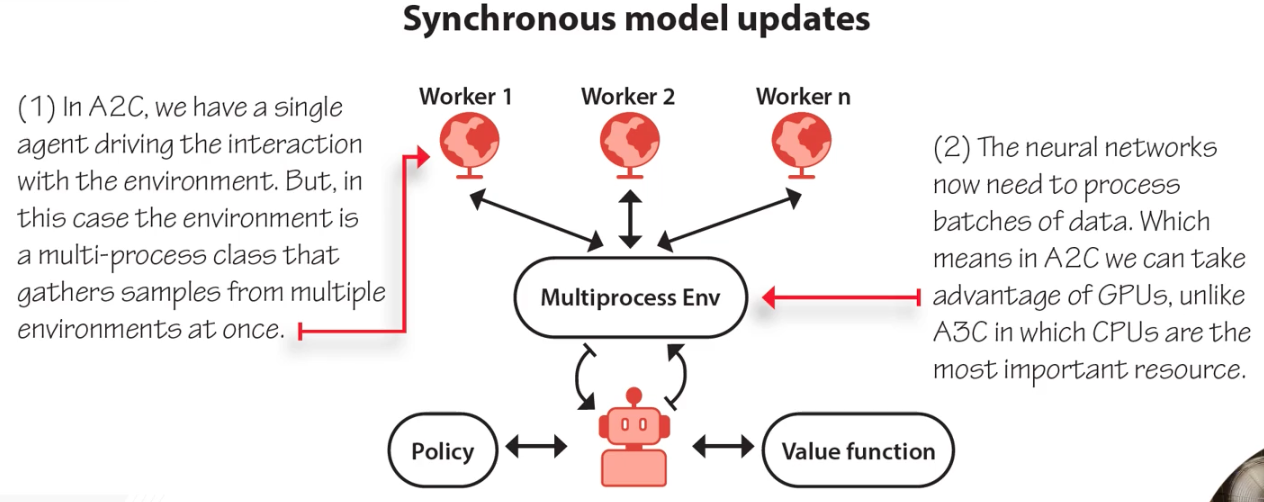

A2C: Advantage Actor-Critic (Synchronous)

Same as A3C but with a synchronization barrier: all workers finish collecting before a single batched update to the global network. This allows GPU utilization (batched forward/backward passes) at the cost of workers waiting for each other.

GAE: Generalized Advantage Estimation

Just as TD($\lambda$) blends 1-step and $\infty$-step value estimates, GAE blends advantage estimates:

\[A^{GAE(\lambda)}(s_t, a_t) = \sum_{k=0}^{\infty} (\gamma \lambda)^k \delta_{t+k}, \quad \text{where } \delta_t = r_{t+1} + \gamma V(s_{t+1}) - V(s_t)\]- $\lambda = 0$: one-step advantage (low variance, high bias)

- $\lambda = 1$: Monte Carlo advantage (high variance, low bias)

- $\lambda \in (0,1)$: blends both (commonly $\lambda = 0.95$)

GAE is used by most modern policy gradient algorithms (PPO, A2C). It’s the advantage-function equivalent of TD($\lambda$) for value functions.

Weight Sharing

The actor and critic can share hidden layers (one network, two output heads: policy + value). This is more parameter-efficient but can cause interference between the two objectives. The Phasic Policy Gradient (PPG) paper addresses this by alternating between phases of policy and value training.

DDPG: Deep Deterministic Policy Gradient

For continuous control, DDPG uses a deterministic actor $\mu(s; \theta_\mu)$ that directly outputs the optimal action (not a distribution), plus a Q-network critic $Q(s, a; \theta_Q)$:

- Actor outputs: $a = \mu(s)$ (a concrete action vector)

- Critic evaluates: $Q(s, \mu(s))$ (value of that action)

- Like DQN: uses replay buffer + target networks (but with soft updates: $\theta^- \leftarrow \tau \theta + (1-\tau)\theta^-$, typically $\tau = 0.01$)

DQN vs DDPG target network updates: DQN copies weights every $C$ steps (hard update). DDPG blends 1% of online weights into target every step (soft/Polyak update). Soft updates are smoother.

Algorithm Summary

| Algorithm | Type | Key Feature |

|---|---|---|

| REINFORCE | Policy gradient | Simplest: uses MC returns as $\Psi_t$ |

| A3C | Actor-critic | Async parallel workers, CPU-friendly |

| A2C | Actor-critic | Sync parallel workers, GPU-friendly |

| PPO | Actor-critic | Clipped objective for stable updates; go-to algorithm |

| DDPG | Deterministic actor-critic | Continuous control, replay buffer + soft target updates |

| TD3 | Deterministic actor-critic | Fixes DDPG overestimation (twin critics, delayed actor updates) |

| SAC | Entropy-regularized actor-critic | Maximizes reward + entropy; robust exploration |

6. Partially Observable MDPs (POMDPs)

In all the RL we’ve done so far, the agent always knows exactly what state it’s in. In the real world, that’s rarely true: you infer the state from noisy, incomplete observations. POMDPs formalize this — and things get much harder.

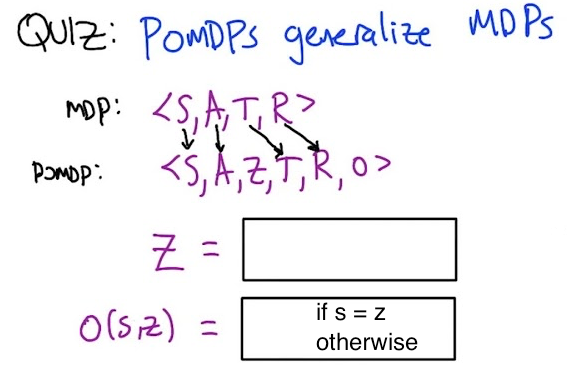

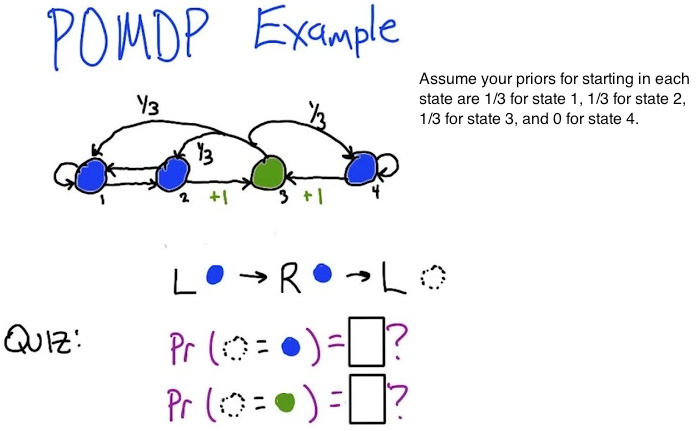

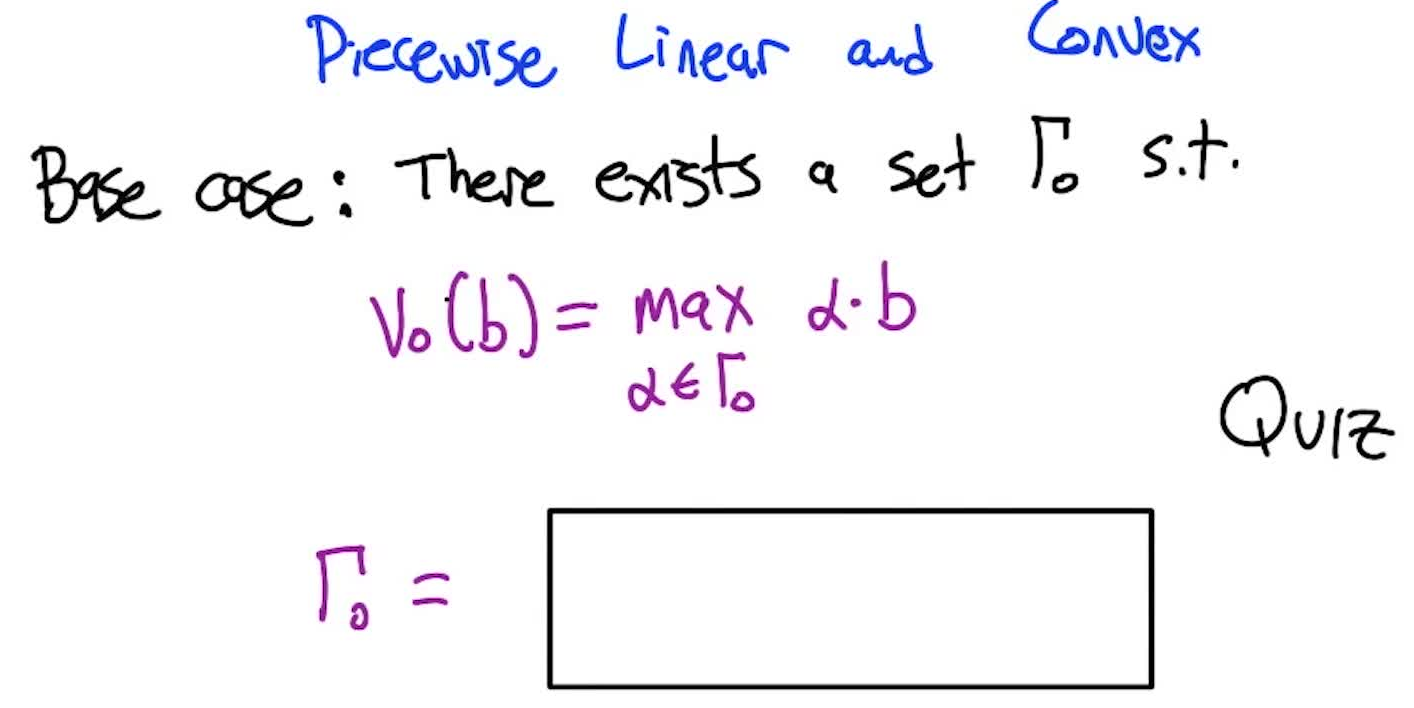

POMDP Definition